You may have noticed that synthesized voices are becoming more commonplace. Hundreds, if not thousands, of electronic toys and gadgets speak to their owners in robotic voices. Whether it is a talking baby doll, a talking pedometer, or a new automated telephone system, several products that use text-to-speech are released every day.

Text-to-speech (TTS), also known as speech synthesis, is the process of transforming typed text into audible speech. It is preferable to pre-recorded audio whenever you cannot know ahead of time exactly what must be said. Text-to-speech makes it possible to speak dynamic information, such as data retrieved from a database or text spoken by a user that is repeated back for confirmation.

Experimenting with Text-to-Speech

If you have never seen (or rather, heard) text-to-speech in action, you may want to download a free copy of ReadPlease 2003. The product reads text from the Windows clipboard. To use it, you simply paste some text into the ReadPlease editor (see Figure 1) and, assuming your PC speakers are turned on, you’ll hear the text spoken back to you. Currently, the product works only on Windows desktop operating systems, but there are plans to release versions for Mac, Unix, Palm, and Windows CE operating systems as well.

The interesting thing about the ReadPlease application is that you can use the ReadPlease editor to experiment with your text-to-speech preferences. For example, you can adjust the speed at which text is spoken by moving the Speed slider control seen in Figure 1 up and down. You can also change the voice by clicking the arrow buttons underneath the face picture icon.

| What You Need |

| Visual Studio .NET 2003, Microsoft Speech Application SDK 1.1 |

| Figure 1. The ReadPlease 2003 Application: This Windows application reads any text pasted into the edit field from the clipboard. |

Clicking the Tools menu and then selecting Options allows you to experiment further with the text-to-speech editor, for example by adjusting how long the speech engine pauses between paragraphs. Upgrading to the ReadPlease Plus version gives you access to additional options such as a pronunciation editor, which lets you specify how a particular word will be pronounced. The ReadPlease Plus version also includes a taskbar you can dock at the top of your Windows Desktop, so you can quickly drag text from any text-based application into the taskbar and have it read to you.

By default, the ReadPlease application uses the built-in Microsoft voices (Mark, Mike, Sam, or Marilyn). However, you can optionally purchase higher-quality AT&T Natural Voices, such as those in the AT&T Natural Voices Starter Pack ($25.00 US). The Starter Pack includes the 8K versions of “Mike” and “Crystal,” both of which sound much better than the default Microsoft voices.

| Author’s Note: The term 8K means that the audio in the wave file was sampled at 8,000 samples per second (8 kHz). Another format is 16K, which results in a clearer and more natural-sounding voice. In general, the higher the sample rate, the better the voice quality. |
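
To make the difference between the two formats concrete, the sketch below estimates how much uncompressed audio data one second of speech produces at each sample rate. The 16-bit, single-channel format is an assumption chosen for illustration; the article does not state the bit depth of the voice files.

```javascript
// Rough size of uncompressed PCM audio at a given sample rate.
// Assumption (not from the article): 16-bit samples, one channel (mono).
function pcmBytesPerSecond(sampleRateHz, bitsPerSample = 16, channels = 1) {
  return sampleRateHz * (bitsPerSample / 8) * channels;
}

const rate8k = pcmBytesPerSecond(8000);   // 16000 bytes per second
const rate16k = pcmBytesPerSecond(16000); // 32000 bytes per second

console.log(rate8k, rate16k, rate16k / rate8k);
```

Doubling the sample rate doubles the amount of data, which is the price paid for the clearer 16K voices.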

If you’re interested, you can hear a sample of these and other voices.

Microsoft Speech Application SDK 1.1

In 2004, Microsoft released Microsoft Speech Server along with a free SDK that lets you develop Web-based speech applications that run on Speech Server. You can use the SDK to build telephony or voice-only applications in which the computer-to-user interaction is done using a telephone. You can also build multimodal applications in which users can choose between using speech or traditional Web controls as input.

The Microsoft text-to-speech engine synthesizes text by first breaking down the words into phonemes. Phonemes are the basic elements of human language. They represent a set of “phones,” which are the sounds that form words. The text-to-speech engine then analyzes the extracted phonemes and converts them to symbols used to generate the digital audio speech.
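
The first step described above, turning words into phonemes, can be pictured with a toy dictionary lookup. This is only a sketch and not the Microsoft engine's algorithm: the three-word lexicon is a hypothetical stand-in for a real pronunciation dictionary, and the symbols are ARPAbet-style with stress marks omitted.

```javascript
// Toy illustration of the "text -> phonemes" step. This is NOT the Microsoft
// engine's algorithm; TOY_LEXICON is a hypothetical stand-in for a real
// pronunciation dictionary (ARPAbet-style symbols, stress marks omitted).
const TOY_LEXICON = {
  hello: ["HH", "AH", "L", "OW"],
  world: ["W", "ER", "L", "D"],
  text: ["T", "EH", "K", "S", "T"],
};

// Split text into lowercase words, dropping punctuation.
function tokenize(text) {
  return text.toLowerCase().match(/[a-z']+/g) || [];
}

// Look each word up in the lexicon. Unknown words get null so that a later
// stage could fall back to letter-to-sound rules.
function toPhonemes(text) {
  return tokenize(text).map((word) => ({
    word,
    phonemes: TOY_LEXICON[word] || null,
  }));
}

console.log(toPhonemes("Hello, world!"));
```

A production engine handles far more than a lookup (abbreviations, numbers, homographs, prosody), but the sketch shows why the phoneme sequence, rather than the spelling, is what drives audio generation.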

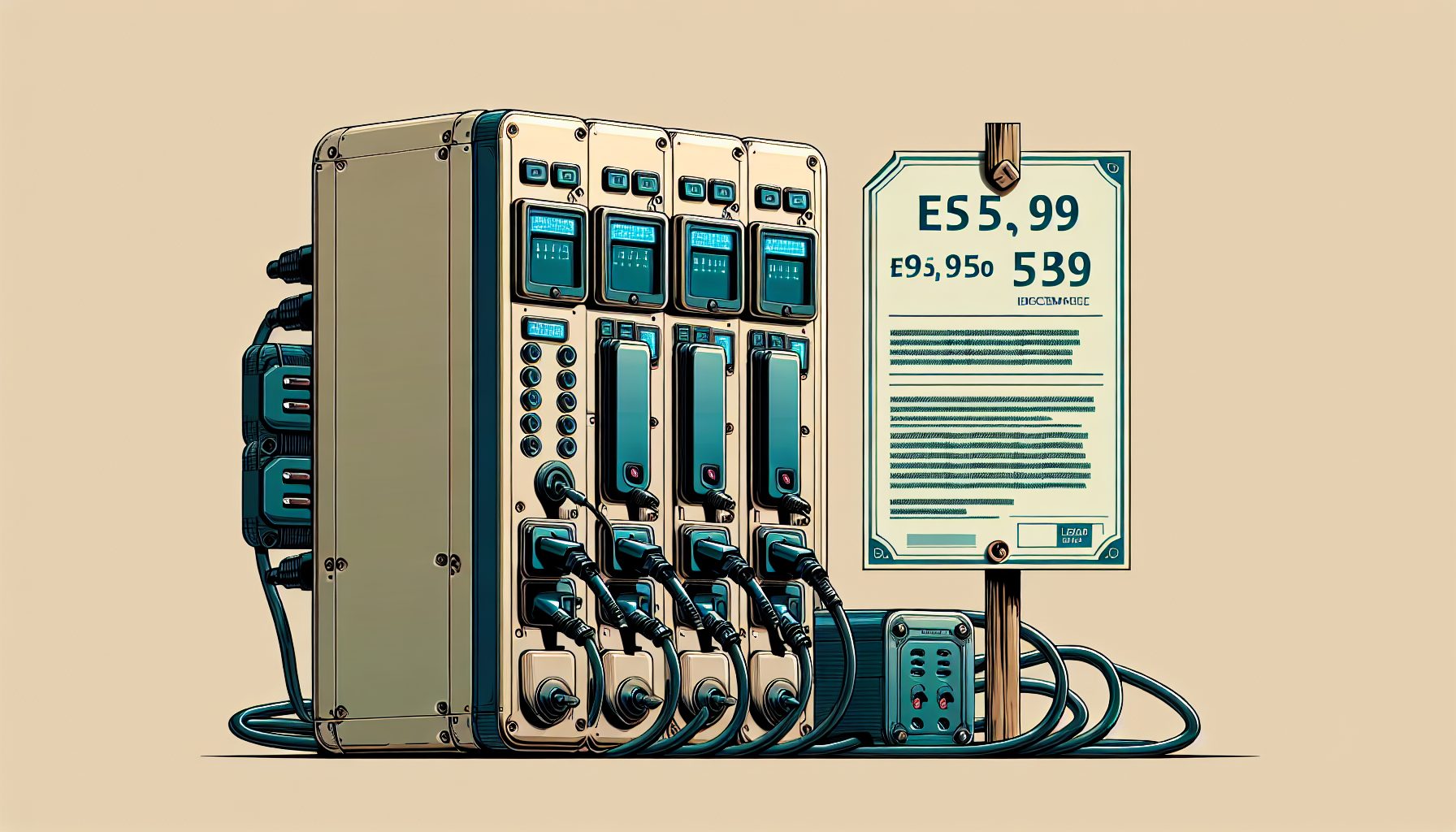

You can use the downloadable sample application (ExploringTextToSpeech.csproj) that accompanies this article to experiment with configurable aspects of the Microsoft text-to-speech engine. The multimodal application contains one Web page (see Figure 2) into which you enter some text. You can then click a button to hear the text read in one of the following ways:

- Speak Text Normally: Provides a benchmark for testing

- Say as Acronym: The text “ASP” is spoken as “A.S.P.”

- Say as Name: “Mr. John Doe” is pronounced as “Mister John Doe”

- Say as Date: The date is formatted as month, day, year

- Say as Web Address: The text is formatted as a Uniform Resource Identifier (URI)

- Say as Digits: A number entered as text is spoken as a series of digits

- High Pitch/Slow Rate: The text is read with a high pitch and a slow rate

- Rate Fast/Volume Loud: The text is read with a fast rate and loud volume

- Low Pitch/Volume Soft: The text is read with a low pitch and a soft volume

| Author’s Note: In cases where the text to be spoken is not known ahead of time, using a text-to-speech engine is unavoidable; however, you can generally get better quality from recorded audio. When audio quality is critical, you can use the Microsoft Speech Application Software Development Kit (SASDK) to record audio. For example, you may want to use recorded audio to prompt users for information. The recorded audio can be broken out into a series of prompts that are concatenated at runtime. |

| Figure 2. Sample Application: You can use this multimodal application to hear text spoken in a variety of ways. |

Multimodal applications use a prompt control to specify audio that is played to a user. The prompt control contains an InlineContent property that may contain either a Content or a Value Basic Speech Control. The Content control specifies a specific prompt file containing stored audio recordings. The Value control specifies elements from an HTML Web page. The sample application uses a Value control that references the input element named txtText (the “Type some text here:” field in Figure 2). Here’s the HTML that represents the markup for a prompt:

Speech Synthesis Markup Language

The text-to-speech engine makes certain default assumptions about how to speak the text referenced by the InlineContent property, but developers can control the way the engine renders the audio by using Speech Synthesis Markup Language (SSML) elements. SSML is an XML-based markup language based on recommendations made by the World Wide Web Consortium (W3C). Table 1 lists the SSML elements that are supported by the SASDK.

Table 1. Supported SSML Elements: The table lists the SSML elements supported by the SASDK and used to control the way text is rendered by the text-to-speech engine.

| SSML Element | Description |
| --- | --- |
| ssml:paragraph/ssml:sentence | Used to separate text into sentences and paragraphs. |
| ssml:say-as | Used to specify the way text is spoken. It accepts several different attributes to identify the type of text. |
| ssml:phoneme | Used to control the way a word is pronounced. |
| ssml:sub | Used to specify a substitute word or phrase in place of the specified text. |
| ssml:emphasis | Used to increase the emphasis placed on a word or phrase. |
| ssml:break | Used to add pauses between certain words in a text. |
| ssml:prosody | Used to control the pitch, rate, and volume of the text. |
| ssml:audio | Used to insert recorded audio files. |
| ssml:mark | Used to insert a mark at a certain point in the text. This mark can then be used to signify an event or to trigger an action. |
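
To give a feel for what a few of the elements in Table 1 look like as markup, here is a minimal sketch of JavaScript helpers that build SSML fragments as strings. The element and attribute names follow the W3C SSML 1.0 Recommendation; the SASDK's `ssml:`-prefixed dialect may use different attribute names, so treat the exact spelling as an assumption. The helper names (`escapeXml`, `pause`, `emphasize`, `substitute`) are hypothetical and are not part of the SASDK.

```javascript
// Minimal SSML string builders. Element and attribute names follow the W3C
// SSML 1.0 Recommendation; the SASDK's "ssml:" dialect may differ (assumption).

// Escape characters that are special in XML text and attribute values.
function escapeXml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Insert a pause of the given length in milliseconds.
function pause(ms) {
  return `<break time="${ms}ms"/>`;
}

// Stress a word or phrase.
function emphasize(text, level = "strong") {
  return `<emphasis level="${level}">${escapeXml(text)}</emphasis>`;
}

// Speak `alias` in place of the written text.
function substitute(text, alias) {
  return `<sub alias="${escapeXml(alias)}">${escapeXml(text)}</sub>`;
}

const fragment =
  "Welcome to the " + substitute("W3C", "World Wide Web Consortium") + "." +
  pause(500) + " This is " + emphasize("important") + ".";

console.log(fragment);
```

Escaping user-supplied text before wrapping it in markup matters here because the text typed into a Web page can contain characters such as `<` or `&` that would otherwise break the XML.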

The sample application illustrates the say-as and prosody SSML elements in action. Each button on the Default.aspx page corresponds to a prompt control. These prompt controls include an ssml:say-as or an ssml:prosody element within the InlineContent element. Here’s an example of the HTML markup for one of these elements:

Prompts start when the user clicks one of the buttons, which executes JavaScript such as the following:

    function SayAsAcronym() {
        prmSayAsAcronym.Start();
    }

In the example above, the prompt named prmSayAsAcronym includes the ssml:say-as element, which specifies that any text contained within the txtText input element should be spoken as an acronym. So, if you were to type “ASP” into the text element and click “Say As Acronym,” the text-to-speech engine would read each individual letter.
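
As a rough illustration of the acronym and digit behaviors, the sketch below returns a readable approximation of what a listener would hear. It does not call the speech engine, and the helper names `spellAsAcronym` and `speakAsDigits` are hypothetical; the real engine's handling of punctuation and spacing may differ.

```javascript
// Illustration only: approximates what a listener hears for two say-as modes.
// The real engine produces audio; these helpers just return readable text.
const DIGIT_WORDS = [
  "zero", "one", "two", "three", "four",
  "five", "six", "seven", "eight", "nine",
];

// Each letter or digit is read on its own, e.g. "ASP" becomes "A. S. P."
function spellAsAcronym(text) {
  return text
    .toUpperCase()
    .replace(/[^A-Z0-9]/g, "")
    .split("")
    .map((ch) => ch + ".")
    .join(" ");
}

// Each digit is read separately; non-digit characters are ignored.
function speakAsDigits(text) {
  return text
    .replace(/\D/g, "")
    .split("")
    .map((d) => DIGIT_WORDS[Number(d)])
    .join(" ");
}

console.log(spellAsAcronym("ASP")); // A. S. P.
console.log(speakAsDigits("2003")); // two zero zero three
```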

To experiment with the sample application, take some time to enter different snippets of text and then click each of the buttons to hear how the text-to-speech engine interprets the text. I urge you to change the element values and experiment with the way each control is rendered. The SASDK offers developers a fine level of control over how the text-to-speech engine renders text, so experimentation can result in a more natural-sounding speech-based application.
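
One way to organize this kind of experimentation is to keep the pitch, rate, and volume settings in a small table and generate the prosody markup from it. The following is a minimal sketch under stated assumptions: the attribute names and values (`high`, `slow`, `loud`, and so on) come from the W3C SSML 1.0 Recommendation, the preset names are hypothetical, and the exact values used by the sample application are not shown in this article.

```javascript
// Prosody presets mirroring the three pitch/rate/volume buttons in the sample.
// Values are taken from the W3C SSML 1.0 Recommendation; the settings the
// sample application actually uses are not shown, so these are assumptions.
const PRESETS = {
  highPitchSlowRate: { pitch: "high", rate: "slow" },
  rateFastVolumeLoud: { rate: "fast", volume: "loud" },
  lowPitchVolumeSoft: { pitch: "low", volume: "soft" },
};

// Escape characters that are special in XML text.
function escapeText(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Wrap text in a <prosody> element carrying only the attributes that are set.
// With no settings, the text is returned escaped but unwrapped.
function withProsody(text, settings) {
  const attrs = ["pitch", "rate", "volume"]
    .filter((name) => settings[name] !== undefined)
    .map((name) => `${name}="${settings[name]}"`)
    .join(" ");
  const body = escapeText(text);
  return attrs ? `<prosody ${attrs}>${body}</prosody>` : body;
}

console.log(withProsody("Hello there", PRESETS.highPitchSlowRate));
console.log(withProsody("Hello there", PRESETS.lowPitchVolumeSoft));
```

Changing a single value in `PRESETS`, such as `rate: "x-slow"`, and listening to the result is a quick way to find settings that sound natural for a given voice.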