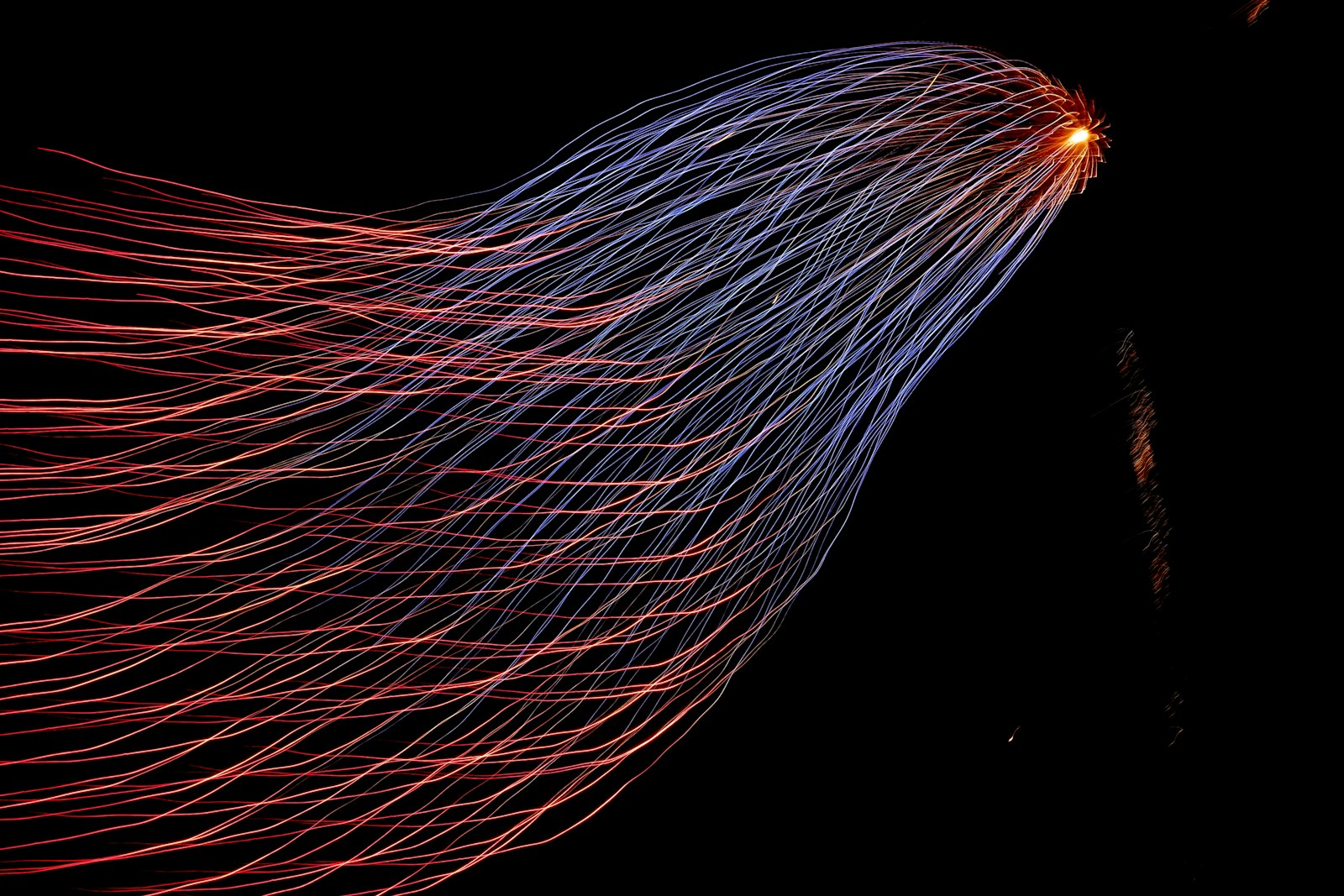

In large-scale systems, one request often triggers many parallel tasks. That pattern is called fan-out. Once those tasks finish, the system gathers the results back together through fan-in.

These two patterns are everywhere in distributed systems. Search engines use them to query many shards at once. Microservices use them to assemble API responses from multiple backends. Data platforms use them to split jobs across workers and merge the output later.

At a simple level, fan-out spreads work across many components, and fan-in collects the results into one outcome.

Why These Patterns Matter

The main benefit is speed. Instead of making five service calls one after another, a system can run them in parallel and wait only for the slowest one. If each call takes about 100 ms, that is roughly 100 ms in total instead of 500 ms.

This is one reason modern architectures rely so heavily on fan-out. A homepage request, for example, might need user data, recommendations, ads, and inventory status. Running those in sequence is slow. Running them in parallel is much faster.

Fan-in is what makes that parallelism useful. It merges those separate responses into one payload the user can actually consume.
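The homepage example can be sketched in a few lines of Python with `asyncio`. This is a minimal illustration, not a production design: the `fetch` helper, the backend names, and the simulated delays are all assumptions made up for the example.

```python
import asyncio
import time


async def fetch(name: str, delay: float) -> tuple[str, str]:
    """Hypothetical backend call; the sleep stands in for network latency."""
    await asyncio.sleep(delay)
    return name, f"{name}-data"


async def build_homepage() -> dict[str, str]:
    # Fan-out: create all four calls so they can run concurrently.
    calls = [
        fetch("user", 0.05),
        fetch("recommendations", 0.10),
        fetch("ads", 0.03),
        fetch("inventory", 0.08),
    ]
    # Fan-in: gather waits for every call and returns results in call order.
    results = await asyncio.gather(*calls)
    # Merge the separate responses into one payload.
    return dict(results)


if __name__ == "__main__":
    start = time.perf_counter()
    page = asyncio.run(build_homepage())
    elapsed = time.perf_counter() - start
    print(page)
    print(f"elapsed: {elapsed:.2f}s")
```

With these simulated delays, the elapsed time should land close to the slowest call (0.10 s) rather than the sum of all four (0.26 s).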

Where You See Fan-Out and Fan-In

A search engine fans a query out to many index shards, then fans the results back in to rank and merge them.
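A rough sketch of that shard query, assuming a tiny in-memory index: the `SHARDS` data, the document IDs, and the scores are invented for illustration, and `query_shard` only pretends to make a network call.

```python
import asyncio
import heapq

# Hypothetical shards: each holds (doc_id, relevance_score) pairs.
SHARDS = {
    "shard-0": [("doc-a", 0.91), ("doc-b", 0.40)],
    "shard-1": [("doc-c", 0.75), ("doc-d", 0.88)],
    "shard-2": [("doc-e", 0.10), ("doc-f", 0.66)],
}


async def query_shard(shard: str, k: int) -> list:
    """Return the shard's local top-k hits."""
    await asyncio.sleep(0)  # stand-in for a network round trip
    return heapq.nlargest(k, SHARDS[shard], key=lambda hit: hit[1])


async def search(k: int) -> list:
    # Fan-out: ask every shard for its local top-k at the same time.
    per_shard = await asyncio.gather(*(query_shard(s, k) for s in SHARDS))
    # Fan-in: flatten the partial results and keep the global top-k.
    all_hits = [hit for hits in per_shard for hit in hits]
    return heapq.nlargest(k, all_hits, key=lambda hit: hit[1])


if __name__ == "__main__":
    print(asyncio.run(search(3)))
```

Each shard only needs to return its own top `k`, because no document outside a shard's local top `k` can appear in the global top `k`.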

A microservices app might send one request to profile, billing, inventory, and recommendation services, then combine the responses into one API result.

A data processing framework does the same thing on a larger scale. It distributes tasks across many workers, then aggregates the outputs into a final dataset.
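The same split-then-merge shape can be shown at toy scale with a word count. This sketch uses a thread pool only to illustrate the structure; the function names and the chunking scheme are assumptions, and a real framework would distribute the chunks across machines.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def count_words(chunk: list[str]) -> Counter:
    """Worker task: count the words in one chunk of lines."""
    return Counter(word for line in chunk for word in line.split())


def word_count(lines: list[str], workers: int = 4) -> Counter:
    # Fan-out: split the input into one chunk per worker.
    chunks = [lines[i::workers] for i in range(workers)]
    total = Counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Fan-in: fold each partial count into the final result.
        for partial in pool.map(count_words, chunks):
            total.update(partial)
    return total


if __name__ == "__main__":
    print(word_count(["a b", "b c", "a a"]))
```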

The Tradeoffs

These patterns improve performance, but they also make systems harder to operate.

The biggest issue is tail latency. When one request has to wait for many downstream calls, the slowest dependency determines the total response time, and the more calls there are, the more likely it is that at least one of them is slow.
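A back-of-the-envelope calculation shows how quickly this grows. The sketch below assumes each downstream call is independently "slow" 1% of the time (that is, it exceeds its own 99th-percentile latency); both the independence and the 1% figure are simplifying assumptions, and the function name is made up.

```python
def p_any_slow(n_calls: int, p_slow: float = 0.01) -> float:
    """Probability that at least one of n independent calls is slow."""
    return 1.0 - (1.0 - p_slow) ** n_calls


if __name__ == "__main__":
    for n in (1, 10, 50, 100):
        print(f"{n:>3} calls: {p_any_slow(n):.1%} chance of hitting a slow call")
```

Under these assumptions, a request that fans out to 10 services hits at least one slow call about 10% of the time, and one that fans out to 100 does so roughly 63% of the time.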

Fan-out also amplifies the load. One incoming request might trigger 10, 20, or 50 backend calls. At scale, that multiplication can overwhelm services fast.

Fan-in introduces coordination problems, too. Systems have to decide what to do with partial failures, slow responses, retries, and timeouts.

That is why resilient systems use safeguards like timeouts, circuit breakers, backpressure, and partial responses.
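Two of those safeguards, timeouts and partial responses, can be combined in one small sketch. It assumes a hypothetical `call_backend` coroutine and an overall deadline chosen by the caller; backends that miss the deadline or fail are reported as `None` instead of failing the whole request.

```python
import asyncio
from typing import Dict, Optional


async def call_backend(name: str, delay: float) -> str:
    """Hypothetical backend; the sleep simulates its response time."""
    await asyncio.sleep(delay)
    return f"{name}-ok"


async def fan_out_with_deadline(
    backends: Dict[str, float], deadline: float
) -> Dict[str, Optional[str]]:
    # Fan-out: start one task per backend.
    tasks = {
        name: asyncio.create_task(call_backend(name, delay))
        for name, delay in backends.items()
    }
    # Wait until everything finishes or the deadline passes.
    done, pending = await asyncio.wait(tasks.values(), timeout=deadline)
    # Cancel stragglers and let the cancellations settle.
    for task in pending:
        task.cancel()
    await asyncio.gather(*pending, return_exceptions=True)
    # Fan-in: keep successful results, mark everything else as missing.
    response: Dict[str, Optional[str]] = {}
    for name, task in tasks.items():
        ok = task in done and task.exception() is None
        response[name] = task.result() if ok else None
    return response


if __name__ == "__main__":
    print(asyncio.run(fan_out_with_deadline({"fast": 0.01, "slow": 1.0}, 0.2)))
```

Whether a missing field is acceptable depends on the product: a homepage can usually render without recommendations, but probably not without user data.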

A Simple Example

Imagine one API request fans out to 10 services, and each service makes 2 database calls.

That means one user request creates 10 service calls and 20 database calls, or 30 backend operations in total.

Now multiply that by 5,000 requests per second, and you get 50,000 service calls and 100,000 database calls per second.
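The multiplication is easy to check in code. A minimal sketch, where the function name and the split between service calls and database calls are illustrative choices:

```python
def backend_ops_per_second(
    rps: int, services: int, db_calls_per_service: int
) -> dict[str, int]:
    """Estimate downstream load created by one tier of fan-out."""
    service_calls = rps * services
    db_calls = service_calls * db_calls_per_service
    return {
        "service_calls": service_calls,
        "db_calls": db_calls,
        "total": service_calls + db_calls,
    }


if __name__ == "__main__":
    print(backend_ops_per_second(rps=5_000, services=10, db_calls_per_service=2))
```

Changing any one input shows how the load scales: doubling the number of services doubles both the service calls and the database calls.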

This is why fan-out needs limits. It is powerful, but it can become expensive very quickly.
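One simple way to impose such a limit is to cap how many calls are in flight at once. The sketch below uses an `asyncio.Semaphore`; `bounded_fan_out` and the `demo` worker are hypothetical names, and the limit of 5 is an arbitrary example value.

```python
import asyncio


async def bounded_fan_out(items, worker, limit: int) -> list:
    """Run worker(item) for every item, at most `limit` at a time."""
    sem = asyncio.Semaphore(limit)

    async def run(item):
        async with sem:  # blocks while `limit` calls are already running
            return await worker(item)

    return await asyncio.gather(*(run(item) for item in items))


async def demo(n_items: int = 20, limit: int = 5):
    """Track peak concurrency to show that the cap holds."""
    state = {"in_flight": 0, "peak": 0}

    async def worker(item: int) -> int:
        state["in_flight"] += 1
        state["peak"] = max(state["peak"], state["in_flight"])
        await asyncio.sleep(0.01)  # simulated backend call
        state["in_flight"] -= 1
        return item * 2

    results = await bounded_fan_out(range(n_items), worker, limit)
    return results, state["peak"]


if __name__ == "__main__":
    results, peak = asyncio.run(demo())
    print(f"{len(results)} results, peak concurrency {peak}")
```

The cap trades some latency for predictable load: callers wait longer when the limit is reached, but downstream services never see more than `limit` concurrent calls from this process.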

Honest Takeaway

Fan-out and fan-in are core patterns in distributed systems. They let you parallelize work, reduce latency, and scale complex applications.

But they also increase coordination overhead, failure risk, and infrastructure load. The real challenge is not understanding the pattern. It is knowing when to use it, and when to keep things simpler.
