Microservices: How to Scale Without Creating Cascading Failures

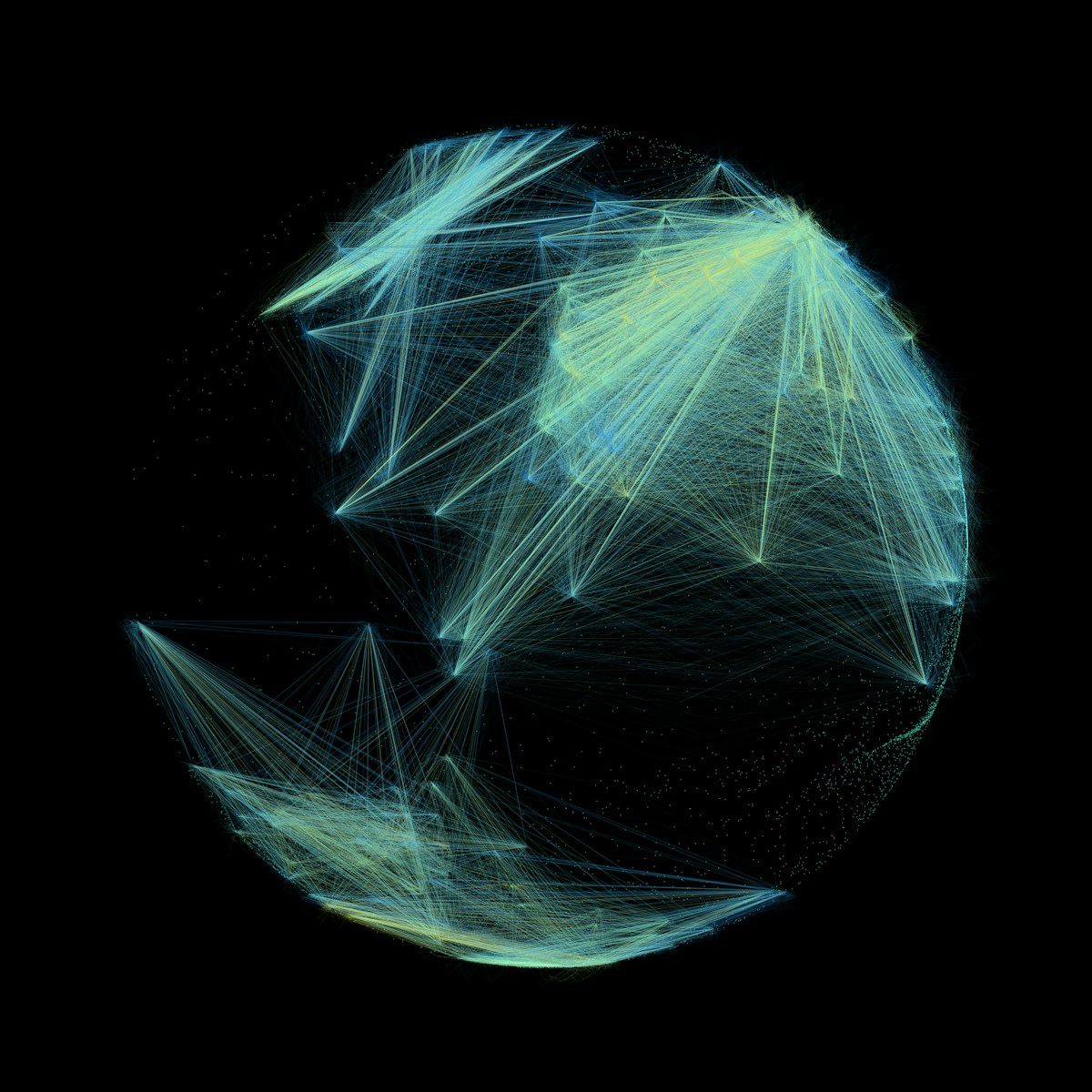

You usually do not notice a cascading failure at the moment it begins. You notice it when one sleepy dependency turns your healthy graph into a crime scene. Latency creeps

You usually do not run out of database storage because the business grew exactly as planned. You run out because three small things compound quietly. Indexes grow faster than tables.

Most teams do not start with a data capture pipeline problem. They start with a product problem that quietly turns into a data problem. A customer updates an address, your

You’ve seen the pattern. A team schedules a “big architecture review,” produces polished diagrams, maybe even refactors a few services, and then six months later, the system is harder to

You usually realize your container platform is “scaled” at the exact moment it is not. A launch hits, latency doubles, pods start churning, the queue backs up, and somebody says

Every platform migration starts with a clean diagram and ends in the parts of the system nobody modeled. The hard part is rarely moving bytes from one place to another.

If you’ve shipped anything with LLMs or real-time inference, you’ve already learned this the hard way: AI latency is not just about speed, it’s about variance. Your P50 looks great

The ugly part of indexing large tables is not the SQL. It is the blast radius. On a small table, adding an index feels harmless. On a table with hundreds

Most engineering teams do not miss deadlines because they are lazy, or bad at estimating, or mysteriously cursed. They miss them because they plan against fantasy capacity. The roadmap assumes