Understanding Circuit Breaker Patterns for Resilience

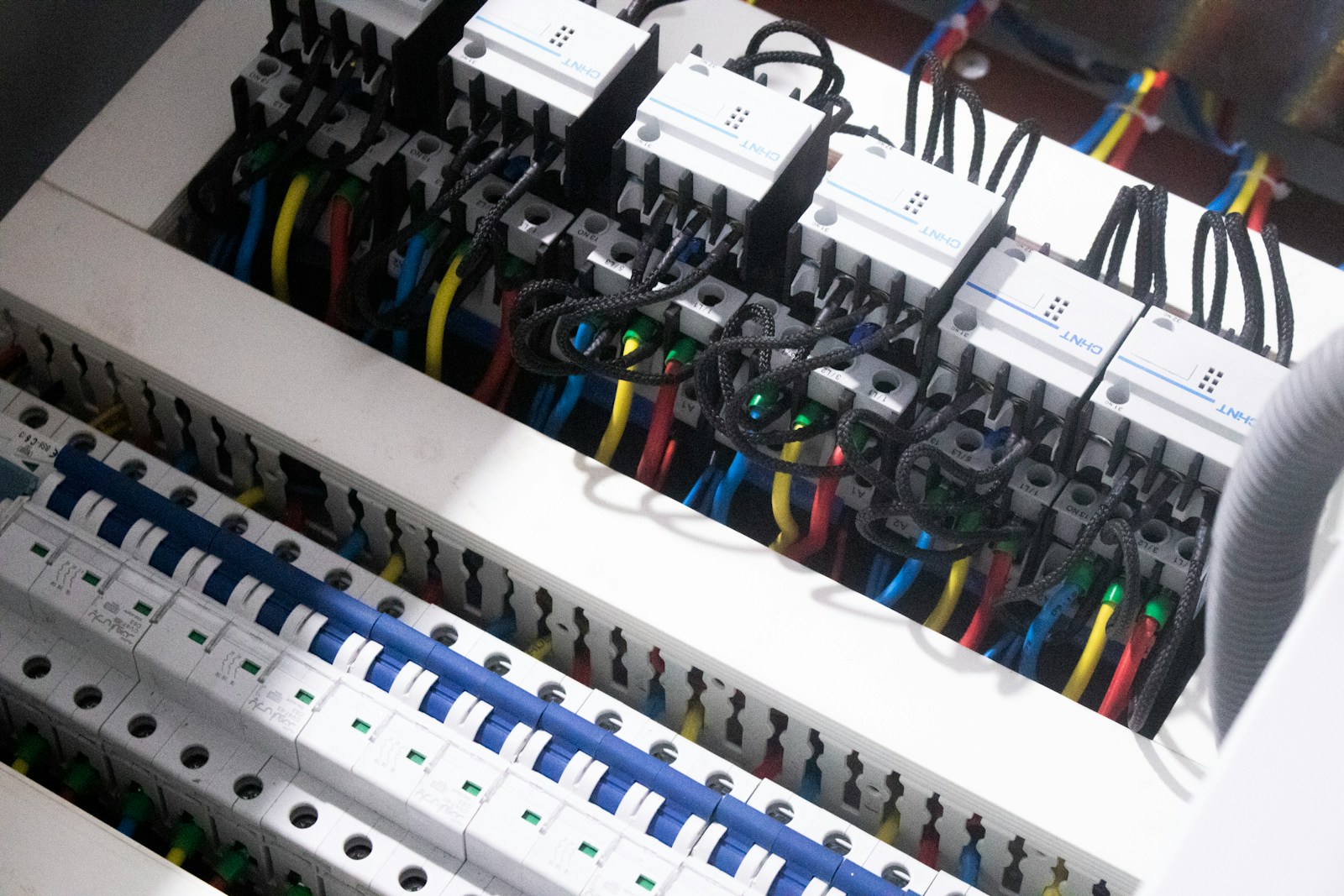

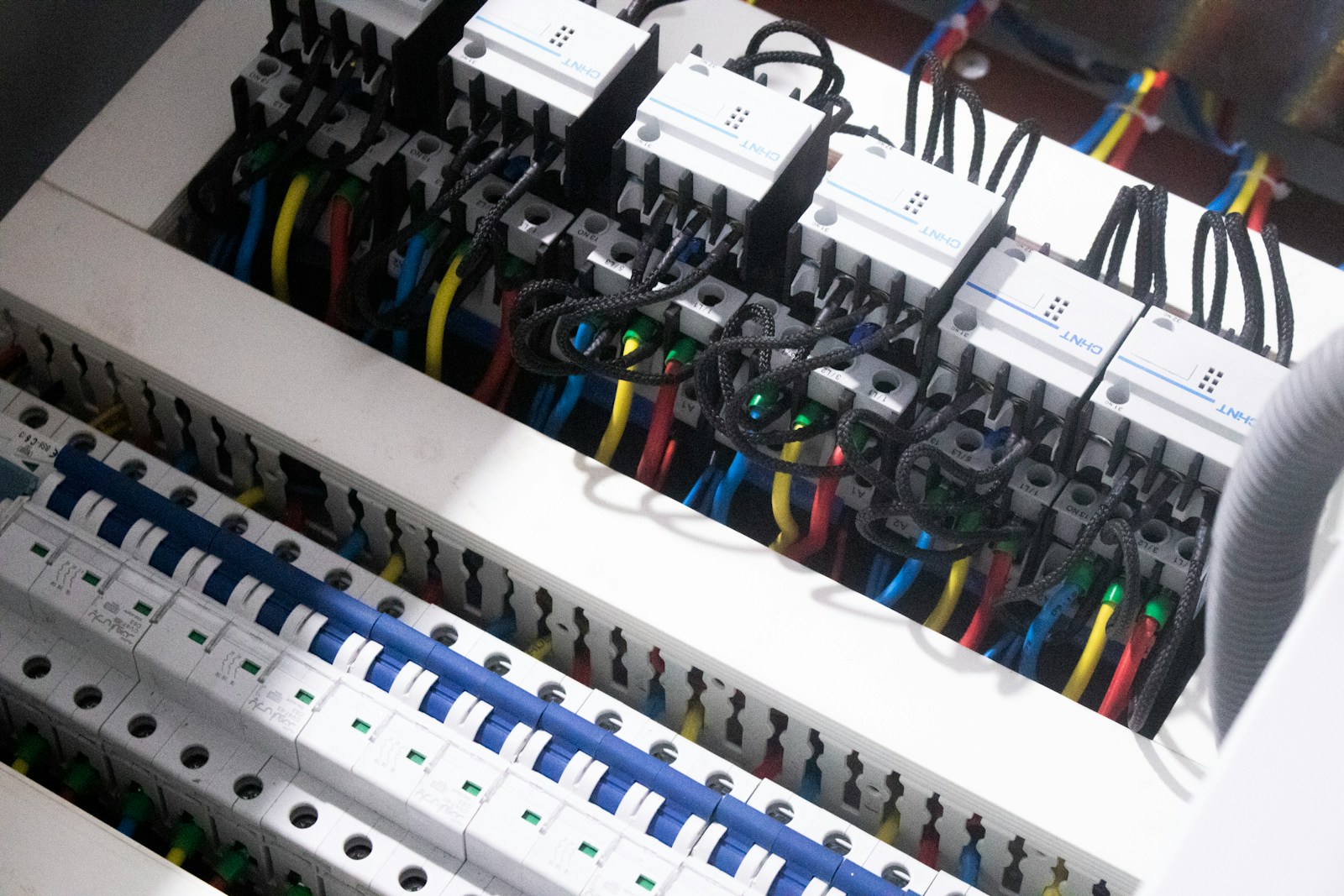

You don’t notice resilience when everything works. You notice it when things break, and your system doesn’t. Picture this: your API depends on a payment service. That service slows down.
