Network Optimization for Large-Scale Systems

You do not notice network performance when it works. You only notice it when your dashboards light up red at 2:13 a.m., latency spikes across regions, and someone in finance

High-performing AI platform teams rarely fail because of model quality alone. They fail in the seams between experimentation and production. You have seen it. A promising model in a notebook

At some point, every microservices platform hits the same wall: you are not debugging a service anymore, you are debugging the conversations between services. Latency spikes only for certain callers.

If you have ever been on a 2:17 a.m. bridge with fifteen engineers staring at Grafana, you know incident response is not just about alerts. It is about the architecture

You know the pattern. Dashboards look “fine,” CPU is hovering at 55 percent, error rates are flat, and yet Slack is filling up with screenshots of spinning loaders. Users say

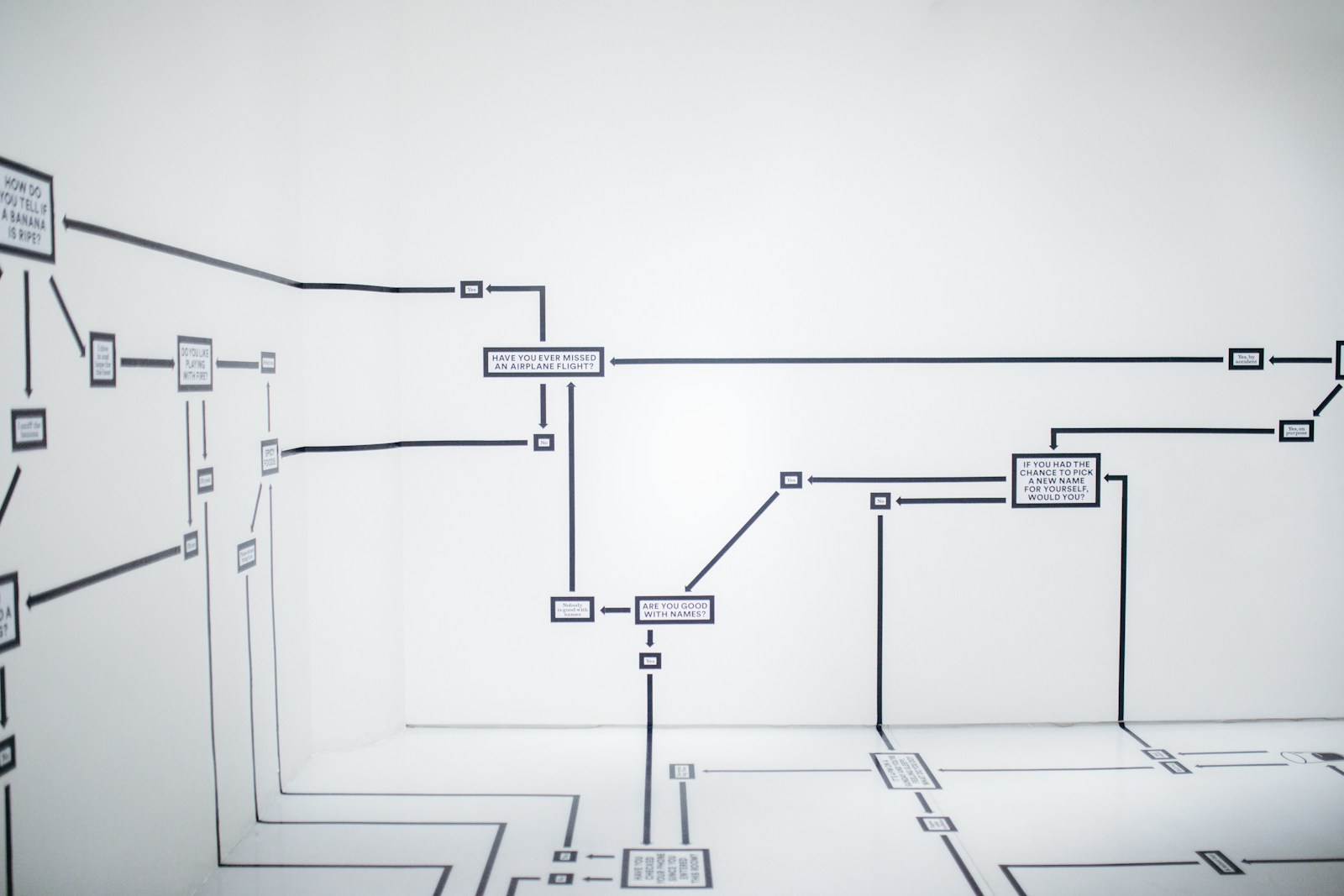

Performance incidents rarely fail because of missing dashboards. They fail because the investigation path is unclear, the signal is buried, and the system behaves in ways your mental model does

You can refactor code. You can swap frameworks. You can even migrate entire stacks over a long weekend if you are brave and caffeinated enough. But if you get your

You rarely redesign a database because you are bored. You do it because something hurts. Query latency crept from 20 milliseconds to 800. A new product line does not fit

You have probably been in this meeting. The model is underperforming. Someone suggests the obvious fix: get more data. It sounds responsible and empirical. And sometimes it is exactly right.