The Circuit Breaker Pattern in Modern Systems

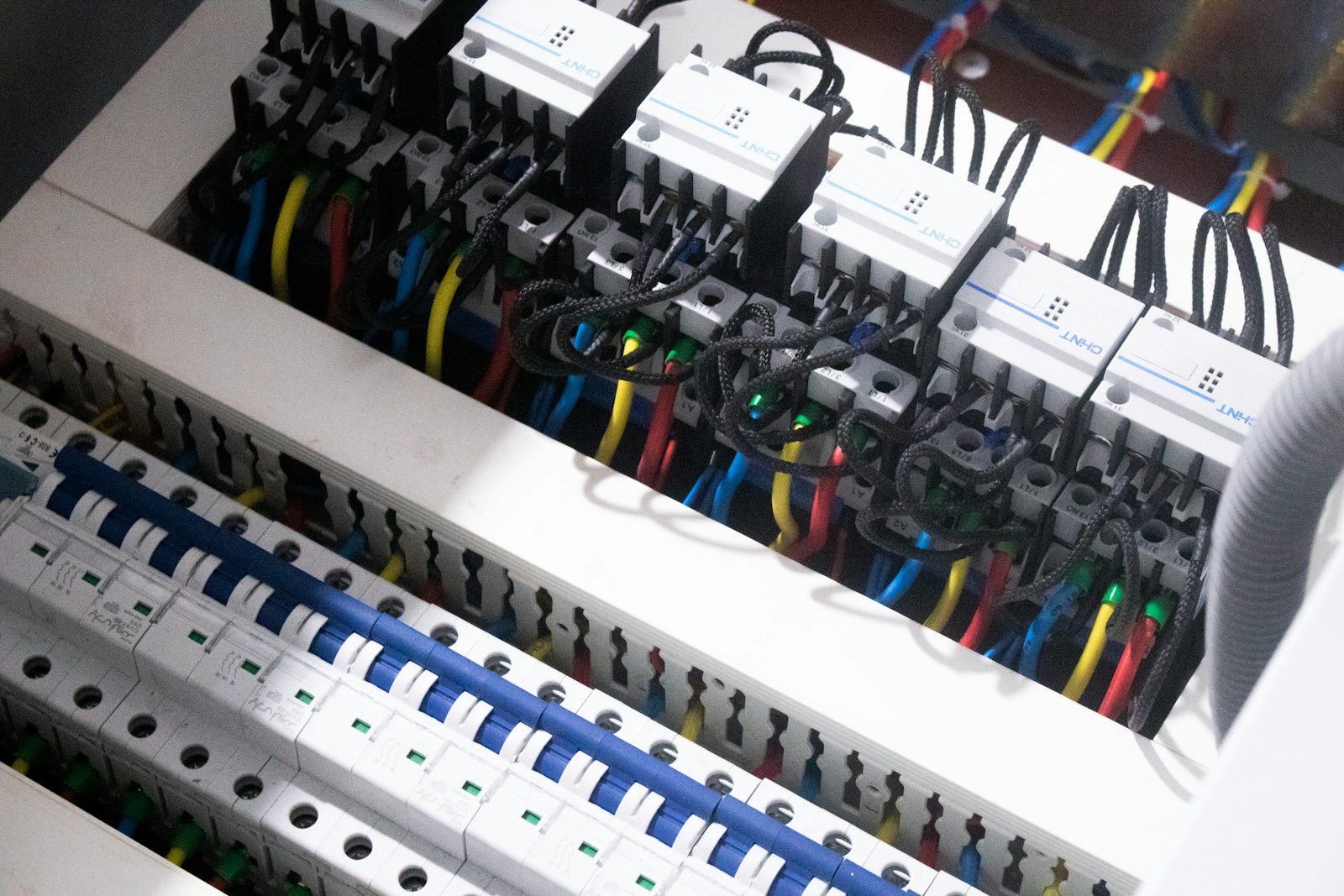

You ship a new feature. Traffic spikes. One downstream service slows down, then times out. Threads pile up. CPU climbs. Suddenly, your healthy service becomes part of the outage. If

You usually discover your API is not fault-tolerant at the worst possible moment. A downstream service slows down. Latency climbs. Clients start retrying. Queues fill. Autoscaling kicks in too late.

Your first WebSocket feature usually ships as a small miracle. One server, a handful of clients, and suddenly your product feels alive. Then production traffic shows up with its own

You can benchmark query latency all day and still pick the wrong database. I have seen teams choose a database because it “felt faster” in a local test, only to

You usually notice PostgreSQL query problems the same way you notice a slow website. Everything technically works, but it feels sticky. A page load crept from 80 milliseconds to 800.

You add JSON to Postgres because reality is messy. Product catalogs sprout new attributes, event payloads evolve, and every team has “just one more field” they cannot predict ahead of

You only notice database connection retries when they fail. A deploy rolls out, a primary database flips during failover, and suddenly every application instance tries to reconnect at once. Latency

You know you do not have independent deployability when a “small” change turns into a release train. Someone updates orders, then payments needs a tweak, then notifications fails in staging,

Most load tests fail in a very specific, very predictable way. They test your system the way your load testing tool behaves, not the way your users behave. Real users