A new “agent step” for AI workflows promises to turn scattered tools into a coordinated system, offering teams a way to manage models, memory, and human input in one place. Built on lessons from an internal SDK known as Breadboard, the approach aims to make complex pipelines easier to build and maintain while improving reliability and control.

The concept centers on a single, configurable step that handles model selection, tool calls, dynamic routing, and memory. It also keeps a human in the loop where needed. The developers describe it as a practical answer to a common problem: stitching multiple AI models and utilities together without losing context or oversight.

What the Agent Step Does

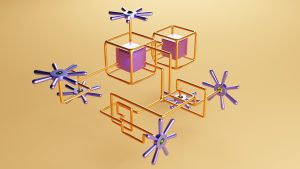

Building on lessons from an internal agent SDK called “Breadboard”, the agent step is more than another node in a workflow: it is an orchestration layer that can recruit models, invoke tools, manage memory, route dynamically, and interact with humans.

At its core, the step acts like a coordinator. It can pick the right model for a task, call structured tools, track context across turns, and ask a person to review or approve actions. Each of these functions addresses a pain point that has slowed multi-model projects.

- Recruit models: Selects and switches models based on task or policy.

- Invoke tools: Calls APIs and functions with structured inputs and safeguards.

- Manage memory: Preserves key facts and decisions across steps.

- Route dynamically: Directs requests to the best path at runtime.

- Interact with humans: Pauses for review on sensitive or high-impact actions.
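The five responsibilities listed above can be pictured as one small coordinator object. The Python sketch below is only an illustration under stated assumptions: `AgentStep`, `pick_model`, `needs_review`, and `approve` are hypothetical names, the models and tools are stub functions, and none of it reflects Breadboard's actual API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# All names here are hypothetical; this is not Breadboard's API.
Model = Callable[[str], str]
Tool = Callable[[str], str]


@dataclass
class AgentStep:
    models: Dict[str, Model]              # recruit models
    tools: Dict[str, Tool]                # invoke tools
    pick_model: Callable[[str], str]      # route dynamically: task -> model name
    needs_review: Callable[[str], bool]   # which tools require a human decision
    approve: Callable[[str, str], bool]   # human reviewer: (tool, payload) -> ok?
    memory: List[str] = field(default_factory=list)  # manage memory

    def run(self, task: str, tool_name: Optional[str] = None) -> str:
        model = self.models[self.pick_model(task)]
        # Prepend remembered notes so the model sees earlier decisions.
        prompt = "\n".join(self.memory + [task])
        result = model(prompt)
        if tool_name is not None:
            if self.needs_review(tool_name) and not self.approve(tool_name, result):
                result = f"{tool_name} blocked by reviewer"
            else:
                result = self.tools[tool_name](result)
        self.memory.append(f"{task} -> {result}")
        return result


step = AgentStep(
    models={"small": lambda prompt: "short answer",
            "large": lambda prompt: "detailed answer"},
    tools={"send_email": lambda body: f"sent: {body}"},
    pick_model=lambda task: "large" if len(task) > 40 else "small",
    needs_review=lambda tool: tool == "send_email",
    approve=lambda tool, payload: False,  # stand-in reviewer that always declines
)
print(step.run("Summarize ticket 12"))
print(step.run("Email the customer", "send_email"))
```

In this sketch the second call never reaches the email tool, because the stand-in reviewer declines; both outcomes are still written to memory so later turns can see them.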

Why It Matters for Developers

Teams often wire together chat models, vector stores, and custom tools using ad hoc glue code. That code can be brittle, hard to test, and difficult to scale. A unified step reduces that sprawl. It gives developers a single place to define policies, logging, and escalation rules.

Memory management is a standout feature. Many projects rely on long prompts or manual session storage. A built-in memory layer can store summaries, facts, and intermediate results. This helps prevent context loss, reduces token costs, and makes auditing easier.
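One way to picture such a memory layer is a small store that keeps durable facts, a rolling summary, and only the most recent turns. The sketch below is a hypothetical illustration: `StepMemory` and its methods are assumed names, and the "summary" is a simple truncation standing in for a model-written one.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class StepMemory:
    """Hypothetical memory layer: facts, a rolling summary, and recent turns."""
    max_recent: int = 3
    facts: Dict[str, str] = field(default_factory=dict)
    summary: str = ""
    recent: List[str] = field(default_factory=list)

    def remember_fact(self, key: str, value: str) -> None:
        self.facts[key] = value

    def add_turn(self, text: str) -> None:
        self.recent.append(text)
        while len(self.recent) > self.max_recent:
            oldest = self.recent.pop(0)
            # Stand-in for a model-written summary: keep the first 40 characters.
            self.summary = (self.summary + " " + oldest[:40]).strip()

    def context(self) -> str:
        """Build the compact context that would be sent instead of the full history."""
        parts = []
        if self.summary:
            parts.append("Summary: " + self.summary)
        parts.extend(f"Fact: {k} = {v}" for k, v in sorted(self.facts.items()))
        parts.extend(self.recent)
        return "\n".join(parts)


memory = StepMemory(max_recent=2)
memory.remember_fact("order_id", "A-17")
for turn in ["Customer asked about a refund", "Agent checked the order", "Refund approved"]:
    memory.add_turn(turn)
print(memory.context())
```

Because older turns are folded into the summary, the context stays bounded as a session grows, and the stored facts and summary give auditors something concrete to inspect.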

Dynamic routing brings a similar benefit. Instead of hardcoding which model handles which job, the step can choose at runtime. Routing can depend on input type, confidence scores, cost ceilings, or service health. That flexibility helps teams adapt without major rewrites.
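A runtime routing decision of this kind can be sketched as a filter followed by a choice. The example below assumes a hypothetical `ModelRoute` record and `choose_route` function; the model names, costs, and the 0.6 confidence threshold are made up for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class ModelRoute:
    name: str
    input_types: FrozenSet[str]
    cost_per_call: float   # hypothetical cost units
    healthy: bool = True   # would come from a health check in practice


def choose_route(routes: List[ModelRoute], input_type: str, cost_ceiling: float,
                 confidence: float, escalate_below: float = 0.6) -> Optional[ModelRoute]:
    """Pick a route at runtime from input type, cost ceiling, health, and confidence."""
    candidates = [
        r for r in routes
        if r.healthy and input_type in r.input_types and r.cost_per_call <= cost_ceiling
    ]
    if not candidates:
        return None
    candidates.sort(key=lambda r: r.cost_per_call)
    # Low confidence escalates to the most capable (here: priciest) allowed route.
    return candidates[-1] if confidence < escalate_below else candidates[0]


routes = [
    ModelRoute("small-text", frozenset({"text"}), 0.01),
    ModelRoute("large-multi", frozenset({"text", "image"}), 0.10),
    ModelRoute("vision", frozenset({"image"}), 0.05, healthy=False),
]
choice = choose_route(routes, "text", cost_ceiling=0.20, confidence=0.9)
print(choice.name if choice else "no route available")
```

Keeping the routes as data rather than branching logic is the design choice that matters here: adding or retiring a model becomes a change to the list, not a rewrite of the step.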

Balancing Speed With Oversight

Human-in-the-loop controls add guardrails for tasks that need judgment or compliance checks. The agent can request approval before sending emails, modifying data, or making purchases. This strikes a balance between automation and accountability.
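An approval gate like this can be sketched in a few lines. The action names mirror the examples above; `run_action`, `cautious_reviewer`, and the purchase limit of 50 are hypothetical stand-ins for a real review interface and policy.

```python
from typing import Any, Callable, Dict

# Hypothetical list of actions that must pause for a person.
SENSITIVE_ACTIONS = {"send_email", "modify_data", "make_purchase"}

Approver = Callable[[str, Dict[str, Any]], bool]


def run_action(name: str, args: Dict[str, Any],
               execute: Callable[[Dict[str, Any]], Any],
               approver: Approver) -> Dict[str, Any]:
    """Run an action, asking the approver first if the action is sensitive."""
    if name in SENSITIVE_ACTIONS and not approver(name, args):
        return {"action": name, "status": "rejected"}
    return {"action": name, "status": "done", "result": execute(args)}


def cautious_reviewer(name: str, args: Dict[str, Any]) -> bool:
    # Stand-in for a real review UI: approve purchases up to 50 only.
    return name != "make_purchase" or args.get("amount", 0) <= 50


print(run_action("make_purchase", {"amount": 20}, lambda a: "ordered", cautious_reviewer))
print(run_action("make_purchase", {"amount": 500}, lambda a: "ordered", cautious_reviewer))
```

Non-sensitive actions skip the reviewer entirely, which is what keeps routine work fast while high-impact steps wait for a decision.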

Tool invocation also gets safer. Structured schemas can validate inputs and block risky actions. Clear logs of who approved what, and when, support audits and postmortems. For regulated sectors, those features are often mandatory.
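To make the idea concrete, the sketch below validates a tool call against a simple schema, blocks calls that break a policy limit, and records who approved each attempt and when. Everything in it is an assumption for illustration: the refund tool, `REFUND_SCHEMA`, the `MAX_REFUND` limit, and the log format are hypothetical.

```python
from datetime import datetime, timezone
from typing import Any, Dict, List

# Hypothetical schema and policy limit for a refund tool.
REFUND_SCHEMA = {"order_id": str, "amount": float}
MAX_REFUND = 100.0

audit_log: List[Dict[str, Any]] = []


def validate(args: Dict[str, Any], schema: Dict[str, type]) -> List[str]:
    """Return a list of schema violations; an empty list means the input is valid."""
    errors = [f"missing field: {k}" for k in schema if k not in args]
    errors += [f"unexpected field: {k}" for k in args if k not in schema]
    errors += [f"{k} must be {t.__name__}" for k, t in schema.items()
               if k in args and not isinstance(args[k], t)]
    return errors


def invoke_refund(args: Dict[str, Any], approved_by: str) -> Dict[str, Any]:
    errors = validate(args, REFUND_SCHEMA)
    if not errors and args["amount"] > MAX_REFUND:
        errors.append("amount exceeds policy limit")
    entry = {
        "tool": "refund",
        "args": args,
        "approved_by": approved_by,                     # who approved
        "at": datetime.now(timezone.utc).isoformat(),   # when
        "outcome": "blocked" if errors else "executed",
        "errors": errors,
    }
    audit_log.append(entry)  # every attempt is logged, including blocked ones
    return entry


print(invoke_refund({"order_id": "A-17", "amount": 25.0}, approved_by="dana")["outcome"])
print(invoke_refund({"order_id": "A-17", "amount": 500.0}, approved_by="dana")["outcome"])
```

Logging blocked attempts alongside executed ones is deliberate: a postmortem usually needs to know what the agent tried to do, not only what it was allowed to do.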

Industry Context and Comparisons

The move reflects a wider trend: shifting from single-model chatbots to multi-step agents with tool use and memory. Frameworks across the industry are adding routing, tool calling, and state management. The difference here is the emphasis on treating the agent as a first-class step inside a workflow, rather than a standalone app.

In practice, that means teams can slot the agent into existing pipelines that handle data prep, retrieval, and delivery. Monitoring, retries, and incident response can live in the same workflow system. This reduces integration risk and speeds up deployment.
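As a rough illustration of the agent living inside a larger pipeline, the sketch below treats every stage, including the agent, as a plain function over shared state, with retries supplied by the workflow rather than by the agent. The stage names, `with_retries`, and the simulated timeout are all hypothetical.

```python
from typing import Callable, Dict, List

State = Dict[str, object]
Step = Callable[[State], State]


def with_retries(step: Step, attempts: int = 3) -> Step:
    """Wrap a step so the workflow, not the step itself, handles transient failures."""
    def wrapped(state: State) -> State:
        last_error = None
        for _ in range(attempts):
            try:
                return step(state)
            except RuntimeError as err:
                last_error = err
        raise RuntimeError(f"step failed after {attempts} attempts") from last_error
    return wrapped


def run_pipeline(steps: List[Step], state: State) -> State:
    for step in steps:
        state = step(state)
    return state


calls = {"agent": 0}

def prep(state: State) -> State:
    return {**state, "query": str(state["query"]).strip()}

def retrieve(state: State) -> State:
    return {**state, "docs": ["doc about " + str(state["query"])]}

def agent_step(state: State) -> State:
    calls["agent"] += 1
    if calls["agent"] == 1:
        raise RuntimeError("model timeout")  # simulated transient failure
    return {**state, "answer": f"answered using {len(state['docs'])} doc(s)"}

def deliver(state: State) -> State:
    return {**state, "delivered": True}


result = run_pipeline([prep, retrieve, with_retries(agent_step), deliver],
                      {"query": "  refunds "})
print(result["answer"], result["delivered"])
```

Because the agent has the same shape as every other stage, the same retry wrapper (and, by extension, the same monitoring hooks) could be applied to retrieval or delivery without special cases.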

Potential Impact and Early Use Cases

Early targets include support automation, content review, financial operations, and internal IT tasks. These areas involve structured tools, clear approval chains, and sensitive data. A single step that standardizes memory, routing, and review can shorten build times and cut maintenance.

Cost control is another draw. Routing can push simple tasks to cheaper models and reserve advanced models for complex cases. Memory summaries can trim prompt length. Together, these tactics can reduce monthly bills without harming quality.
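A back-of-the-envelope calculation shows how the two tactics combine. Every number below is a made-up assumption chosen for easy arithmetic (request volume, token counts, prices, and the 80/20 split), not a measurement from any real deployment.

```python
def monthly_cost(requests: int, tokens_per_request: int, price_per_1k_tokens: float) -> float:
    """Cost = requests * (tokens per request / 1000) * price per 1,000 tokens."""
    return requests * tokens_per_request / 1000 * price_per_1k_tokens


# Hypothetical baseline: every request goes to an advanced model with long prompts.
baseline = monthly_cost(100_000, 2_000, 0.01)      # 100,000 * 2 * 0.01, about 2000

# Hypothetical routed setup: memory summaries trim prompts to 1,200 tokens,
# 80% of requests go to a cheaper model, 20% stay on the advanced one.
routed = (monthly_cost(80_000, 1_200, 0.001)       # 80,000 * 1.2 * 0.001, about 96
          + monthly_cost(20_000, 1_200, 0.01))     # 20,000 * 1.2 * 0.01, about 240

savings = 1 - routed / baseline
print(f"baseline={baseline:.2f} routed={routed:.2f} savings={savings:.1%}")
```

Under these assumptions most of the saving comes from routing and the rest from shorter prompts; real figures depend on actual prices and on how many requests are simple enough to route down.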

What to Watch Next

Key questions remain. How well does the routing perform under load? Can memory policies prevent drift or stale facts? How easy is it to plug in new tools and models? Answers will depend on benchmarks, governance features, and real-world deployments.

If the agent step proves reliable, it could become a standard building block for enterprise AI. It offers a place to set rules, log actions, and keep humans in control. For teams tired of brittle glue code, that is a practical path to scale.

The next phase will likely focus on guardrails, testing, and metrics. Clear dashboards for routing decisions, memory snapshots, and human approvals will be crucial. With those pieces in place, organizations can move faster while maintaining trust and traceability.
