You don’t notice resilience when everything works. You notice it when things break, and your system doesn’t.

Picture this: your API depends on a payment service. That service slows down. Your threads pile up. Timeouts cascade. Suddenly, your entire system is unresponsive, not because your code is wrong, but because it trusted something it couldn’t control.

That’s exactly the class of failure the circuit breaker pattern is designed to prevent.

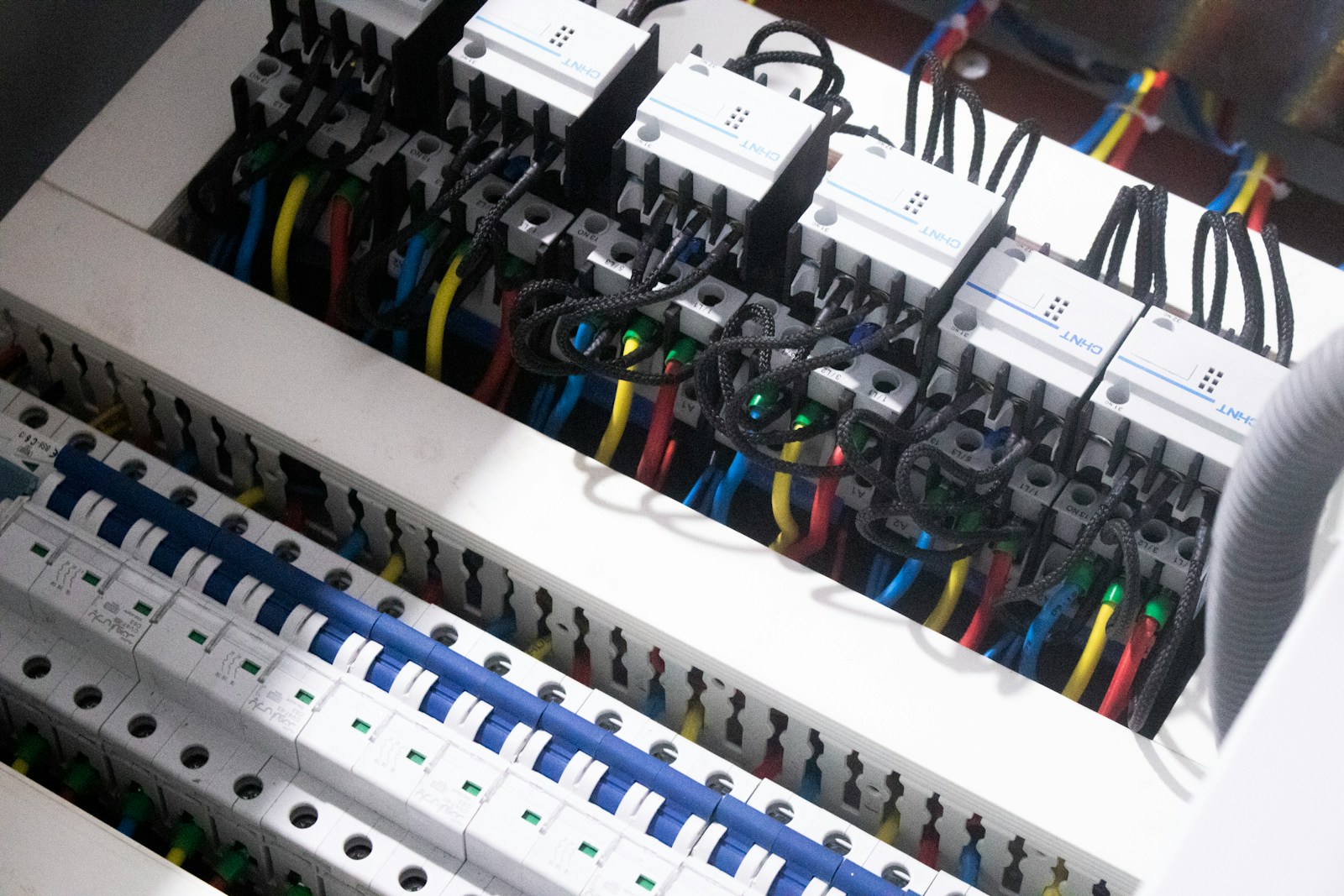

At its core, a circuit breaker is a defensive mechanism that stops your system from repeatedly calling a failing dependency. Instead of retrying endlessly, it “opens the circuit” and fails fast. It’s the software equivalent of an electrical breaker that cuts power when things go wrong.

What experts are actually saying about circuit breakers

We dug into how practitioners at companies running high-scale systems think about this pattern, and a few consistent themes emerged.

Martin Fowler, Software Architect and Author, frames circuit breakers as a feedback mechanism. When failures cross a threshold, the system should stop trying and give the dependency time to recover. The key insight is that resilience is not about retrying; it’s about knowing when to stop retrying.

Netflix’s OSS team popularized the pattern in production with Hystrix, its Java fault-tolerance library. Their engineers observed that most outages weren’t caused by a single failure, but by resource exhaustion from waiting on slow services. Circuit breakers reduced thread pool starvation and stabilized systems under load.

Michael Nygard, author of “Release It!”, emphasizes something engineers often overlook: failures are not rare events. They are normal conditions. Circuit breakers are less about handling edge cases and more about designing for the steady-state reality of partial failure.

Put those together and you get a clear takeaway: circuit breakers are not an optimization. They are a core control system for failure.

What a circuit breaker actually does under the hood

At a high level, a circuit breaker wraps calls to an external service and tracks outcomes. It transitions between three states:

- Closed: everything is normal, requests pass through

- Open: failures exceeded the threshold, requests fail immediately

- Half-open: test phase, a few requests are allowed through

Here’s the key mechanism:

- Count failures over a rolling window

- Compare against a threshold (say 50% failures over 20 requests)

- If exceeded → open the circuit

- After a cooldown → allow limited test requests

- If successful → close the circuit again; if a test request fails → reopen it and restart the cooldown

This is fundamentally a control loop, not just an error handler.

Why this matters: without it, your system keeps hammering a failing dependency, increasing latency, tying up threads, and amplifying failure.
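The state machine and rolling-window logic above fit in a short class. This is a minimal single-threaded sketch, not any library’s API: `CircuitBreaker`, `CircuitOpenError`, and the parameter names are hypothetical, the window counts the last N calls rather than a time span, and it does not cap how many trial requests run at once in the half-open state. Defaults mirror the example threshold above (50% failures over 20 requests).

```python
import time
from collections import deque


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    """Count-based breaker: closed -> open -> half_open -> closed (or back to open)."""

    def __init__(self, failure_threshold=0.5, window_size=20, min_calls=10,
                 cooldown=30.0, successes_to_close=3, clock=time.monotonic):
        self.failure_threshold = failure_threshold  # 0.5 = open at 50% failures
        self.window = deque(maxlen=window_size)     # rolling window: True = failure
        self.min_calls = min_calls                  # don't judge a nearly empty window
        self.cooldown = cooldown                    # seconds to stay open
        self.successes_to_close = successes_to_close
        self.clock = clock
        self.state = "closed"
        self.opened_at = 0.0
        self.trial_successes = 0

    def call(self, fn, *args, **kwargs):
        if self.state == "open":
            if self.clock() - self.opened_at < self.cooldown:
                raise CircuitOpenError("circuit open; failing fast")
            # Cooldown elapsed: let trial requests through.
            self.state = "half_open"
            self.trial_successes = 0
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._record_failure()
            raise
        self._record_success()
        return result

    def _record_success(self):
        if self.state == "half_open":
            self.trial_successes += 1
            if self.trial_successes >= self.successes_to_close:
                self.state = "closed"
                self.window.clear()  # start the closed state with a fresh window
        else:
            self.window.append(False)

    def _record_failure(self):
        if self.state == "half_open":
            self._trip()  # a failed trial call reopens the circuit immediately
            return
        self.window.append(True)
        failures = sum(self.window)
        if (len(self.window) >= self.min_calls
                and failures / len(self.window) >= self.failure_threshold):
            self._trip()

    def _trip(self):
        self.state = "open"
        self.opened_at = self.clock()
```

A call site would look like `breaker.call(user_service_call, user_id)`. Passing a fake `clock` makes the cooldown testable without sleeping.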

Why circuit breakers matter more than retries

Most systems start with retries. It feels intuitive: “just try again.”

But retries alone can make things worse.

Let’s say:

- Your service gets 1,000 requests per second

- Dependency failure rate jumps to 60%

- You retry each failed request 2 times

Now you’re sending:

- 1,000 original requests

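- ~1,200 retry requests (600 failed requests retried twice each, assuming the retries keep failing too)

That’s 2.2x the normal load on a system that is already failing.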
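The arithmetic can be checked in a few lines. The ~1,200 figure is the worst case, where every retry also fails; the sketch also computes the case where each retry independently fails at the same 60% rate. `retry_load` is a hypothetical helper, and integer percentages keep the math exact.

```python
def retry_load(requests_per_sec, failure_pct, retries):
    """Return (worst_case, expected) total requests/sec reaching the dependency."""
    failed = requests_per_sec * failure_pct // 100

    # Worst case: every retry fails too, so each failed request is retried `retries` times.
    worst_case = requests_per_sec + failed * retries

    # Expected case: each retry fails independently with the same probability.
    expected = requests_per_sec
    still_failing = failed
    for _ in range(retries):
        expected += still_failing  # one retry per still-failing request
        still_failing = still_failing * failure_pct // 100
    return worst_case, expected


print(retry_load(1000, 60, 2))  # (2200, 1960)
```

Either way, the dependency sees roughly double its normal traffic exactly when it is least able to handle it.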

Circuit breakers flip this behavior. Instead of amplifying pressure, they shed load.


Where circuit breakers get tricky (and often misused)

The pattern sounds simple. The implementation is not.

1. Choosing the right thresholds

Too sensitive:

- Circuit opens too often

- You drop healthy traffic

Too lenient:

- You don’t prevent cascading failures

There’s no universal number. Teams often start with:

- Failure rate threshold: 50–70%

- Minimum request volume: 10–20

- Open timeout: 30–60 seconds

Then tune based on real traffic.
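Those starting values can live in a small config object. This sketch assumes a count-based window; `BreakerConfig` and `should_open` are hypothetical names. The minimum-volume check is what stops a breaker from opening on, say, 3 failures out of 3 calls during a quiet period.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BreakerConfig:
    failure_rate_threshold: float = 0.5  # open at >= 50% failures (50-70% is a common start)
    minimum_calls: int = 20              # don't judge until this many calls are observed
    open_timeout_s: float = 30.0         # time to stay open before half-open (30-60 s)


def should_open(failures: int, calls: int, cfg: BreakerConfig) -> bool:
    """Decide whether the observed window should trip the breaker."""
    if calls < cfg.minimum_calls:
        return False  # too little traffic to tell noise from an outage
    return failures / calls >= cfg.failure_rate_threshold


cfg = BreakerConfig()
print(should_open(3, 3, cfg))    # False: 100% failures, but only 3 calls
print(should_open(12, 20, cfg))  # True: 60% over 20 calls
print(should_open(8, 20, cfg))   # False: 40% is below the threshold
```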

2. Handling partial failures

Not all failures are equal:

- Timeout vs 500 error vs rate limit

You might want:

- Timeouts → count as failures

- 429s → trigger backoff logic instead

- 500s → count selectively

If you treat everything the same, you lose signal.
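One way to keep that signal is to classify each result before it reaches the breaker. The policy below is a hypothetical example, not a recommendation: timeouts and a chosen set of 5xx codes count as failures, 429 triggers backoff, and other errors are ignored because they say more about the request than about the dependency’s health.

```python
from enum import Enum


class Outcome(Enum):
    SUCCESS = "success"
    FAILURE = "failure"  # counts toward tripping the breaker
    BACKOFF = "backoff"  # slow down, but don't treat the dependency as broken
    IGNORE = "ignore"    # says nothing about dependency health


# Hypothetical policy: these 5xx codes usually mean the dependency itself is unhealthy.
COUNTED_5XX = {500, 502, 503, 504}


def classify(status=None, timed_out=False):
    """Map one call result to how the breaker should treat it."""
    if timed_out:
        return Outcome.FAILURE
    if status == 429:
        return Outcome.BACKOFF
    if status in COUNTED_5XX:
        return Outcome.FAILURE
    if status is not None and status >= 400:
        # e.g. 404 or 501: a problem with this request or code path, not an outage.
        return Outcome.IGNORE
    return Outcome.SUCCESS
```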

3. The “silent failure” problem

When a circuit opens, requests fail fast. That’s good.

But if you don’t:

- log it

- alert on it

- expose metrics

You’ve just created a failure that’s harder to detect.
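Most breaker libraries let you hook into state changes, but the mechanism differs per library, so the function below is a hypothetical hook you would wire into whatever yours exposes. It logs every transition, logs openings at WARNING so log-based alerting can pick them up, and counts transitions in a `Counter` standing in for a real metrics client.

```python
import logging
from collections import Counter

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("circuit")

transitions = Counter()  # stand-in for a real metrics client


def on_state_change(dependency, old_state, new_state):
    """Hypothetical hook: call this whenever a breaker changes state."""
    transitions[(dependency, new_state)] += 1
    if new_state == "open":
        # WARNING level so existing log-based alerting can page on it.
        log.warning("circuit OPEN for %s (was %s); calls now fail fast",
                    dependency, old_state)
    else:
        log.info("circuit %s for %s (was %s)", new_state, dependency, old_state)


on_state_change("payments", "closed", "open")
on_state_change("payments", "open", "half_open")
print(transitions[("payments", "open")])  # 1
```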


How to implement circuit breakers in practice

Let’s move from theory to something you can actually deploy.

Step 1: Wrap external dependencies, not internal logic

Focus on:

- HTTP calls

- database queries

- third-party APIs

Do not wrap pure functions or in-memory operations. That adds noise.

Most teams use libraries:

- Java: Resilience4j (modern), Hystrix (legacy)

- Node.js: opossum

- Python: pybreaker
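A decorator is a convenient way to put the breaker exactly at the I/O boundary. The sketch below is hypothetical (`circuit_breaker`, `fetch_rates`, and `convert` are illustrative names, not pybreaker’s or opossum’s API) and not thread-safe: it opens after `fail_max` consecutive failures, fails fast for `reset_timeout` seconds, then lets a trial call through. Only the function doing network I/O is wrapped; the pure calculation is left alone.

```python
import functools
import time
import urllib.request


class CircuitOpenError(Exception):
    pass


def circuit_breaker(fail_max=5, reset_timeout=30.0):
    """Open after `fail_max` consecutive failures; retry one call after `reset_timeout`."""
    def decorate(fn):
        state = {"failures": 0, "opened_at": None}

        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            if state["opened_at"] is not None:
                if time.monotonic() - state["opened_at"] < reset_timeout:
                    raise CircuitOpenError(f"{fn.__name__}: circuit open")
                state["opened_at"] = None  # cooldown over: allow a trial call
            try:
                result = fn(*args, **kwargs)
            except Exception:
                state["failures"] += 1
                if state["failures"] >= fail_max:
                    state["opened_at"] = time.monotonic()
                raise
            state["failures"] = 0  # any success closes the circuit
            return result
        return wrapper
    return decorate


@circuit_breaker(fail_max=5, reset_timeout=30.0)
def fetch_rates(url):
    # External HTTP call: this is the call worth guarding.
    with urllib.request.urlopen(url, timeout=2) as resp:
        return resp.read()


def convert(amount, rate):
    # Pure, in-memory logic: wrapping it would only add noise.
    return amount * rate
```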

Step 2: Define failure signals clearly

Decide what counts as failure:

- Exceptions

- Timeouts

- specific status codes

Pro tip: start strict, then relax based on data.
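“Start strict, then relax” can be expressed as a predicate that counts every exception by default and only learns to ignore specific types once data shows they are harmless. `make_is_failure` and `NotFoundError` are hypothetical names for illustration.

```python
class NotFoundError(Exception):
    """Hypothetical: the dependency answered fine, but the record doesn't exist."""


def make_is_failure(ignored=()):
    """Build a predicate deciding whether an exception counts against the breaker."""
    ignored = tuple(ignored)

    def is_failure(exc):
        # Everything counts (timeouts included) unless explicitly ignored.
        return not isinstance(exc, ignored)
    return is_failure


strict = make_is_failure()
# Relaxed later, after metrics showed "not found" is normal traffic, not an outage.
relaxed = make_is_failure(ignored=[NotFoundError])
```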

Step 3: Configure fallback behavior

When the circuit is open, what happens?

Common patterns:

- return cached data

- return default response

- degrade features (read-only mode)

Example:

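```python
class CircuitOpenError(Exception):
    """Placeholder for your breaker library's "circuit is open" exception."""


def get_user_profile(user_id):
    # user_service_call and cached_profile are assumed helpers: the first calls
    # the remote user service through the breaker, the second reads a local cache.
    try:
        return user_service_call(user_id)
    except CircuitOpenError:
        # Circuit is open: skip the remote call and serve the last known profile.
        return cached_profile(user_id)
```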

Fallbacks are where user experience is saved or lost.

Step 4: Add observability from day one

Track:

- circuit state (closed/open/half-open)

- failure rate

- latency

Without this, you’re flying blind.

One short list that actually matters:

- error rate per dependency

- circuit open frequency

- fallback usage rate

If fallback usage spikes, your system is degraded even if it “works.”
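Those three signals are cheap to compute. The class below is a hypothetical in-process stand-in for a real metrics backend, and the 5% threshold in `is_degraded` is an illustrative assumption, not a recommended value.

```python
from collections import defaultdict


class DependencyMetrics:
    """Per-dependency counters for error rate, circuit opens, and fallback usage."""

    def __init__(self):
        self.calls = defaultdict(int)
        self.errors = defaultdict(int)
        self.opens = defaultdict(int)
        self.fallbacks = defaultdict(int)

    def record_call(self, dep, ok, used_fallback=False):
        self.calls[dep] += 1
        if not ok:
            self.errors[dep] += 1
        if used_fallback:
            self.fallbacks[dep] += 1

    def record_open(self, dep):
        self.opens[dep] += 1

    def error_rate(self, dep):
        return self.errors[dep] / self.calls[dep] if self.calls[dep] else 0.0

    def fallback_rate(self, dep):
        return self.fallbacks[dep] / self.calls[dep] if self.calls[dep] else 0.0

    def is_degraded(self, dep, max_fallback_rate=0.05):
        # The system still "works", but too many responses come from fallbacks.
        return self.fallback_rate(dep) > max_fallback_rate
```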

Step 5: Combine with other resilience patterns

Circuit breakers don’t live alone.

They work best with:

- timeouts

- retries with backoff

- bulkheads (resource isolation)

Think of it as a layered defense system, not a single fix.
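Here is a minimal sketch of the companion layers for one hypothetical `payments` dependency: a bulkhead (its own small thread pool plus a slot counter), a per-call timeout, and retries with capped exponential backoff and jitter. All names are illustrative. In a full setup, a circuit breaker would wrap the `retry_with_backoff(...)` call from the outside, so an open circuit also stops the retries.

```python
import random
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as CallTimeout

# Bulkhead: if payments hangs, it can exhaust only these 4 threads, not the whole service.
PAYMENTS_POOL = ThreadPoolExecutor(max_workers=4, thread_name_prefix="payments")
PAYMENTS_SLOTS = threading.BoundedSemaphore(4)


class BulkheadFullError(Exception):
    """Raised when every slot reserved for this dependency is busy."""


def bulkhead_call(fn, timeout_s=1.0):
    """Run fn in the dependency's pool; reject if full, stop waiting after timeout_s."""
    if not PAYMENTS_SLOTS.acquire(blocking=False):
        raise BulkheadFullError("payments bulkhead is full")
    try:
        future = PAYMENTS_POOL.submit(fn)
    except Exception:
        PAYMENTS_SLOTS.release()
        raise
    # Free the slot only when the work actually finishes, even if we stop waiting.
    future.add_done_callback(lambda _: PAYMENTS_SLOTS.release())
    return future.result(timeout=timeout_s)  # raises CallTimeout on timeout


def retry_with_backoff(fn, attempts=3, base_delay=0.1, max_delay=2.0):
    """Retry transient errors with capped exponential backoff and full jitter."""
    for attempt in range(attempts):
        try:
            return fn()
        except (CallTimeout, ConnectionError):
            if attempt == attempts - 1:
                raise
            delay = min(max_delay, base_delay * 2 ** attempt)
            time.sleep(random.uniform(0, delay))
```

Note that `BulkheadFullError` is deliberately not retried: a full bulkhead means the dependency is already overloaded.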

A real-world mental model that sticks

Think of your system like a busy restaurant kitchen.

- Orders = requests

- External service = ingredient supplier

If the supplier is late:

- Without a circuit breaker → chefs keep waiting, kitchen stalls

- With a circuit breaker → stop taking orders needing that ingredient, serve alternatives

The goal is not perfection. It’s controlled degradation.

FAQ: What engineers usually get wrong

When should I NOT use a circuit breaker?

If the dependency is:

- extremely reliable

- low latency

- non-critical

In those cases, a breaker may only add unnecessary complexity.

Do circuit breakers replace retries?

No. They complement retries.

Retries handle transient failures.

Circuit breakers handle persistent failures.

How is this different from rate limiting?

Rate limiting protects your system.

Circuit breakers protect you from other systems.
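To make the contrast concrete, here is a minimal token-bucket limiter, one common rate-limiting technique (the class and parameter names are illustrative). Its decision depends only on how fast callers are arriving, never on whether any downstream dependency is healthy; a circuit breaker decides the other way around, from the dependency’s recent outcomes.

```python
import time


class TokenBucket:
    """Inbound rate limiter: caps how fast callers can hit our own service."""

    def __init__(self, rate_per_s, capacity, clock=time.monotonic):
        self.rate = rate_per_s    # tokens added per second
        self.capacity = capacity  # maximum burst size
        self.tokens = capacity
        self.clock = clock
        self.last = clock()

    def allow(self):
        now = self.clock()
        # Refill based on elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```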

Honest takeaway

Circuit breakers sound like a small pattern, but they fundamentally change how your system behaves under stress.

They force you to accept a hard truth: failure is normal, and resilience is about managing it, not avoiding it.

If you implement them well, you won’t notice them during normal operation. But the first time a dependency melts down and your system stays responsive, you’ll realize they’re one of the highest-leverage patterns in distributed systems.

The real work is not adding a library. It’s tuning thresholds, designing fallbacks, and building observability. That’s where resilience is either earned or quietly lost.

Senior Software Engineer with a passion for building practical, user-centric applications. He specializes in full-stack development with a strong focus on crafting elegant, performant interfaces and scalable backend solutions. With experience leading teams and delivering robust, end-to-end products, he thrives on solving complex problems through clean and efficient code.