You have seen it play out. A candidate navigates a textbook system design interview flawlessly, name-checks Kafka, sketches a clean microservices diagram, discusses CAP tradeoffs, and still struggles six months into the role. Or the opposite happens: someone who fumbles through the whiteboard goes on to build resilient systems in production. The gap is not accidental. System design interviews optimize for performance under artificial constraints, while real engineering success depends on navigating ambiguity, legacy constraints, and organizational complexity over time.

What follows is not a critique of system design interviews as a concept, but a grounded look at why they often fail as predictors. More importantly, it is a guide to what signals actually matter when you are hiring or evaluating senior engineers responsible for systems that evolve under real load, real failures, and real business pressure.

1. They reward narrative coherence over operational reality

In an interview, a clean story wins. Candidates present well-structured architectures with clear boundaries, elegant scaling strategies, and tidy failure handling. In production, systems rarely behave that way.

The strongest engineers are not the ones who can tell the cleanest story. They are the ones who can reason through messy, partially degraded states. Think about Amazon’s Dynamo evolution, where real-world tradeoffs around consistency and availability forced continuous adaptation. That kind of thinking rarely surfaces in interviews because candidates are not penalized for ignoring operational entropy.

The result is a bias toward articulate architects rather than engineers who can debug distributed failure at 3 a.m. under incomplete observability.

2. They compress time and eliminate system evolution

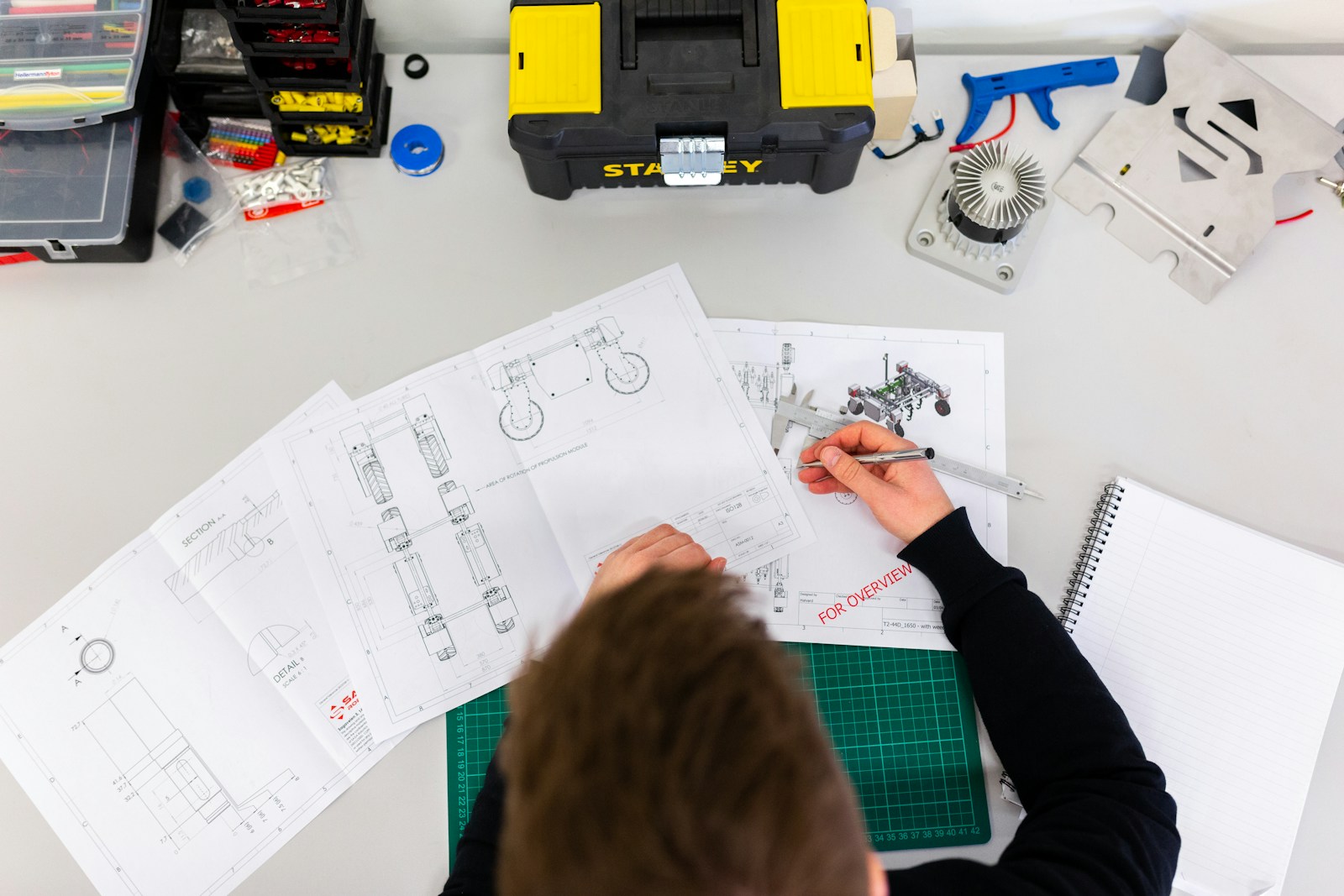

A 45-minute interview forces candidates to design a system as a static snapshot. Real systems evolve over quarters and years.

In practice, the hardest problems are not initial design decisions but how those decisions age. Schema migrations, backward compatibility, and incremental rewrites dominate senior engineering work. Consider Netflix’s migration from monolith to microservices, which took years of iterative decomposition, tooling investment, and organizational change.

Interviews rarely ask how a system evolves from version 1 to version 7 under live traffic. That omission removes one of the strongest predictors of long-term performance: the ability to manage change without breaking production.
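To make "managing change without breaking production" concrete, here is a minimal sketch of one common tactic: a reader that accepts both the old and the new record shape while a migration is in flight. The `User` type, the field names, and the `schema_version` marker are all hypothetical, chosen only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Any


@dataclass
class User:
    user_id: str
    given_name: str
    family_name: str


def parse_user(record: dict[str, Any]) -> User:
    """Accept both the old (v1) and the new (v2) record shape."""
    # Assumption: v1 records were written before versioning existed,
    # so a missing "schema_version" field is treated as version 1.
    version = record.get("schema_version", 1)
    if version == 1:
        # v1 stored a single "name" field; split it on the first space.
        given, _, family = record["name"].partition(" ")
        return User(record["id"], given, family)
    if version == 2:
        return User(record["id"], record["given_name"], record["family_name"])
    # Fail loudly on shapes this reader has never seen.
    raise ValueError(f"unsupported schema_version: {version}")
```

A candidate who reaches for something like this unprompted, and can say when the v1 branch is finally safe to delete, is showing the kind of judgment a greenfield design question rarely surfaces.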

3. They ignore organizational and socio-technical constraints

System design interviews assume you have full control. Real engineers operate inside constraints imposed by teams, org structure, and business priorities.

A design that looks optimal on a whiteboard may be unbuildable in a team with:

- Limited platform maturity

- Fragmented ownership boundaries

- Inconsistent deployment pipelines

- Conflicting stakeholder priorities

Senior engineers succeed by navigating these constraints, not ignoring them. The ability to align architecture with organizational reality often matters more than selecting the theoretically optimal data store or messaging system.

This is where interviews systematically mislead. They reward idealized design thinking while production rewards constraint-aware execution.

4. They over-index on known patterns instead of problem framing

Most candidates prepare by memorizing canonical architectures: rate limiters, news feeds, chat systems. Interviews reinforce this by asking predictable questions.

The problem is not pattern knowledge itself. It is that interviews rarely test whether candidates can frame an ambiguous problem before applying patterns.

In production, problem framing determines everything. A poorly framed problem leads to over-engineering or under-scaling. For example, teams often introduce Kafka prematurely when simpler queueing semantics would suffice, adding operational burden without clear benefit.

Strong engineers ask:

- What are the actual access patterns?

- What are the failure tolerances?

- What constraints matter most right now?

Interviews often skip this phase and jump straight to solutioning, rewarding pattern recall over critical thinking.

5. They fail to measure debugging and failure handling skills

Designing a system is only half the job. Keeping it running is the other half.

System design interviews rarely explore how candidates respond when things break. Yet in real environments, incidents define engineering maturity.

Consider Google’s SRE practices, where postmortems, error budgets, and observability are central to system reliability. The engineers who excel are those who can trace cascading failures, reason about partial outages, and implement mitigation under pressure.
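The arithmetic behind an error budget is simple enough to sketch. The example below assumes a plain availability SLO measured over a rolling window; the function names and the 30-day default are illustrative choices, not taken from any particular SRE tooling.

```python
def error_budget_minutes(slo: float, window_days: int = 30) -> float:
    """Allowed downtime, in minutes, for an availability SLO over a window."""
    if not 0.0 < slo < 1.0:
        raise ValueError("slo must be a fraction strictly between 0 and 1")
    total_minutes = window_days * 24 * 60
    return (1.0 - slo) * total_minutes


def budget_remaining(slo: float, downtime_minutes: float, window_days: int = 30) -> float:
    """Fraction of the error budget still unspent (negative means overspent)."""
    budget = error_budget_minutes(slo, window_days)
    return 1.0 - downtime_minutes / budget
```

With a 99.9% SLO over 30 days, the budget works out to roughly 43.2 minutes of downtime. Asking a candidate what they would do once half of that is gone in the first week tends to reveal more than asking them to draw another box on the diagram.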

A candidate who can design a distributed cache but cannot debug cache stampede behavior under load will struggle in production. Interviews often miss this entirely.
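For readers less familiar with the failure mode: a stampede happens when many requests miss the same key at once and all hit the backing store together. One common mitigation is request coalescing, sometimes called single-flight, where only the first caller loads the value and the rest wait for it. The sketch below is a minimal in-process version under simplifying assumptions (no expiry, no eviction, string keys); `SingleFlightCache` and `loader` are hypothetical names.

```python
import threading
from typing import Any, Callable, Dict


class SingleFlightCache:
    """In-memory cache that lets only one thread load a missing key."""

    def __init__(self, loader: Callable[[str], Any]) -> None:
        self._loader = loader
        self._values: Dict[str, Any] = {}
        self._locks: Dict[str, threading.Lock] = {}
        self._guard = threading.Lock()  # protects both dictionaries

    def get(self, key: str) -> Any:
        with self._guard:
            if key in self._values:
                return self._values[key]
            # All threads that miss on the same key share one per-key lock.
            lock = self._locks.setdefault(key, threading.Lock())
        with lock:
            # Re-check: another thread may have loaded the value while we waited.
            with self._guard:
                if key in self._values:
                    return self._values[key]
            value = self._loader(key)  # the expensive call, made by one thread only
            with self._guard:
                self._values[key] = value
                self._locks.pop(key, None)
            return value
```

If the loader raises, nothing is cached and the next waiting thread retries. Whether that is the right behavior under load, or whether it just moves the stampede, is exactly the kind of question worth putting to a candidate.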

6. They assume greenfield conditions that rarely exist

Most interview questions start from zero: design a system from scratch. Most real systems are anything but greenfield.

You inherit:

- Legacy services with unclear ownership

- Inconsistent data models

- Partial observability

- Technical debt accumulated over the years

The ability to improve systems incrementally is far more valuable than designing a perfect system from scratch. Yet interviews almost never test this.

In one large-scale fintech platform migration, engineers spent more time building compatibility layers and dual-write strategies than designing new services. That kind of work is invisible in interviews but central to long-term success.
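A dual-write strategy can be sketched in a few lines, which is part of why its real cost is easy to underestimate. The example below is a hypothetical illustration, not a description of that migration: it assumes the legacy store remains the source of truth, writes to the new store are best effort, and failures are recorded for later reconciliation. `DualWriteStore` and its methods are made-up names, and plain dictionaries stand in for real data stores.

```python
from typing import Any, List, MutableMapping, Tuple


class DualWriteStore:
    """Writes to a legacy store and a new store; reads stay on legacy."""

    def __init__(self, legacy: MutableMapping[str, Any], new: MutableMapping[str, Any]) -> None:
        self._legacy = legacy
        self._new = new
        # Keys whose shadow write failed, kept for a reconciliation job.
        self.shadow_failures: List[Tuple[str, Exception]] = []

    def put(self, key: str, value: Any) -> None:
        # Legacy is the source of truth, so a failure here propagates to the caller.
        self._legacy[key] = value
        try:
            self._new[key] = value
        except Exception as exc:
            # The new store must never break production writes during migration.
            self.shadow_failures.append((key, exc))

    def get(self, key: str) -> Any:
        return self._legacy[key]

    def diverged_keys(self) -> List[str]:
        """Keys where the new store is missing or disagrees with legacy."""
        return [
            k for k, v in self._legacy.items()
            if k not in self._new or self._new[k] != v
        ]
```

The hard parts are what this sketch leaves out: backfilling historical data, ordering concurrent writes, and deciding when the divergence count is low enough to flip reads to the new store.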

7. They measure individual performance, not system thinking at scale

System design interviews are individual exercises. Real systems are built by teams.

Senior engineers spend significant time:

- Influencing architectural decisions across teams

- Negotiating tradeoffs with product and business stakeholders

- Driving alignment on standards and interfaces

- Mentoring engineers through complex implementations

None of this shows up in a whiteboard session.

The ability to scale decision-making across an organization is often what differentiates staff-level engineers from strong seniors. Interviews that isolate candidates miss this entirely, selecting for strong solo performers rather than system-level thinkers.

Final thoughts

System design interviews are not useless, but they are incomplete. They capture a narrow slice of engineering capability under artificial conditions. If you rely on them as your primary signal, you will systematically misjudge long-term performance.

The better approach is to complement them with signals that reflect reality: evolution over time, incident response, constraint navigation, and cross-team influence. Systems do not fail because someone chose the wrong database in a vacuum. They fail because complexity compounds. Your hiring process should reflect that.
