You ship a new feature. Traffic spikes. One downstream service slows down, then times out. Threads pile up. CPU climbs. Suddenly, your healthy service becomes part of the outage.

If you have built distributed systems in the last decade, you have probably lived this story.

The circuit breaker pattern is a resilience design pattern that prevents cascading failures in distributed systems. It detects when a dependency is failing and temporarily stops sending requests to it, allowing the system to recover instead of amplifying the failure.

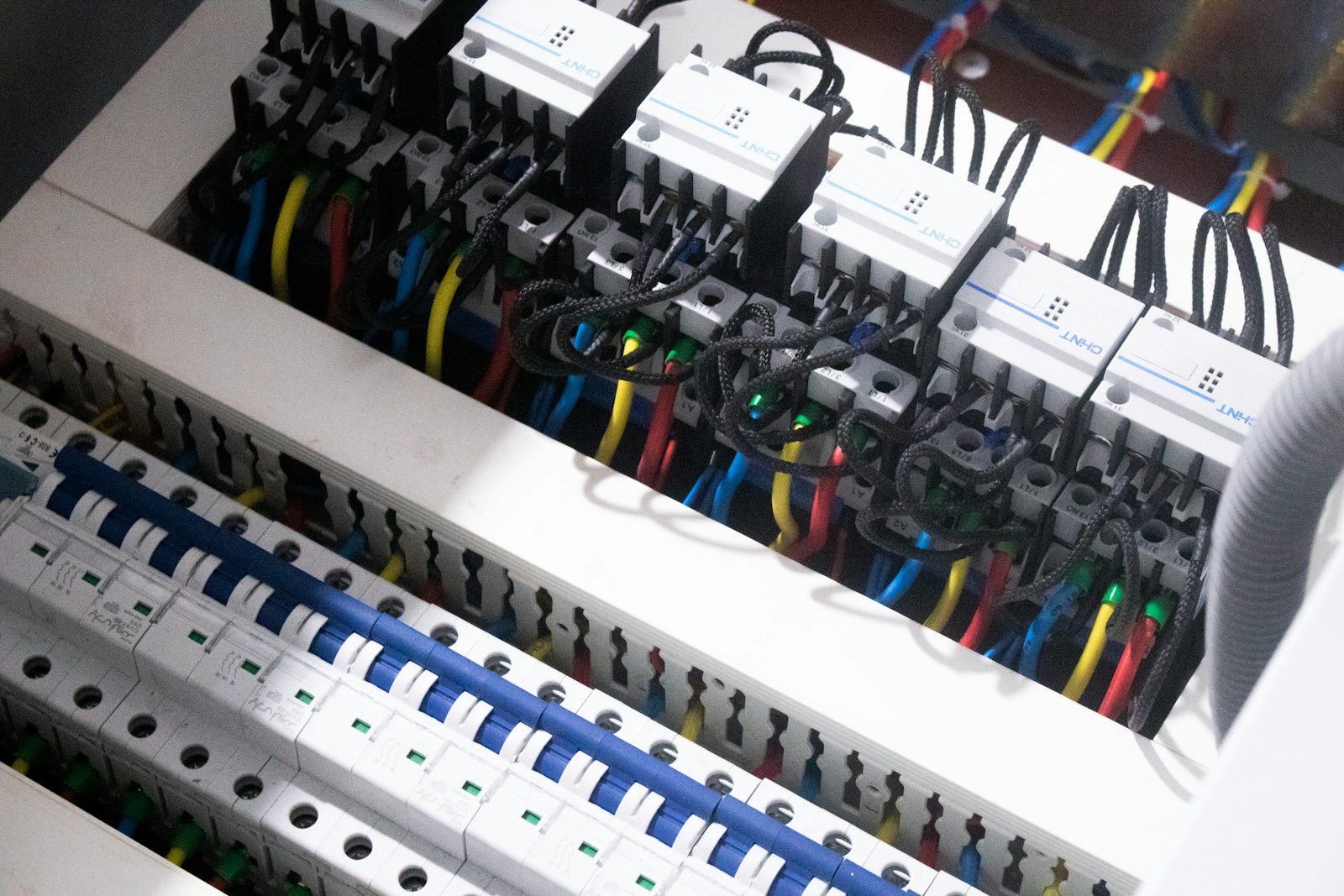

Think of it like the electrical circuit breaker in your house. When the current surges, it trips to prevent the wiring from melting. In software, when failures surge, the circuit breaker trips to prevent your system from collapsing under retry storms and blocked threads.

This pattern is not theoretical. It is one of the core building blocks behind Netflix’s microservices architecture, resilience libraries and frameworks like Resilience4j and Spring Cloud, and service meshes such as Istio. If you are operating APIs, microservices, or any system with remote dependencies, you are likely relying on it, whether you realize it or not.

What Experts Actually Say About Circuit Breakers

To ground this in real practice, we looked at how engineers who operate large-scale systems describe the pattern.

Martin Fowler, author and software architect, popularized the circuit breaker pattern in his writing on microservices. He explains that remote calls fail in ways local calls do not, and that without protection, latency and failure can spread across services. His core point is simple: you must detect repeated failures and fail fast rather than continue attempting work that is unlikely to succeed.

Hystrix engineers at Netflix described why they built the library in the first place. In large distributed systems, they observed that a single overloaded dependency could exhaust all available threads in upstream services. Their solution was to wrap calls in circuit breakers and bulkheads to contain the blast radius.

Michael Nygard’s book “Release It!” goes even deeper. He argues that stability patterns such as circuit breakers are not optimizations. They are survival mechanisms in production systems where partial failure is normal, not exceptional.

The synthesis across these perspectives is clear: failure is inevitable in distributed systems. The only question is whether your system fails gracefully or catastrophically.

The circuit breaker pattern is how you choose graceful.

The Real Problem: Partial Failure Is the Default

In a monolith running on one machine, a function call either works or throws an exception. In distributed systems, every remote call introduces new failure modes:

- Network timeouts

- DNS failures

- Slow downstream services

- Rate limiting

- Resource exhaustion

Now add retries. Many HTTP clients retry automatically. If a downstream service slows down, upstream services retry. Retries increase load. Increased load slows the service further. This feedback loop is called a retry storm.
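A back-of-the-envelope sketch shows why this loop gets dangerous when several layers retry. It assumes, hypothetically, that every layer in a call chain retries each failed call the same number of times and that every attempt fails. `worst_case_attempts` is an illustrative name, not a library function.

```python
def worst_case_attempts(retries_per_layer: int, layers: int) -> int:
    """Calls reaching the deepest service for one failing user request.

    Assumes each layer makes one attempt plus `retries_per_layer` retries
    and that every attempt fails, so attempts multiply layer by layer.
    """
    return (1 + retries_per_layer) ** layers


if __name__ == "__main__":
    print(worst_case_attempts(retries_per_layer=3, layers=1))  # 4
    print(worst_case_attempts(retries_per_layer=3, layers=3))  # 64
```

Three layers that each retry three times turn one failing request into 64 calls on the deepest service, which is exactly the extra load a struggling service cannot absorb.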

Here is where systems collapse.

If each request to Service A triggers a call to Service B, and B starts timing out after 5 seconds, your threads in A are blocked for 5 seconds per request. With enough traffic, your thread pool saturates. Now, A cannot respond to any requests, even the ones that do not need B.

The failure spreads.

The circuit breaker pattern interrupts this feedback loop.

How the Circuit Breaker Pattern Works

At its core, a circuit breaker wraps calls to an external dependency and tracks their outcomes.

It typically operates in three states:

- Closed: Requests flow normally. The breaker monitors the failure rate.

- Open: After a failure threshold is exceeded, the breaker “opens.” Calls fail immediately without attempting the remote request.

- Half-Open: After a cooldown period, the breaker allows a limited number of test requests. If they succeed, the breaker closes. If they fail, it reopens.

You can model the decision logic simply:

    if state == CLOSED and failure_rate > threshold over window:
        state = OPEN
    elif state == OPEN and cooldown_elapsed:
        state = HALF_OPEN
    elif state == HALF_OPEN:
        if test_request_success:
            state = CLOSED
        else:
            state = OPEN

This is deceptively simple. The power lies in how it changes system behavior under stress.
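The decision logic above fits in a small runnable state machine. This is a minimal, single-threaded sketch under stated assumptions: a count-based window that must fill before the breaker judges, a single trial call in half-open, and an injectable clock so the example runs instantly. `CircuitBreaker` and `CircuitOpenError` are hypothetical names, not any particular library's API, and a production breaker would also need locking.

```python
import time
from collections import deque
from typing import Callable, Deque, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without attempting it."""


class CircuitBreaker:
    """Count-based breaker sketch: CLOSED -> OPEN -> HALF_OPEN -> CLOSED or OPEN.

    Single-threaded on purpose; a real breaker needs locking and usually
    allows a configurable number of trial calls in HALF_OPEN.
    """

    def __init__(self, failure_threshold: float = 0.5, window_size: int = 20,
                 open_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.window: Deque[bool] = deque(maxlen=window_size)  # True = failed call
        self.open_seconds = open_seconds
        self.clock = clock
        self.state = "CLOSED"
        self.opened_at = 0.0

    def call(self, fn: Callable[[], T]) -> T:
        if self.state == "OPEN":
            if self.clock() - self.opened_at < self.open_seconds:
                raise CircuitOpenError("circuit open: failing fast")
            self.state = "HALF_OPEN"  # cooldown elapsed: let one trial call through
        try:
            result = fn()
        except Exception:
            self._record(failed=True)
            raise
        self._record(failed=False)
        return result

    def _record(self, failed: bool) -> None:
        if self.state == "HALF_OPEN":
            # The trial call decides: success closes the circuit, failure reopens it.
            if failed:
                self._trip()
            else:
                self.state = "CLOSED"
                self.window.clear()
            return
        self.window.append(failed)
        window_full = len(self.window) == self.window.maxlen
        if window_full and sum(self.window) / len(self.window) > self.failure_threshold:
            self._trip()

    def _trip(self) -> None:
        self.state = "OPEN"
        self.opened_at = self.clock()
        self.window.clear()


if __name__ == "__main__":
    now = [0.0]  # fake clock so the example runs instantly
    breaker = CircuitBreaker(window_size=4, open_seconds=10, clock=lambda: now[0])

    def timing_out() -> str:
        raise TimeoutError("Service B timed out")

    for _ in range(4):
        try:
            breaker.call(timing_out)
        except TimeoutError:
            pass
    print(breaker.state)  # OPEN: 4 of 4 calls failed, above the 50% threshold
    now[0] = 11.0         # cooldown elapsed
    print(breaker.call(lambda: "ok"), breaker.state)  # ok CLOSED
```

Injecting the clock keeps the example deterministic. In production you would leave the default, `time.monotonic`, which is not affected by wall-clock adjustments.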

When open, the breaker fails fast. Instead of waiting 5 seconds for a timeout, your service returns an error in milliseconds. That preserves threads, CPU, and memory.

You have traded correctness for availability, but only temporarily.

That trade is often what keeps your system alive.

Why Systems Rely on Circuit Breakers

There are four reasons this pattern has become foundational in cloud-native systems.

1. It Prevents Cascading Failures

Cascading failures occur when one failing service drags others down with it. By failing fast, the circuit breaker isolates the problem.

This containment principle mirrors what SRE teams call blast radius reduction. You cannot prevent all failures, but you can stop them from spreading.

2. It Protects Resources

Thread pools, connection pools, and CPU cycles are finite.

If remote calls hang, they consume those resources. Once exhausted, your service becomes unresponsive even if it is otherwise healthy.

Circuit breakers protect your system’s internal capacity by refusing work that is unlikely to succeed.

3. It Encourages Fallback Design

When you implement a breaker, you usually implement a fallback.

Instead of calling a recommendation service, you return cached recommendations. Instead of live pricing, you return last known values. Instead of full functionality, you degrade gracefully.

This mindset forces teams to ask a critical question: What is the minimal useful response we can return?

That question often leads to more resilient architectures.
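Those fallbacks often form a ladder. A minimal sketch, assuming a hypothetical recommendation lookup: try the live call, fall back to the last cached answer, and finally to a static default. `recommendations_for`, `fetch_live`, and `STATIC_DEFAULT` are illustrative names, and a plain dict stands in for a real cache.

```python
from typing import Callable, Dict, List

# Hypothetical last-resort answer when neither live nor cached data exists.
STATIC_DEFAULT: List[str] = ["bestseller-1", "bestseller-2"]


def recommendations_for(user_id: str,
                        fetch_live: Callable[[str], List[str]],
                        cache: Dict[str, List[str]]) -> List[str]:
    """Return the most useful answer available: live, then cached, then static."""
    try:
        fresh = fetch_live(user_id)
    except Exception:
        # Live call failed or the breaker rejected it: degrade instead of erroring.
        return list(cache.get(user_id, STATIC_DEFAULT))
    cache[user_id] = fresh  # keep the last good answer for the next outage
    return fresh


if __name__ == "__main__":
    def service_down(_user_id: str) -> List[str]:
        raise ConnectionError("recommendation service unavailable")

    cache = {"alice": ["book-42"]}
    print(recommendations_for("alice", service_down, cache))  # ['book-42']
    print(recommendations_for("bob", service_down, cache))    # ['bestseller-1', 'bestseller-2']
```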

4. It Creates Observability Hooks

Circuit breakers expose metrics such as:

- Failure rate

- Slow call rate

- Open state duration

- Rejection count

These metrics are early warning signals. If your breaker is opening frequently, you have a dependency reliability problem.

The breaker becomes both a guardrail and a sensor.
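A minimal sketch of collecting those signals, assuming a hypothetical `BreakerMetrics` class that a breaker would notify on every outcome and state change. Libraries such as Resilience4j expose comparable metrics through their own APIs, which this sketch does not reproduce; the 1-second slow-call cutoff is an assumed value.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class BreakerMetrics:
    """Counters for the breaker signals listed above (hypothetical, library-agnostic)."""

    slow_call_seconds: float = 1.0  # assumed cutoff for a "slow" call
    clock: Callable[[], float] = time.monotonic
    calls: int = 0
    failures: int = 0
    slow_calls: int = 0
    rejections: int = 0
    open_seconds_total: float = 0.0
    opened_at: Optional[float] = None

    def record_call(self, duration_s: float, failed: bool) -> None:
        self.calls += 1
        self.failures += int(failed)
        self.slow_calls += int(duration_s > self.slow_call_seconds)

    def record_rejection(self) -> None:
        self.rejections += 1  # call refused while the circuit was open

    def on_open(self) -> None:
        self.opened_at = self.clock()

    def on_close(self) -> None:
        if self.opened_at is not None:
            self.open_seconds_total += self.clock() - self.opened_at
            self.opened_at = None

    @property
    def failure_rate(self) -> float:
        return self.failures / self.calls if self.calls else 0.0

    @property
    def slow_call_rate(self) -> float:
        return self.slow_calls / self.calls if self.calls else 0.0
```

Exporting these numbers to your monitoring stack and alerting on a rising rejection count or long open durations is what turns the breaker into a sensor.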

How to Implement Circuit Breakers in Practice

You do not need to build this from scratch. Most ecosystems provide production-grade implementations.

Here is how to approach it methodically.

Step 1: Identify External Boundaries

Wrap calls that cross process boundaries:

- HTTP calls to other services

- Database connections

- Third-party APIs

- Message broker calls

If the call can fail independently of your process, it is a candidate.

Avoid wrapping trivial local calls. Overusing breakers adds complexity without value.

Step 2: Choose Sensible Thresholds

This is where most teams get it wrong.

Common parameters include:

- Failure rate threshold, for example, 50 percent

- Sliding window size, for example, the last 20 calls

- Open state duration, for example, 30 seconds

- Slow call duration threshold, the latency above which a call counts as slow

You should base these on actual latency and error distributions, not guesses.

If your normal error rate is 2 percent, a 5 percent threshold may be reasonable. If you operate in a noisy environment, you may need more tolerance.

Measure first. Tune second.
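A count-based sliding window makes these parameters concrete. This is a minimal sketch assuming a hypothetical `SlidingWindow` class with a minimum-call rule, so the breaker never judges on too few samples. It also exposes a resolution problem: in a 20-call window each failure moves the rate by 5 percentage points, so two failures already exceed a 5 percent threshold. To distinguish a 2 percent baseline from a real problem, you need a much larger window.

```python
from collections import deque
from typing import Deque


class SlidingWindow:
    """Count-based window over the outcomes of the last `size` calls."""

    def __init__(self, size: int, failure_threshold: float, minimum_calls: int) -> None:
        self.outcomes: Deque[bool] = deque(maxlen=size)  # True means the call failed
        self.failure_threshold = failure_threshold
        self.minimum_calls = minimum_calls

    def record(self, failed: bool) -> None:
        self.outcomes.append(failed)  # oldest outcome drops off once the window is full

    @property
    def failure_rate(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def should_trip(self) -> bool:
        # Too few samples: one unlucky call must not open the breaker.
        if len(self.outcomes) < self.minimum_calls:
            return False
        return self.failure_rate > self.failure_threshold


if __name__ == "__main__":
    window = SlidingWindow(size=20, failure_threshold=0.05, minimum_calls=10)
    for failed in [False] * 18 + [True, True]:
        window.record(failed)
    print(window.failure_rate, window.should_trip())  # 0.1 True
```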

Step 3: Implement Fallbacks That Make Sense

A circuit breaker without a fallback is just an aggressive error generator.

Consider:

- Cached responses

- Static defaults

- Feature flags to disable non-critical functionality

- Queueing requests for later processing

Be honest about trade-offs. Returning stale data might be acceptable. Returning incorrect financial transactions is not.
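For operations like payments, the honest fallback is often to accept the request for later processing and report it as pending, rather than pretend it succeeded. A minimal sketch, assuming a hypothetical `charge` callable and an in-memory `queue.Queue` standing in for a durable queue. It also assumes the charge is safe to retry later, which real payment systems typically guarantee with idempotency keys.

```python
import queue
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PaymentResult:
    payment_id: str
    status: str  # "completed" or "pending", never a made-up success


# Stand-in for a durable queue that a background worker drains later.
deferred_payments: "queue.Queue[str]" = queue.Queue()


def submit_payment(payment_id: str, charge: Callable[[str], None]) -> PaymentResult:
    try:
        charge(payment_id)
    except Exception:
        # Provider down or breaker open: defer the work and say so honestly.
        deferred_payments.put(payment_id)
        return PaymentResult(payment_id, "pending")
    return PaymentResult(payment_id, "completed")
```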

Step 4: Combine With Other Resilience Patterns

Circuit breakers are powerful, but on their own they are incomplete.

You should often combine them with:

- Timeouts to bound latency

- Retries with backoff to handle transient errors

- Bulkheads to isolate resource pools

- Rate limiting to protect downstream services
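Retries sit comfortably next to a breaker only when they back off. A minimal sketch of retries with capped exponential backoff and full jitter, assuming hypothetical helpers `backoff_bounds` and `call_with_retries`. The per-call timeout is assumed to be enforced by your HTTP or database client and to surface as `TimeoutError`. The sleep function and random source are injectable so the logic can be tested without waiting.

```python
import random
import time
from typing import Callable, List, Optional, Tuple, Type, TypeVar

T = TypeVar("T")


def backoff_bounds(retries: int, base_s: float = 0.1, cap_s: float = 2.0) -> List[float]:
    """Upper bound of each retry delay: base_s * 2**n, capped at cap_s."""
    return [min(cap_s, base_s * 2 ** n) for n in range(retries)]


def call_with_retries(
    fn: Callable[[], T],
    retries: int = 3,
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError),
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> T:
    """Retry only transient errors, with capped exponential backoff and full jitter."""
    rng = rng if rng is not None else random.Random()
    for bound in backoff_bounds(retries):
        try:
            return fn()
        except retry_on:
            # Full jitter: a random wait in [0, bound] keeps clients from retrying in lockstep.
            sleep(rng.uniform(0, bound))
    return fn()  # last attempt: any error now propagates to the caller
```

Only errors listed in `retry_on` are retried. A breaker's own fast-fail rejection is not in that list, so retries stop as soon as the circuit opens instead of feeding a retry storm.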

Netflix’s Hystrix famously combined circuit breakers with thread isolation. Modern libraries like Resilience4j support similar combinations.
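Thread isolation is one form of bulkhead. A lighter-weight variant caps concurrent calls per dependency with a semaphore and rejects the excess immediately. A minimal sketch, assuming a hypothetical `Bulkhead` class built on Python's `threading.BoundedSemaphore`; this is not the Hystrix or Resilience4j API.

```python
import threading
from typing import Callable, TypeVar

T = TypeVar("T")


class BulkheadFullError(Exception):
    """Raised when a dependency already has its maximum number of concurrent calls."""


class Bulkhead:
    def __init__(self, max_concurrent: int) -> None:
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def call(self, fn: Callable[[], T]) -> T:
        # Non-blocking acquire: reject immediately instead of parking another thread.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError("too many concurrent calls to this dependency")
        try:
            return fn()
        finally:
            self._slots.release()
```

Giving each downstream dependency its own bulkhead means a slow Service B can tie up at most its own slots, not the whole pool that serves other endpoints.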

Think of the breaker as one layer in a defense-in-depth strategy.

A Worked Example With Real Numbers

Assume:

- Service A receives 500 requests per second.

- Each request calls Service B.

- Service B latency jumps from 50 ms to 5 seconds.

Without a breaker:

- 500 requests per second × 5 seconds latency

- 2500 concurrent blocked requests

If your thread pool size is 200, you saturate in less than a second. After that, every request queues or fails.
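The saturation arithmetic is Little's law: the average number of requests in flight equals the arrival rate times the latency. A small sketch that reproduces the numbers above, assuming a fixed pool of 200 threads and that no stuck thread is released during the first second. The function names are illustrative.

```python
def in_flight(rate_per_s: float, latency_s: float) -> float:
    """Little's law: average concurrent requests = arrival rate x latency."""
    return rate_per_s * latency_s


def seconds_to_saturate(pool_size: int, rate_per_s: float) -> float:
    """Time for arrivals to occupy every thread, assuming none is released meanwhile."""
    return pool_size / rate_per_s


if __name__ == "__main__":
    print(in_flight(500, 0.050))          # 25.0 threads busy at the normal 50 ms latency
    print(in_flight(500, 5.0))            # 2500.0 requests want a thread at 5 s latency
    print(seconds_to_saturate(200, 500))  # 0.4 seconds until all 200 threads are busy
```

The same formula shows why failing fast helps: if rejected calls take about 1 ms, 500 requests per second occupy only about half a thread on average.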

With a breaker configured to open after 50 percent failures over 20 requests:

- First 20 calls experience timeouts.

- Breaker opens.

- Subsequent calls fail instantly.

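Instead of keeping the thread pool saturated for as long as B stays slow, you pay for roughly one timeout period. The breaker can only count a call as failed once it times out, so requests still pile up during those first 5 seconds; a tighter timeout shrinks that window. Once the breaker opens, threads drain and you preserve capacity for other endpoints.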

That difference is the gap between degradation and outage.

Common Mistakes to Avoid

Even experienced teams misapply the pattern.

One mistake is setting thresholds too aggressively. A breaker that opens on minor noise creates instability rather than resilience.

Another is ignoring half-open tuning. If you allow too many test requests, you can overwhelm a recovering service.

A third is forgetting visibility. If you do not monitor breaker state transitions, you are flying blind.

Finally, do not use breakers to hide systemic design flaws. If a dependency fails constantly, the answer may be an architectural change, not better thresholds.

FAQ

Is a circuit breaker the same as retry logic?

No. Retries attempt the same request again. Circuit breakers stop attempts entirely after repeated failures. They are complementary but serve different purposes.

Do you still need circuit breakers in a service mesh?

Often yes. Service meshes like Istio provide out-of-process circuit breaking, but application-level context may still require custom fallbacks and domain-specific logic.

Can small systems skip this pattern?

If you have no remote dependencies, maybe. But the moment you call a database, an API, or another service, you are in distributed systems territory. Even small systems benefit from defensive boundaries.

Honest Takeaway

The circuit breaker pattern is not a trendy abstraction. It is a pragmatic response to a hard truth: distributed systems fail in unpredictable ways.

If your architecture depends on remote calls, and most modern systems do, you need a mechanism that detects failure, fails fast, and recovers intelligently.

The real work is not adding a library. It is thinking deeply about your failure modes, your fallback behavior, and your resource limits.

Do that well, and circuit breakers become invisible guardians.

Ignore it, and the next partial outage might become a full-blown incident.

Kirstie is a technology news reporter at DevX. She reports on emerging technologies and startups waiting to skyrocket.