You can refactor code. You can swap frameworks. You can even migrate entire stacks over a long weekend if you are brave and caffeinated enough.

But if you get your database design wrong, you will feel it for years.

I have seen teams rewrite APIs three times while keeping the same flawed schema underneath. I have watched performance issues blamed on infrastructure when the real culprit was a data model decision made during a two-hour whiteboarding session before launch. Database design is not just a technical exercise. It is an architectural bet on how your system will evolve.

At its core, database design is the process of structuring data so your system can store, retrieve, and evolve information reliably. The problem is that three early decisions tend to lock in constraints that shape every future redesign, migration, and scaling effort.

Let’s talk about the three that matter most.

1. How You Model Your Core Entities and Relationships

This is the decision that feels the most obvious and yet causes the most pain.

When you define your core tables, documents, or collections, you are encoding your understanding of the business. What is a “user”? What is an “order”? Can an order exist without a user? Can a user belong to multiple organizations?

If you get this wrong, every future feature becomes a workaround.

We reviewed how experienced architects think about modeling tradeoffs. Martin Kleppmann, author of Designing Data-Intensive Applications, has repeatedly emphasized that data models are about shaping how the application evolves, not just how it stores data. He argues that flexibility and future queries matter more than theoretical elegance. In practice, that means modeling around real access patterns and expected change, not textbook normalization alone.

Similarly, Pat Helland, former Amazon and Microsoft engineer, has written extensively about entity boundaries and immutability. His core insight is that the way you define ownership and identity in your system dictates how easily you can scale and distribute it later.

Here is what seasoned teams consistently get right:

- They model for change, not just current features

- They treat IDs and relationships as long-term contracts

- They document invariants and constraints explicitly

Why this decision is so sticky

Once production data exists, changing relationships is expensive. Splitting one table into three requires backfills, dual writes, migrations, and careful sequencing. Merging entities is even worse because it can invalidate assumptions across services.

I worked with a SaaS team that initially modeled “Organization” as a soft grouping around users. A year later, enterprise customers demanded strict tenant isolation, custom billing hierarchies, and delegated administration. The original schema had users owning most resources directly. Reversing that relationship required months of refactoring and a risky data migration across millions of rows.

The lesson is simple: your first entity boundaries become your future constraints.
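As a minimal sketch of the alternative boundary, assume a hypothetical schema in which the organization owns resources and users attach through a membership table. The table and column names here (organizations, memberships, projects) are illustrative and not taken from the team described above.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves FK enforcement off by default

conn.executescript("""
CREATE TABLE organizations (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL UNIQUE);
-- Membership is its own entity, so roles and multi-org users can fit later.
CREATE TABLE memberships (
    user_id INTEGER NOT NULL REFERENCES users(id),
    organization_id INTEGER NOT NULL REFERENCES organizations(id),
    role TEXT NOT NULL DEFAULT 'member',
    PRIMARY KEY (user_id, organization_id)
);
-- Resources are owned by the organization (the tenant), not by a user.
CREATE TABLE projects (
    id INTEGER PRIMARY KEY,
    organization_id INTEGER NOT NULL REFERENCES organizations(id),
    created_by INTEGER REFERENCES users(id),
    name TEXT NOT NULL
);
""")

conn.execute("INSERT INTO organizations (id, name) VALUES (1, 'Acme')")
conn.execute("INSERT INTO users (id, email) VALUES (1, 'ana@example.com')")
conn.execute("INSERT INTO memberships (user_id, organization_id, role) VALUES (1, 1, 'admin')")
conn.execute("INSERT INTO projects (organization_id, created_by, name) VALUES (1, 1, 'Billing revamp')")
conn.commit()

def projects_for_org(org_id):
    """Every tenant-scoped read filters on organization_id."""
    rows = conn.execute(
        "SELECT name FROM projects WHERE organization_id = ? ORDER BY id", (org_id,)
    )
    return [name for (name,) in rows]

print(projects_for_org(1))  # ['Billing revamp']
```

With ownership on the organization, removing a user or adding a second organization for that user touches only the memberships table, not every resource row.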

2. Your Consistency and Transaction Model

The second decision is less visible but just as consequential: what guarantees does your database give you?

Are you using strong consistency with multi-row transactions, or are you embracing eventual consistency? Are you relying on foreign keys and constraints, or enforcing integrity in application code?

This decision shapes how you design workflows, error handling, and distributed systems.

We looked at guidance from practitioners operating at scale. Werner Vogels, CTO of Amazon, has long argued that availability often trumps strict consistency in large distributed systems. His position is pragmatic: in global systems, you cannot always have both without tradeoffs. Many high-scale systems choose eventual consistency deliberately.

On the other side, Peter Bailis, professor at Stanford and co-founder of Sisu Data, has published research showing how subtle consistency anomalies can create correctness bugs that are hard to detect. His work highlights that weaker guarantees require far more discipline at the application layer.

Here is the practical implication:

If you choose a database without strong transactions, you are signing up to implement invariants yourself. If you choose one with strong consistency, you may limit horizontal scalability or incur higher operational complexity.

A quick example with numbers

Imagine a payment system where each transaction debits one account and credits another.

With full ACID transactions:

- One transaction

- Two row updates

- Atomic commit

Result: near-zero risk of an inconsistent state, assuming the transaction is used correctly.
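Here is a small sketch of the ACID case using Python's built-in sqlite3 module. The accounts table and the transfer helper are hypothetical names chosen for illustration. The credit is applied first on purpose, so a failed debit shows the whole transfer rolling back.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE accounts ("
    "id INTEGER PRIMARY KEY, "
    "balance INTEGER NOT NULL CHECK (balance >= 0))"
)
conn.executemany("INSERT INTO accounts (id, balance) VALUES (?, ?)", [(1, 100), (2, 50)])
conn.commit()

def transfer(conn, src, dst, amount):
    """Debit src and credit dst atomically; both updates commit or neither does."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.execute("UPDATE accounts SET balance = balance + ? WHERE id = ?", (amount, dst))
            conn.execute("UPDATE accounts SET balance = balance - ? WHERE id = ?", (amount, src))
        return True
    except sqlite3.IntegrityError:
        # The CHECK constraint rejected an overdraft; the credit was rolled back too.
        return False

def balances(conn):
    return dict(conn.execute("SELECT id, balance FROM accounts ORDER BY id"))

print(transfer(conn, 1, 2, 30))   # True
print(transfer(conn, 1, 2, 500))  # False, account 1 cannot cover it
print(balances(conn))             # {1: 70, 2: 80}
```

The total across both accounts stays at 150 whether a transfer succeeds or fails, which is the invariant the database is enforcing on your behalf.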

With eventual consistency and no cross-row transactions:

- Two independent updates

- Retry logic

- Compensating transactions

If even 0.01 percent of operations fail between steps, and you process 10 million transfers per month, that is 1,000 inconsistent states to reconcile.
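The arithmetic is easy to check. This sketch uses exact fractions to avoid floating-point rounding; the function name is made up for illustration, and the yearly figure simply assumes the same rate every month with nothing reconciled.

```python
from fractions import Fraction

def expected_inconsistencies(transfers_per_month, failure_percent):
    """Expected number of transfers left half-applied each month."""
    return transfers_per_month * failure_percent / 100

monthly = expected_inconsistencies(10_000_000, Fraction(1, 100))  # 0.01 percent
print(monthly)       # 1000
print(monthly * 12)  # 12000
```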

You can build reconciliation systems. Many do. But that is a design tax you pay forever.
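To give a sense of what that tax looks like, here is a minimal reconciliation check. It assumes a hypothetical ledger_entries table where every transfer writes one negative entry (the debit) and one positive entry (the credit), so a healthy transfer nets to zero.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE ledger_entries (
    transfer_id TEXT NOT NULL,
    account_id INTEGER NOT NULL,
    amount_cents INTEGER NOT NULL  -- negative for a debit, positive for a credit
)
""")
conn.executemany(
    "INSERT INTO ledger_entries VALUES (?, ?, ?)",
    [
        ("t1", 1, -500), ("t1", 2, 500),  # complete transfer
        ("t2", 1, -300),                  # debit applied, credit never landed
        ("t3", 3, -200), ("t3", 1, 200),  # complete transfer
    ],
)
conn.commit()

def unbalanced_transfers(conn):
    """Transfers whose entries do not net to zero and need a retry or compensation."""
    rows = conn.execute("""
        SELECT transfer_id, SUM(amount_cents)
        FROM ledger_entries
        GROUP BY transfer_id
        HAVING SUM(amount_cents) != 0
        ORDER BY transfer_id
    """)
    return rows.fetchall()

print(unbalanced_transfers(conn))  # [('t2', -300)]
```

A real reconciliation job would also need to decide whether to retry the missing credit or reverse the debit, and it would run on a schedule for as long as the system exists.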

The key insight is that your consistency model determines how much complexity migrates from the database into your application and operations.

3. Your Strategy for Schema Evolution

The third decision is about time.

You will change your schema. That is guaranteed. The real question is how painful that process will be.

Some teams treat schemas as rigid contracts. Others treat them as living documents with versioning, feature flags, and backward compatibility strategies.

We examined how companies manage this at scale. Charity Majors, CTO of Honeycomb, often emphasizes the importance of designing systems that are easy to change under real production load. Observability aside, her broader message applies to schemas too: if change requires heroics, you have already lost.

In the database world, that translates to a few practical patterns:

- Backward-compatible migrations first, destructive changes later

- Expand-and-contract strategy for renames and splits

- Versioned APIs decoupled from physical schemas

The expand and contract pattern in practice

Suppose you want to rename a column from full_name to display_name.

A naive approach:

- Rename the column

- Deploy new code

This will break any old application instance still referencing full_name.

A safer approach:

- Add display_name

- Backfill from full_name

- Deploy code that writes to both

- Migrate reads to display_name

- Drop full_name later

This feels slower, but it allows zero-downtime evolution.
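The safer sequence can be sketched end to end against an in-memory SQLite database. The users table and the helper functions are assumptions for illustration. In production each step would be a separate deploy or migration, and the backfill would normally run in batches.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, full_name TEXT)")
conn.executemany(
    "INSERT INTO users (full_name) VALUES (?)", [("Ada Lovelace",), ("Alan Turing",)]
)
conn.commit()

# 1. Expand: add the new column; old code that only knows full_name keeps working.
conn.execute("ALTER TABLE users ADD COLUMN display_name TEXT")

# 2. Backfill existing rows from the old column.
conn.execute("UPDATE users SET display_name = full_name WHERE display_name IS NULL")
conn.commit()

# 3. Dual write: new code populates both columns during the transition.
def create_user(conn, name):
    with conn:
        conn.execute(
            "INSERT INTO users (full_name, display_name) VALUES (?, ?)", (name, name)
        )

create_user(conn, "Grace Hopper")

# 4. Migrate reads to the new column.
def list_names(conn):
    rows = conn.execute("SELECT display_name FROM users ORDER BY id")
    return [name for (name,) in rows]

# 5. Contract: drop the old column only after nothing reads or writes it
#    (create_user above would have to stop writing full_name first).
#    DROP COLUMN needs SQLite 3.35 or newer, so the sketch guards on the version.
if sqlite3.sqlite_version_info >= (3, 35, 0):
    conn.execute("ALTER TABLE users DROP COLUMN full_name")

print(list_names(conn))  # ['Ada Lovelace', 'Alan Turing', 'Grace Hopper']
```

One caveat on ordering: rows written by old instances after the backfill but before the dual-write deploy would still have an empty display_name, so they need a second backfill pass. Some teams ship the dual-write code before backfilling for that reason.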

The deeper design choice is whether your system supports this gracefully. If your application tightly couples SQL queries to domain logic, even small schema changes become risky. If you abstract data access and treat migrations as first-class operations, evolution becomes routine.

Your schema evolution strategy determines whether redesigns are quarterly rituals or emergency projects.

How These Three Decisions Interact

These decisions do not exist in isolation.

Your entity modeling affects how hard it is to change schemas. Your consistency model affects how risky migrations are. Your evolution strategy determines how safely you can revisit your original modeling mistakes.

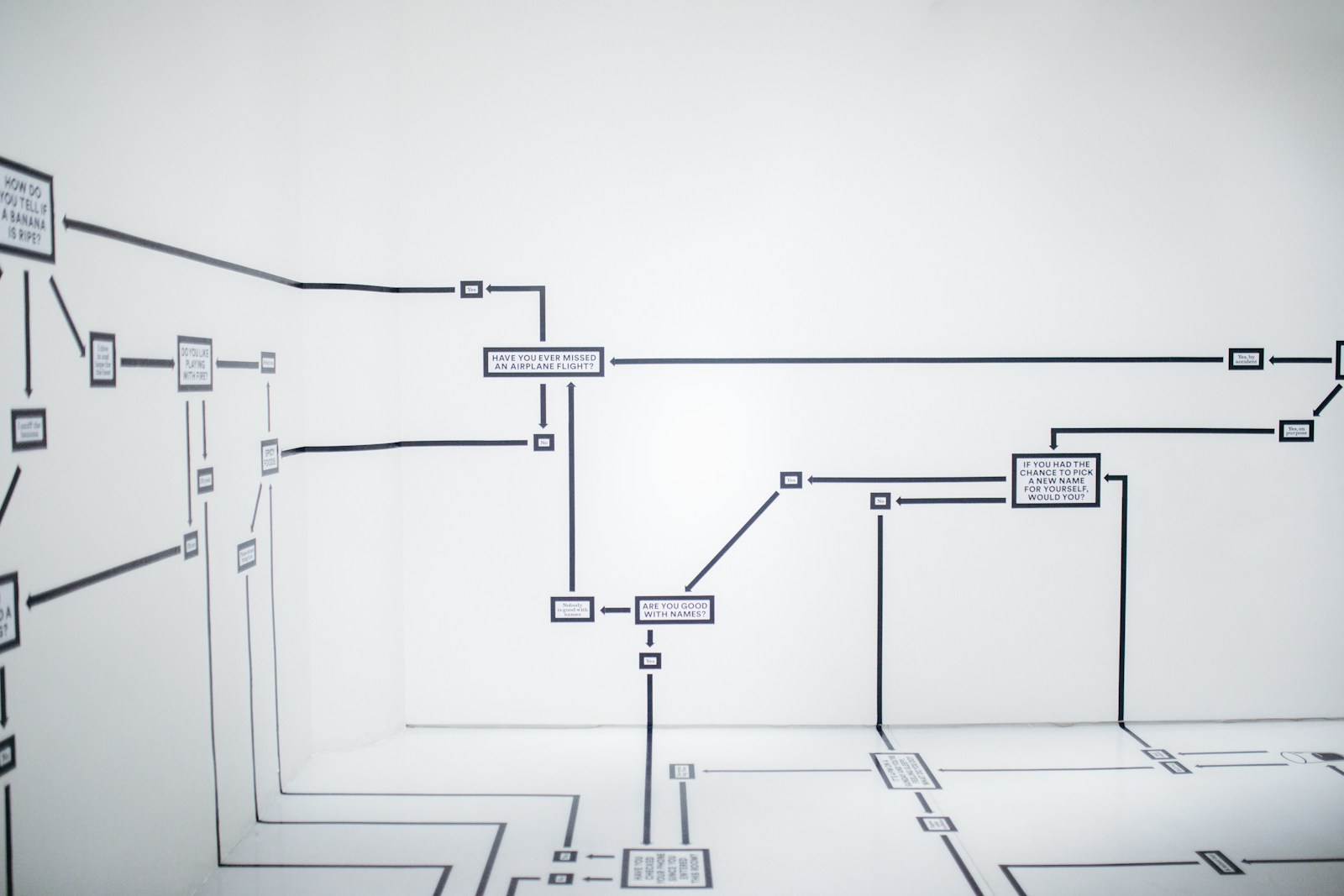

Think of them as a triangle:

- Entity boundaries define structure

- Consistency model defines guarantees

- Evolution strategy defines adaptability

If you optimize only one corner, you often pay in another.

For example, hyper-normalized schemas with strict constraints might maximize data integrity. But if your evolution strategy is weak, every structural change becomes a high-risk operation. Conversely, a schemaless design may evolve quickly early on, but without clear entity boundaries, long-term complexity accumulates invisibly.

There is no universal best choice. There are only tradeoffs that should be made deliberately.

How to Make These Decisions More Safely

If you are designing a new system or planning a redesign, here is a practical approach that has worked well in the field.

1. Model from real queries, not abstract nouns

List your top ten read and write patterns. Design tables or documents to serve those directly. If you cannot describe how a feature will query the data, you are modeling too abstractly.
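As a rough illustration, take one hypothetical read pattern, "show a user's ten most recent orders", and design the index for it. The orders table, the index name, and the query are assumptions; the point is to confirm with the query planner that the pattern is actually served.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (
    id INTEGER PRIMARY KEY,
    user_id INTEGER NOT NULL,
    created_at TEXT NOT NULL,
    total_cents INTEGER NOT NULL
);
-- Serves "latest orders for one user": equality on user_id, then ordered by created_at.
CREATE INDEX idx_orders_user_created ON orders (user_id, created_at);
""")

RECENT_ORDERS = """
SELECT id, total_cents FROM orders
WHERE user_id = ? ORDER BY created_at DESC LIMIT 10
"""

def query_plan(conn, sql, params):
    """Return SQLite's plan descriptions for a query (the last column of each plan row)."""
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]

plan = query_plan(conn, RECENT_ORDERS, (42,))
print(plan)
```

If the plan mentions a full table scan instead of the index, the model does not yet serve the access pattern it was supposedly designed for.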

2. Write down invariants explicitly

What must always be true? A user must belong to exactly one organization. An invoice must have at least one line item. If you cannot enforce it in the database, document how you enforce it in code.
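Both kinds of enforcement can sit side by side, as in this sketch with a hypothetical schema. "Exactly one organization" maps naturally to a NOT NULL foreign key, while "at least one line item" is hard to express as a simple column constraint, so here it is enforced and documented in a single code path.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves FK enforcement off by default
conn.executescript("""
CREATE TABLE organizations (id INTEGER PRIMARY KEY);
-- "Exactly one organization": a single NOT NULL foreign key per user.
CREATE TABLE users (
    id INTEGER PRIMARY KEY,
    organization_id INTEGER NOT NULL REFERENCES organizations(id)
);
CREATE TABLE invoices (id INTEGER PRIMARY KEY);
CREATE TABLE line_items (
    id INTEGER PRIMARY KEY,
    invoice_id INTEGER NOT NULL REFERENCES invoices(id),
    amount_cents INTEGER NOT NULL CHECK (amount_cents > 0)
);
""")

def create_invoice(conn, amounts_cents):
    """Invariant enforced in code: an invoice must have at least one line item."""
    if not amounts_cents:
        raise ValueError("an invoice needs at least one line item")
    with conn:  # the invoice and its items commit together or not at all
        invoice_id = conn.execute("INSERT INTO invoices DEFAULT VALUES").lastrowid
        conn.executemany(
            "INSERT INTO line_items (invoice_id, amount_cents) VALUES (?, ?)",
            [(invoice_id, cents) for cents in amounts_cents],
        )
    return invoice_id
```

The code-level check only holds if every writer goes through create_invoice, which is exactly the kind of assumption worth writing down next to the schema.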

3. Simulate one major pivot

Before launch, ask: what if we need multi-tenancy? What if we need regional sharding? What if this entity becomes many-to-many instead of one-to-many? If your model collapses under a plausible scenario, rethink it early.

4. Practice a migration before you need one

Run a non-trivial schema migration in staging with production-like data volume. Measure how long it takes. Observe locking behavior. You will learn more in one rehearsal than in a dozen design meetings.
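A rehearsal can start as a small timing harness. This sketch uses an in-memory SQLite table with synthetic rows, so it only shows the shape of the exercise: the events table and the migration are hypothetical, and in-memory SQLite says nothing about locking behavior in your production engine.

```python
import sqlite3
import time

def rehearse_migration(row_count):
    """Build a throwaway table of row_count rows, run a migration, and time it."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, payload TEXT NOT NULL)")
    conn.executemany(
        "INSERT INTO events (payload) VALUES (?)",
        (("event-%d" % i,) for i in range(row_count)),
    )
    conn.commit()

    started = time.perf_counter()
    with conn:  # the schema change and the backfill commit together
        conn.execute("ALTER TABLE events ADD COLUMN payload_size INTEGER")
        conn.execute("UPDATE events SET payload_size = length(payload)")
    elapsed = time.perf_counter() - started

    migrated = conn.execute(
        "SELECT COUNT(*) FROM events WHERE payload_size IS NOT NULL"
    ).fetchone()[0]
    conn.close()
    return migrated, elapsed

for rows in (1_000, 10_000, 100_000):
    migrated, elapsed = rehearse_migration(rows)
    print(f"{rows:>7} rows: migrated {migrated} in {elapsed:.4f}s")
```

Watching how the elapsed time grows with row count gives a first estimate to compare against the real rehearsal in staging.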

FAQ

Is normalization always the right choice?

Not always. Normalization reduces redundancy and enforces integrity, but can increase join complexity and reduce performance for certain read patterns. Many high-scale systems deliberately denormalize for performance and simplicity at the query layer.

Should you start with SQL or NoSQL?

The real question is not SQL versus NoSQL. It is what consistency guarantees, query flexibility, and operational complexity you are willing to manage. Modern SQL systems scale far beyond what most teams need. Start with the guarantees your business logic requires.

Can you redesign a bad schema later?

Yes, but it is rarely cheap. Data migrations, downtime risks, and cross-service coordination all compound over time. The earlier you correct structural mistakes, the cheaper they are.

Honest Takeaway

You cannot future-proof a database. You can only make conscious tradeoffs.

The three decisions that shape your system are how you model entities, what guarantees you rely on, and how you plan to evolve. Everything else is tuning and tooling.

If you slow down and treat those choices as long-term architectural commitments rather than implementation details, you will not eliminate redesigns. But you will turn them from existential crises into manageable projects.

Rashan is a seasoned technology journalist and visionary leader serving as the Editor-in-Chief of DevX.com, a leading online publication focused on software development, programming languages, and emerging technologies. With deep expertise in the tech industry and a passion for empowering developers, Rashan has transformed DevX.com into a vibrant hub of knowledge and innovation. Reach out to Rashan at [email protected]