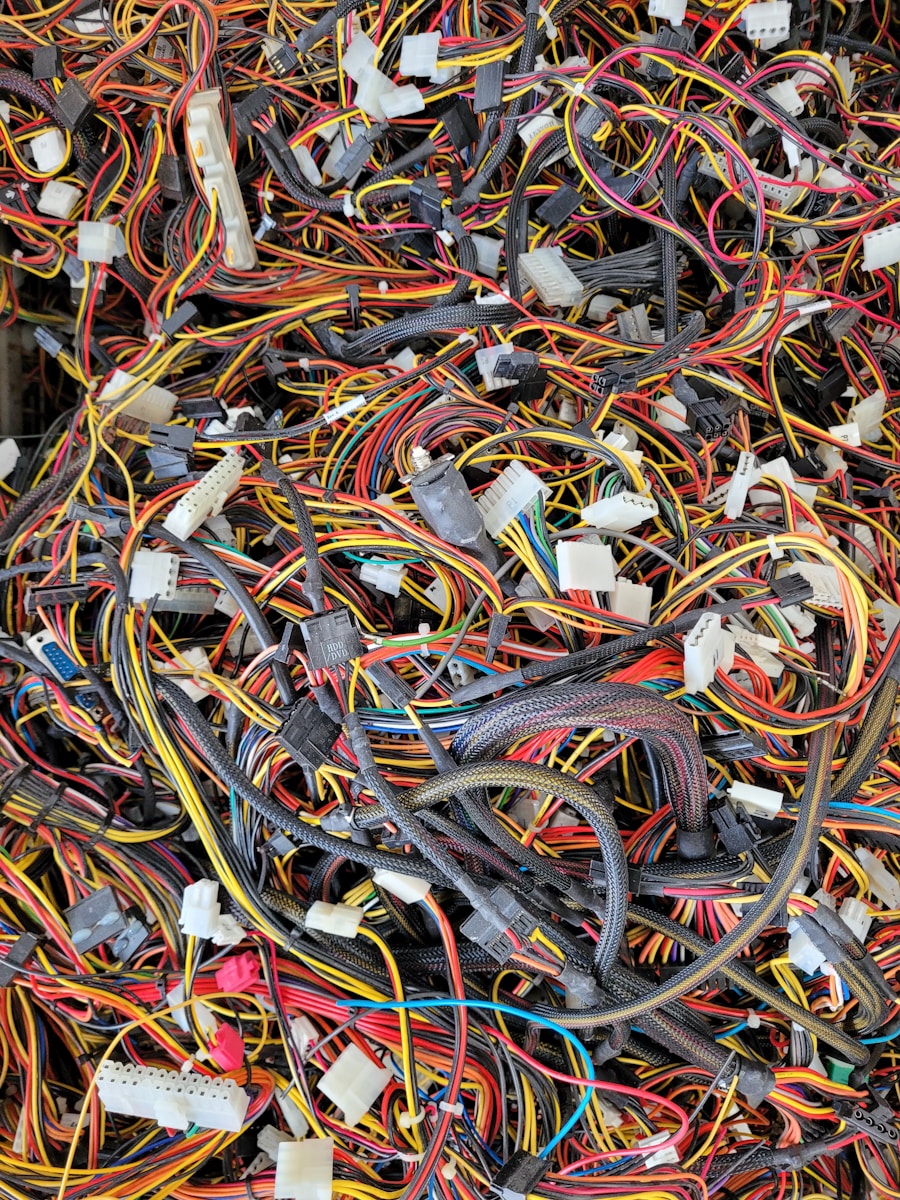

Most teams do not set out to build tightly coupled systems. They set out to move faster, reduce coordination overhead, and ship around constraints that feel temporary. A shared database gets rationalized as expedient. A platform team adds one more “helpful” abstraction. A service starts calling another service synchronously because the business logic already lives there. None of these decisions looks catastrophic in isolation. In production, though, they stack into a system where one team’s deploy window becomes another team’s outage risk, and where local optimization quietly turns into organizational drag. Hidden coupling is dangerous precisely because it rarely shows up in architecture diagrams. It shows up in incident timelines, delayed releases, migration pain, and teams that cannot change one thing without discovering five undocumented dependencies.

1. Shared data models start masquerading as shared understanding

One of the fastest ways teams create hidden coupling is by treating a single schema as proof of a single domain model. At first, this feels clean. Everyone reads from the same tables, uses the same event shape, and avoids duplication. In practice, you end up coupling teams to implementation details, naming decisions, and field semantics that were optimized for a different context. The problem is not only technical. It is cognitive. Once multiple teams depend on the same canonical object, changing that object becomes a negotiation exercise.

You see this most often in systems where “customer,” “order,” or “account” means something slightly different depending on the workflow. A payments team cares about reconciliation state. A growth team cares about segmentation. A support tool cares about operator visibility. Forcing those concerns into one shared model creates a false sense of alignment. Amazon’s two-pizza team model became powerful partly because service boundaries limited this kind of cross-team dependency. When your teams share data structures more than they share outcomes, you usually get fragile coordination instead of consistency.
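One way to avoid forcing every team through a single canonical object is to translate a shared upstream record into small, context-specific models at each team's boundary. Domain-driven design calls this an anti-corruption layer. The sketch below assumes a hypothetical upstream "customer" row with illustrative field names (`id`, `recon_status`, `segment`). It is not a prescribed schema.

```python
from dataclasses import dataclass
from typing import Any, Mapping

# Hypothetical upstream record: a shared "customer" row as some producer
# happens to store it. Field names are illustrative only.
UpstreamCustomer = Mapping[str, Any]


@dataclass(frozen=True)
class PaymentsCustomer:
    """What a payments team needs: reconciliation state, nothing else."""
    customer_id: str
    reconciled: bool


@dataclass(frozen=True)
class GrowthCustomer:
    """What a growth team needs: segmentation attributes."""
    customer_id: str
    segment: str


def to_payments(row: UpstreamCustomer) -> PaymentsCustomer:
    # Translation lives at the boundary, so an upstream rename touches one function.
    return PaymentsCustomer(
        customer_id=str(row["id"]),
        reconciled=row.get("recon_status") == "settled",
    )


def to_growth(row: UpstreamCustomer) -> GrowthCustomer:
    # Missing segmentation data degrades to a default instead of failing.
    return GrowthCustomer(
        customer_id=str(row["id"]),
        segment=row.get("segment", "unknown"),
    )
```

Each team depends only on its own small model. When the upstream producer renames `recon_status`, the payments mapping changes in one place, and the growth team never notices.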

2. Synchronous service calls creep into paths that should tolerate delay

A lot of hidden coupling starts as a reasonable shortcut: instead of re-implementing logic, one service just calls another. Then that call gets added to a request path with user-facing latency requirements. Then retries, timeouts, and fallback logic get bolted on after the first production incident. Before long, the availability profile of one service has silently become the availability profile of three.

This is where teams confuse reuse with good boundaries. Reusing behavior by making a synchronous dependency sounds efficient, but it often imports another team’s deployment cadence, rate limits, incident patterns, and backlog priorities into your own critical path. Netflix’s work on resilience patterns became influential for exactly this reason. Once systems are distributed, you do not get the simplicity of local function calls. You get partial failure, queue growth, timeout tuning, and cascading degradation. Not every synchronous dependency is wrong. Sometimes strong consistency really matters. But when a service cannot answer a basic request because a non-critical downstream feature is slow, coupling has already become an operational problem.
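For a downstream feature that is genuinely non-critical, a common mitigation is to bound how long the caller waits and to answer with a fallback when the dependency is slow or failing. Below is a minimal sketch using Python's standard `concurrent.futures`. `recommendations_from_downstream` is a hypothetical stand-in for a slow service, and the pool size is arbitrary.

```python
import concurrent.futures
import time
from typing import Callable, TypeVar

T = TypeVar("T")

# Shared worker pool for downstream calls; the size is arbitrary for this sketch.
_POOL = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def call_with_fallback(fn: Callable[[], T], timeout_s: float, fallback: T) -> T:
    """Run a non-critical downstream call, waiting at most timeout_s seconds.

    On timeout or error, return the fallback so the caller can still answer.
    The slow call is not cancelled: it keeps occupying a worker thread until it
    finishes, so a real client should also set its own network timeout.
    """
    future = _POOL.submit(fn)
    try:
        return future.result(timeout=timeout_s)
    except concurrent.futures.TimeoutError:
        # Downstream is slow: degrade instead of inheriting its latency.
        return fallback
    except Exception:
        # Downstream failed: degrade instead of inheriting its outage.
        return fallback


def recommendations_from_downstream() -> list[str]:
    # Hypothetical stand-in for a slow, non-critical downstream service.
    time.sleep(0.3)
    return ["item-1", "item-2"]
```

With `call_with_fallback(recommendations_from_downstream, timeout_s=0.05, fallback=[])`, the page renders without recommendations instead of stalling for 300 ms. The key design decision is deciding per call site whether an empty or cached answer is acceptable. Where it is not, the dependency really is critical, and that coupling should be owned explicitly.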

3. Platform abstractions become mandatory before they become trustworthy

Internal platforms are supposed to reduce repetition and standardize good defaults. They often do, especially when they simplify deployment, observability, identity, or policy enforcement. But teams accidentally create coupling when platform abstractions become unavoidable before they are stable, understandable, and escape-hatch friendly. That is when “golden paths” stop feeling like enablement and start acting like control planes for every engineering decision.

This usually happens when the platform encodes assumptions that fit one workload but not another. A team building event-driven batch pipelines gets the same templates as a low-latency API team. A data-heavy service is forced through a deployment model built for stateless web apps. Because the abstraction is centrally managed, every exception turns into process friction. The code still compiles. The coupling shows up in architecture review queues and blocked migrations. A healthy platform reduces accidental complexity without hiding essential complexity. Once teams have to understand the platform’s internals just to ship safely, the abstraction has become a new dependency surface.
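One way to keep a golden path from hardening into a control plane is to ship defaults that teams can override explicitly, with every override recorded and visible. The sketch below assumes a hypothetical `DeploySpec` whose defaults suit stateless web apps. The field names are illustrative and do not correspond to any real platform's API.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class DeploySpec:
    """Hypothetical platform deploy template; defaults suit stateless web apps."""
    replicas: int = 3
    stateless: bool = True
    max_surge: int = 1
    health_check_path: str = "/healthz"
    overrides: tuple[str, ...] = ()  # which defaults this team chose to change


def golden_path(**changes) -> DeploySpec:
    """Start from platform defaults, then apply explicit, recorded overrides.

    Unknown settings fail loudly instead of being silently ignored, so an
    exception shows up in review rather than in a quietly forked template.
    """
    known = {f.name for f in fields(DeploySpec)} - {"overrides"}
    unknown = set(changes) - known
    if unknown:
        raise ValueError(f"unknown deploy settings: {sorted(unknown)}")
    return replace(DeploySpec(), **changes, overrides=tuple(sorted(changes)))
```

A data-heavy service could call `golden_path(stateless=False, replicas=1)` and still get the platform's other defaults. The recorded `overrides` field lets the platform team see where its assumptions do not fit, without requiring a review for each exception.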

4. Local ownership ends at the repo, but dependencies live in runtime behavior

Teams often believe they are decoupled because they own separate repositories, separate pipelines, and separate on-call rotations. That is structural independence, not necessarily runtime independence. Hidden coupling appears when service behavior depends on undocumented assumptions about request volume, cache warmth, retry timing, or deployment order. None of that is visible in a repo boundary.

You can see this during releases that look safe in code review but fail in production because a downstream service assumes an old field order, an old rate pattern, or a particular bootstrapping sequence. Google’s SRE practices pushed the industry to think in terms of error budgets and service behavior precisely because architecture is not just code organization. It is how systems behave under stress. If two teams cannot independently deploy because warm-up behavior, schema drift, or traffic spikes create instability, they are coupled whether or not the org chart says otherwise.

A simple heuristic helps here:

| Looks decoupled on paper | Actually coupled in production |
|---|---|
| Separate repos | Shared runtime assumptions |
| Independent deploy pipelines | Ordered deploy requirements |
| Distinct service ownership | Shared failure domains |
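The "old field order" failure above is easy to reproduce. The sketch below contrasts a positional parser with a tolerant reader, which binds fields by name and ignores columns it does not need. The CSV feed and its column names are hypothetical.

```python
import csv
import io


def parse_positional(line: str) -> dict:
    # Brittle: silently assumes the producer's column order never changes.
    order_id, amount, currency = line.split(",")
    return {"order_id": order_id, "amount": amount, "currency": currency}


def parse_by_name(text: str) -> list[dict]:
    """Tolerant reader: bind fields by header name and ignore unknown columns."""
    wanted = ("order_id", "amount", "currency")
    rows = []
    for record in csv.DictReader(io.StringIO(text)):
        # Missing columns come back as None instead of shifting other fields.
        rows.append({key: record.get(key) for key in wanted})
    return rows
```

If the producer reorders its columns to `currency,order_id,amount`, `parse_positional` keeps running but returns the currency as the order ID. That is a silent runtime coupling no repo boundary reveals. `parse_by_name` survives both the reorder and an added `region` column.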

5. Observability gets designed per team instead of per dependency chain

Another common source of hidden coupling is fragmented observability. Every team has logs, metrics, and dashboards for its own service, but no one has visibility into the full dependency path that a user request or business workflow actually follows. That fragmentation hides coupling until an incident forces everyone into the same war room.

The issue is not lack of tooling. Most teams already have tracing, log aggregation, and metrics pipelines. The problem is that instrumentation often mirrors team boundaries instead of execution paths. When trace context breaks between services, when alert thresholds are tuned locally, or when SLIs optimize for component health rather than end-to-end behavior, teams lose sight of how tightly their systems depend on each other. I have seen organizations where each service reported healthy p95 latency while the user workflow still failed because five “acceptable” latencies compounded into a terrible experience. That is a coupling problem disguised as a monitoring problem.
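A quick calculation shows how per-service "healthy" numbers can hide a poor end-to-end experience. Assume each hop in a serial request path independently stays under its own p95 latency 95% of the time. Under that assumption, the chance that a request stays under every hop's p95 is 0.95 raised to the number of hops. Real hops are rarely independent, so treat this as an illustration rather than a model.

```python
def prob_some_hop_slow(hops: int, per_hop_quantile: float = 0.95) -> float:
    """Probability that at least one hop lands beyond its own latency quantile.

    Assumes each hop independently stays under its quantile with probability
    per_hop_quantile, so the whole chain does so with per_hop_quantile ** hops.
    """
    if hops < 0:
        raise ValueError("hops must be non-negative")
    return 1.0 - per_hop_quantile ** hops


for n in (1, 3, 5, 10):
    print(f"{n:>2} hops: {prob_some_hop_slow(n):.1%} of requests hit at least one slow hop")
```

With five hops, roughly 22.6% of requests hit at least one hop's tail, even though every service individually meets its p95 target. With ten hops, the figure is about 40%. This is why SLIs defined on the user-facing workflow catch coupling that component dashboards miss.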

6. Domain decisions get centralized, but implementation consequences stay distributed

Teams also create hidden coupling when decision authority about a domain is centralized, while implementation remains spread across multiple services and teams. On paper, this gives consistency. In reality, it often produces a model where one team makes semantic changes and several other teams absorb the migration cost. The owning team may not even see the blast radius because the downstream consequences surface weeks later in data quality issues, brittle integrations, or delayed feature work.

This shows up in identity, pricing, entitlements, and customer state more than anywhere else. These domains touch many workflows, so teams naturally try to centralize them. The trap is assuming that central ownership removes the need for interface discipline. It does not. If the contract between teams is vague, every “small” change becomes distributed rework. The early microservices wave at many enterprises exposed this pattern sharply: services were split for ownership reasons, but domains were still so cross-cutting that organizations recreated monolith-style dependencies over the network. Splitting code without reducing semantic dependency just moves the pain.
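One way to add interface discipline to a centralized domain is the idea behind consumer-driven contract testing. Each consuming team declares the fields it actually relies on, and the owning team checks a proposed change against those declarations before rollout. The sketch below uses a hypothetical "entitlement" payload and team names. It does not model any specific contract-testing tool.

```python
from typing import Any, Mapping

# Hypothetical consumer declarations: each downstream team lists the fields
# (and types) it actually relies on from a shared "entitlement" payload.
CONSUMER_CONTRACTS: dict[str, dict[str, type]] = {
    "billing": {"account_id": str, "plan": str, "seats": int},
    "support-tool": {"account_id": str, "plan": str},
}


def contract_violations(payload: Mapping[str, Any]) -> dict[str, list[str]]:
    """Return, per consumer, which declared fields a proposed payload breaks."""
    report: dict[str, list[str]] = {}
    for consumer, required in CONSUMER_CONTRACTS.items():
        problems = []
        for name, expected_type in required.items():
            if name not in payload:
                problems.append(f"missing {name}")
            elif not isinstance(payload[name], expected_type):
                problems.append(f"{name} is not {expected_type.__name__}")
        if problems:
            report[consumer] = problems
    return report
```

If the owning team renames `plan` to `tier`, `contract_violations({"account_id": "a-1", "tier": "pro", "seats": 5})` names both affected consumers before the change ships. That blast radius would otherwise surface weeks later as data quality issues.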

7. Delivery pressure rewards short paths, even when they create a long-term coordination tax

The deepest cause of hidden coupling is not architecture. It is incentives. Teams under delivery pressure choose the shortest path to shipping, and the shortest path often borrows another team’s system, model, or workflow instead of creating a clean boundary. That choice is rational in the moment. It only becomes expensive later, when every roadmap item now requires cross-team sequencing and every incident reveals a dependency nobody budgeted for.

This is why hidden coupling survives even in organizations full of experienced engineers. People see the tradeoff. They just do not always pay the cost themselves or within the same quarter. A shared queue avoids duplicate ingestion logic. A direct database read skips an API backlog. A synchronous call removes the need for eventual consistency design. Each decision buys speed now and coordination debt later. Mature teams do not eliminate all coupling. That is unrealistic. They make it explicit, observable, and governable. The real discipline is not purity. It is refusing to smuggle system dependencies into places where nobody has agreed to own them.
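Making coupling explicit and observable can start very small. Teams declare the dependencies they agree to own, then compare that registry against the edges actually seen at runtime. The sketch below assumes the observed edges come from tracing data as `(caller, callee, mode)` tuples. The registry format, service names, and team names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    caller: str
    callee: str
    mode: str   # "sync" or "async"
    owner: str  # team accountable for this edge


# Hypothetical registry of dependencies teams have explicitly agreed to own.
DECLARED = {
    Dependency("checkout", "payments", "sync", "payments-team"),
    Dependency("checkout", "recommendations", "async", "growth-team"),
}


def audit(observed: set[tuple[str, str, str]]) -> dict[str, set[tuple[str, str, str]]]:
    """Compare runtime edges (e.g. derived from traces) with declared ones."""
    declared_modes = {(d.caller, d.callee): d.mode for d in DECLARED}
    # Edges nobody declared, such as a direct database read.
    undeclared = {e for e in observed if (e[0], e[1]) not in declared_modes}
    # Declared edges whose interaction style has drifted, e.g. async became sync.
    mode_drift = {
        e for e in observed
        if (e[0], e[1]) in declared_modes and declared_modes[(e[0], e[1])] != e[2]
    }
    return {"undeclared": undeclared, "mode_drift": mode_drift}
```

Feeding in a trace-derived edge such as `("checkout", "inventory-db", "sync")` flags it as undeclared. A synchronous call to `recommendations` shows up as mode drift. Both are the kind of dependency that would otherwise surface first in an incident timeline.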

Hidden coupling rarely arrives through one bad architectural choice. It accumulates through useful shortcuts, ambiguous ownership, and incentives that reward local progress over system clarity. The fix is not to chase perfect autonomy. It is to make dependencies legible, test them under real conditions, and treat cross-team coordination cost as a first-class engineering signal. Once you can see the coupling clearly, you can decide which parts are worth keeping and which are quietly slowing the whole organization down.

Rashan is a seasoned technology journalist and visionary leader serving as the Editor-in-Chief of DevX.com, a leading online publication focused on software development, programming languages, and emerging technologies. With deep expertise in the tech industry and a passion for empowering developers, Rashan has transformed DevX.com into a vibrant hub of knowledge and innovation.