I’ve been watching the evolution of computer chips for years, and what’s happening now feels like a genuine turning point. For half a century, our digital world has relied on traditional chips that compute in perfect rhythm, following a central clock. But our brains work completely differently—chaotic, unpredictable, and remarkably efficient.

Now, a tiny Dutch company called Innatera has created something extraordinary: the Pulsar chip. At just 3 mm wide, small enough to balance on your fingertip, this neuromorphic processor mimics how our brains function. The claimed results are stunning: according to Innatera, it is about 100 times faster than conventional chips while using 500 times less energy.

The contrast between our current technology and our own biology is stark. Consider this: NVIDIA’s latest GPU consumes roughly 1,000 watts of power. Your brain? Just 20 watts, less than a dim light bulb, yet by some estimates it rivals the computing power of Apple’s latest chip with its 28 billion transistors.

Or think about an owl, whose brain runs on less than 1 watt. That tiny power budget allows it to fly silently, spot a mouse in near-darkness, calculate the perfect attack angle, and catch it, all in real time. Trying to replicate this with traditional computing would require multiple chips, numerous sensors, and hundreds of watts, and it would still likely fail.

How Brain-Like Computing Works

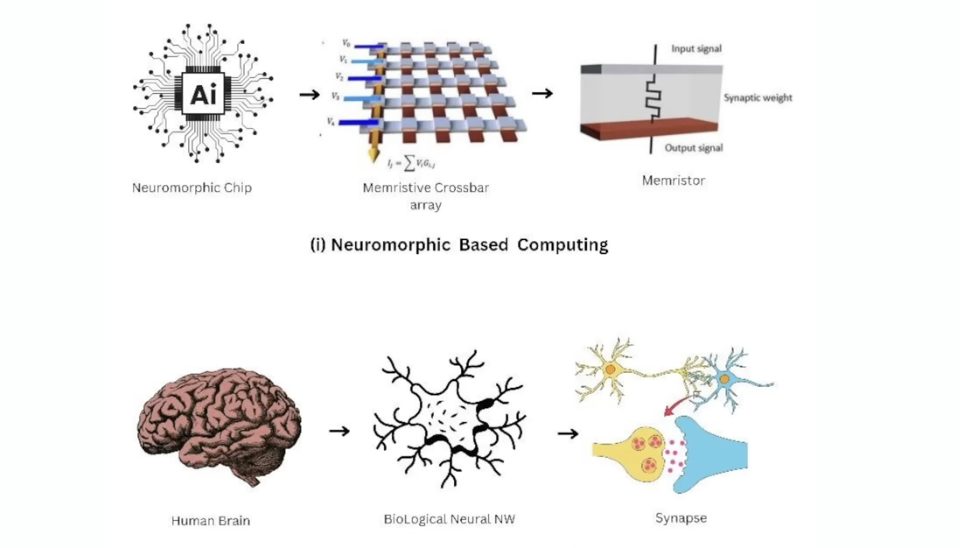

What makes neuromorphic computing so revolutionary is how it fundamentally reimagines chip architecture. Traditional CPUs rely on billions of transistors switching on and off in perfect synchronization, governed by that power-hungry central clock. The Apple chip, for instance, runs at up to 4 GHz, meaning its clock ticks up to 4 billion times per second.

Our brains prove we don’t need this rigid approach. The 86 billion neurons in your head fire only about 40 times per second and don’t wait for central instructions. Each neuron simply responds to electrical spikes from neighboring neurons. It’s chaotic up close but creates something remarkable when viewed as a whole.
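To make the idea of a neuron that "simply responds to spikes" concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, a textbook simplification often used in spiking models. It is not Innatera's design: the function name `simulate_lif` and the `weight`, `leak`, and `threshold` values are assumptions I picked purely for illustration.

```python
def simulate_lif(input_spikes, weight=0.6, leak=0.9, threshold=1.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    input_spikes: list of 0/1 values, one per time step (1 = a neighbor fired).
    Returns a list of 0/1 values marking the steps where this neuron fired.
    All parameter values are illustrative assumptions, not measured data.
    """
    potential = 0.0
    output = []
    for spike in input_spikes:
        # The stored potential leaks away a little, then any incoming spike adds to it.
        potential = potential * leak + weight * spike
        if potential >= threshold:
            output.append(1)   # fire a spike of our own
            potential = 0.0    # and reset
        else:
            output.append(0)   # stay silent
    return output


inputs = [1, 1, 0, 0, 0, 1, 0, 1, 1, 0]
print(simulate_lif(inputs))  # [0, 1, 0, 0, 0, 0, 0, 1, 0, 0]
```

Notice that the neuron never consults a clock to decide what to do. It fires only when enough input arrives close together in time, and a lone spike simply fades away.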

The Pulsar chip achieves this brain-like function through two different “brains” working together:

- An analog, spiking component with 1,000 neurons that mimics brain behavior

- A digital component built to run Convolutional Neural Networks for pattern recognition

While 1,000 neurons is tiny compared to our 86 billion, it’s enough to process data from sensors like cameras. The chip uses Spiking Neural Networks (SNNs) that stay quiet most of the time and only react when events happen—that’s where the energy savings come from.
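A rough way to see where those savings come from is to count how much work each style of processing does on the same sensor signal. The sketch below is a toy comparison under my own assumptions (the signal, the change threshold of 5, and both function names are hypothetical); it says nothing about Pulsar's actual numbers.

```python
def clocked_updates(samples):
    """A clocked design does work on every sample, whether or not anything changed."""
    return len(samples)


def event_driven_updates(samples, threshold=5):
    """An event-driven design only does work when the input changes noticeably."""
    updates = 0
    last = samples[0]
    for value in samples[1:]:
        if abs(value - last) >= threshold:
            updates += 1   # something happened: react to it
            last = value
    return updates


# A mostly quiet signal with one short burst of activity.
signal = [20] * 50 + [80] * 5 + [20] * 45

print(clocked_updates(signal))       # 100
print(event_driven_updates(signal))  # 2
```

On this made-up signal the event-driven version reacts twice, once when the burst starts and once when it ends, while the clocked version grinds through all 100 samples. The quieter the real-world input, the bigger that gap becomes.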

Real-World Applications

The implications are profound. Imagine phones that don’t need charging for weeks or laptops lasting a full week on one charge. Picture AI running almost anywhere without draining power.

Innatera aims to integrate its chip into billions of devices and sensors, enabling cameras, microphones, and other devices to see, hear, and think like our brains, all while processing data locally without sending anything to the cloud.

The timing couldn’t be better. We’re entering an era where sensors will be everywhere—in buildings, factories, robots, and even clothing. To make sense of all this data, we need tiny, efficient chips exactly like Pulsar.

Challenges Ahead

Despite its promise, neuromorphic computing faces significant hurdles. Scaling presents a major challenge: while 1,000 neurons is impressive, it’s nowhere near what’s needed for true cognition. As you add more neurons, “parasitics” (unwanted electrical effects) become more problematic, making larger networks less efficient and less precise.

The market dynamics also present challenges. The Pulsar chip resembles a microcontroller with added brain-like features, but the microcontroller market is highly competitive and cost-sensitive. Even the smartest chip can fail if no one is willing to pay for it.

Software remains another obstacle. Programming neuromorphic chips requires specialized skills, and unlike traditional computing with decades of software development behind it, these brain-like chips are starting almost from scratch.

In the near term, these chips won’t replace high-performance computing or compete with NVIDIA GPUs. Instead, they’ll excel at specific tasks like object detection or event recognition.

The world needs faster, greener chips, and nothing inspires this future more than the human brain itself. Whether the Pulsar chip succeeds commercially or not, it represents an important step toward computing that works more like nature’s most sophisticated creation—our own minds.

Frequently Asked Questions

Q: What makes neuromorphic computing different from traditional computing?

Neuromorphic computing mimics how the brain works: it operates without a central clock, processes information through spikes and events rather than on every tick of a clock, and combines memory and computation in the same place. This approach allows for much greater energy efficiency while handling complex tasks.

Q: How much more efficient is the Pulsar chip compared to conventional chips?

According to Innatera, the Pulsar chip is approximately 100 times faster than conventional chips while consuming 500 times less energy. This dramatic efficiency gain comes from its brain-inspired architecture that only activates when needed rather than constantly running calculations.

Q: Can these brain-like chips replace GPUs for AI applications?

In the near term, neuromorphic chips like Pulsar won’t replace high-performance GPUs for training large AI models. Instead, they’re better suited for edge computing applications where energy efficiency is critical—such as in sensors, IoT devices, and robotics that need to process data locally without sending it to the cloud.

Q: What are the main obstacles to widespread adoption of neuromorphic computing?

The main challenges include scaling limitations due to electrical parasitics when adding more neurons, market competition from established microcontroller manufacturers, and software development hurdles. Programming these chips requires specialized knowledge, and the ecosystem of tools and frameworks is still in its early stages.

Q: Where might we see these chips first appear in everyday life?

The first applications will likely be in smart sensors, IoT devices, and robotics where power efficiency is crucial. Think of security cameras that can detect specific events without constant recording, hearing aids that can filter specific sounds, or wearable health monitors that can analyze data without draining batteries.