The rapid advancement of artificial intelligence (AI) is transforming the landscape of data centers, with global spending projected to surpass $1 trillion by 2029. As AI workloads become more prevalent, data centers are increasing their investments in servers, power, and cooling infrastructure to meet the growing demand. According to Dell’Oro analyst Baron Fung, enterprises allocate about 35% of their data center capital expenditure (CapEx) budgets to accelerated servers, a significant increase from 15% in 2023.

This proportion is expected to reach 41% by 2029. Hyperscalers such as Amazon, Google, Meta, and Microsoft are investing even more heavily in AI infrastructure, with accelerated servers accounting for 40% of their budgets. These servers can cost between $100,000 and $200,000, especially when equipped with the latest Nvidia GPUs.

As a result, these tech giants are expected to account for nearly half of the global data center CapEx this year. Fung predicts that most AI workloads will initially be hosted in the public cloud due to the high cost and potentially low utilization of AI infrastructure in private data centers. However, as enterprises better understand AI workload utilization, they may opt to bring some workloads back on-premises.

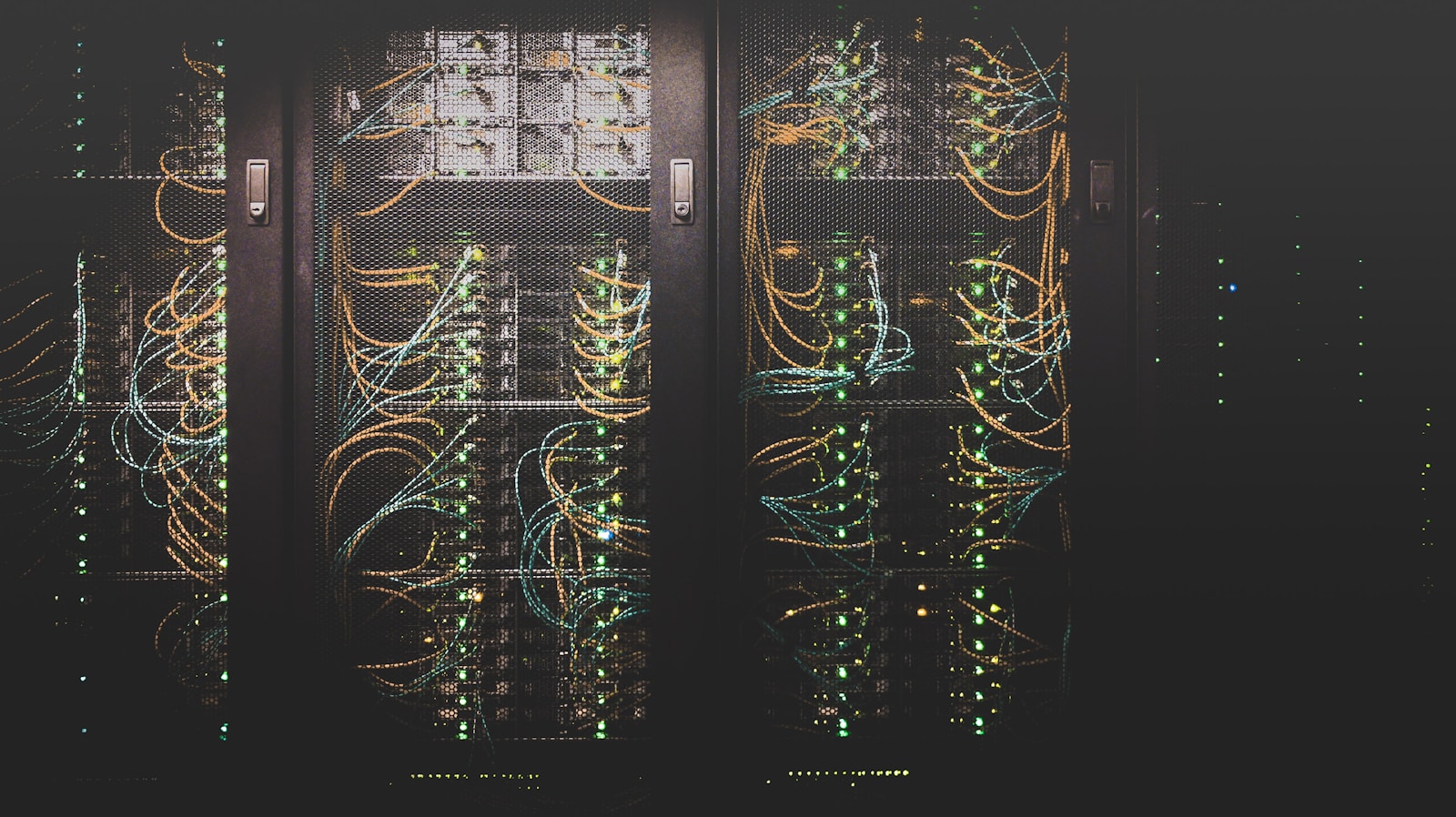

Deploying dedicated AI servers significantly impacts networking, power, and cooling infrastructure. Spending on data center physical infrastructure (DCPI) is projected to grow at a more moderate pace of 14% annually, reaching $61 billion by 2029.

AI workloads transform data center infrastructure

DCPI deployments are considered essential for supporting AI workloads. AI workloads are particularly power-intensive, requiring 60 to 120 kilowatts per rack, compared to the current average of 15 kilowatts per rack. According to McKinsey, average power densities in data centers have more than doubled in the past two years and are expected to reach 30 kilowatts per rack by 2027.

Data center operators are increasingly adopting liquid cooling to address the limitations of air-cooling systems, which have an upper limit of effectiveness of around 50 kilowatts per rack. An industry report released in September revealed that half of the organizations with high-density racks now use liquid cooling as their primary cooling method. Overall, 22% of data centers use liquid cooling, with another 61% considering its implementation.
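To make the rack-density figures above concrete, here is a minimal Python sketch that checks a rack's power draw against the roughly 50-kilowatt air-cooling ceiling cited in this article. The function name, the dictionary labels, and the simple two-way air/liquid split are illustrative assumptions, not an industry sizing method; real cooling decisions depend on many more factors.

```python
# Approximate upper limit of air cooling per rack, as cited in the article.
AIR_COOLING_LIMIT_KW = 50


def cooling_for_rack(rack_kw: float) -> str:
    """Return 'air' if the rack fits under the cited air-cooling ceiling, else 'liquid'.

    Hypothetical helper for illustration only.
    """
    return "air" if rack_kw <= AIR_COOLING_LIMIT_KW else "liquid"


# Rack densities quoted in the article (kilowatts per rack); labels are ours.
racks_kw = {
    "current average": 15,
    "AI rack (low end)": 60,
    "AI rack (high end)": 120,
}

choices = {name: cooling_for_rack(kw) for name, kw in racks_kw.items()}

for name, kw in racks_kw.items():
    print(f"{name}: {kw} kW per rack -> {choices[name]} cooling")
```

Under these assumptions, both ends of the 60-to-120-kilowatt AI range fall above the air-cooling ceiling, while today's 15-kilowatt average sits well below it.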

Among large data centers, 38% are already using direct liquid cooling. Lucas Beran, director of product marketing at Accelsius, a liquid cooling company, states, “This year, liquid cooling is going to be all about scaling. We understand what we want to do and how to do it well, and we will start deploying the infrastructure at scale. Confidence will grow in these liquid cooling deployments, which will help accelerate industry adoption.”

As AI drives unprecedented changes in data centers, the industry must adapt its infrastructure to support the growing demands for power, cooling, and networking. The adoption of liquid cooling and the development of more efficient AI models and custom chips will play a crucial role in enabling data centers to keep pace with the rapid evolution of AI technology.

Image Credits: Photo by Taylor Vick on Unsplash

Rashan is a seasoned technology journalist and visionary leader serving as the Editor-in-Chief of DevX.com, a leading online publication focused on software development, programming languages, and emerging technologies. With deep expertise in the tech industry and a passion for empowering developers, Rashan has transformed DevX.com into a vibrant hub of knowledge and innovation. Reach out to Rashan at [email protected]