Evaluating Large Language Models (LLMs) is critical for developing dependable and efficient AI technologies. As these models progress, we must assure their accuracy, fairness, and security. Without appropriate LLM assessment tools, we risk developing systems that disseminate disinformation, encourage prejudices, or fail in real-world scenarios.

When we discuss assessment tools, we are referring to technologies that can:

- Measure accuracy to verify that the model generates consistent results.

- Detect biases to avoid unfair or damaging outcomes.

- Analyze performance across several tasks and datasets.

This essay will look at the leading tools for LLM assessment in 2025.

Why is LLM Evaluation Important?

When working with AI language models, we must evaluate their performance.

I prefer to think of it as quality control, similar to how you would test a car before driving.

We need to ensure that these models are performing as expected.

Here are some practical reasons why LLMs matter:

- You don’t want an AI offering incorrect responses while assisting doctors in making medical judgments.

- We need to examine if the model is accurate and not make up misleading facts that may mislead users.

- It identifies potential biases, such as favoring some groups over others.

- Before utilizing AI for critical jobs, it’s important to ensure it understands complicated instructions.

By testing LLMs properly, we can trust them more and use them better in our work.

Criteria for Evaluating LLM Evaluation Tools

When selecting the correct tool to analyze Large Language Models (LLMs), we must consider a few essential elements. These factors help us determine how effectively a tool satisfies our requirements and produces consistent outcomes.

Here’s what you should look for:

- Accuracy and Performance: Does the tool measure how well the model generates correct and useful outputs?

- Bias Detection: Can it identify unfair patterns or discriminatory results in the model’s responses?

- Scalability: Will it work smoothly with large datasets and complex models without slowing down?

These factors guide us in selecting tools that truly deliver value.

Our Top 5 Picks for the Best LLM Evaluation Tools

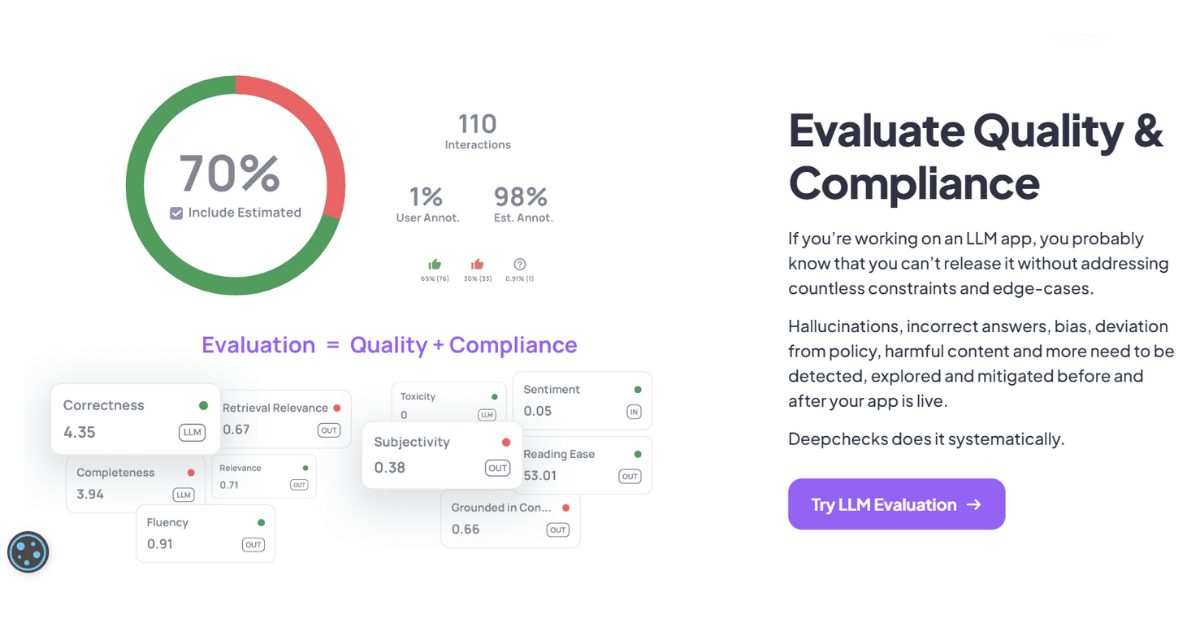

1. DeepChecks

If you want to check how well your AI language models are performing, DeepChecks can help. While it may take some work to set up, it provides clear visuals of how your model is performing.

Here’s what you can do with it:

- You may detect issues early by observing when your data appears strange.

- You can watch if your customer service chatbot stays helpful over time

- You may examine whether your model treats everyone equitably.

- You can identify problems as they arise, rather than after they have occurred.

The tool focuses on testing the models themselves, making it different from other options.

2. TruLens

If you want to know why your AI model makes particular decisions, TruLens might be the answer. I like how it tells you how your model thinks rather than just what it outputs.

Here’s what you can do with it.

- You may get graphic breakdowns of how your model made its judgments.

- You may assess if your model is unfair to specific groups.

- You may enhance how you communicate with your model by addressing confusing instructions.

- You can acquire trust scores that show how dependable your results are.

This tool is handy if we’re working in fields like healthcare or finance, where we need to explain AI decisions.

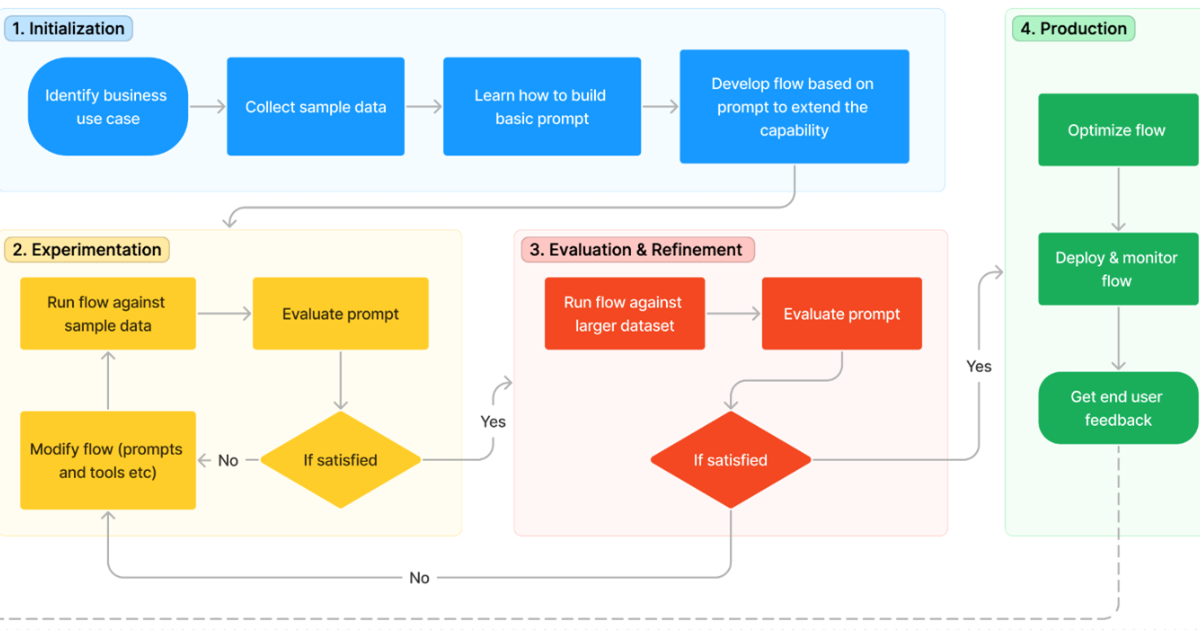

3. Prompt Flow

If you’re working with AI models and want to try out new ways of communicating with them, Microsoft’s PromptFlow can assist. I enjoy how it displays a visual map of your AI talks, which makes it easy to comprehend what’s going.

Here’s what you can do with it.

- You can create and test various conversation flows without writing sophisticated code.

- You can monitor the effectiveness of your various tactics over time.

- You can manage intricate back-and-forth talks with your AI.

- You can rapidly scale up when your project develops larger.

It performs very well if you currently utilize Microsoft Azure services for your applications.

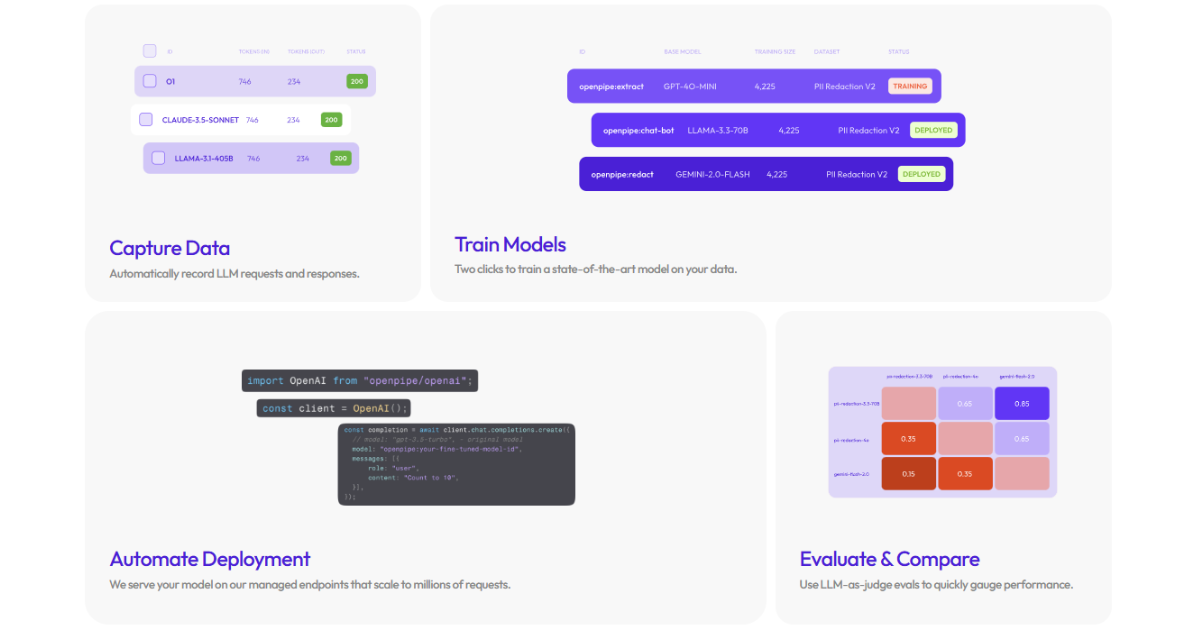

4. OpenPipe

If you’re developing AI apps and want to try alternative approaches to obtain better results, OpenPipe can assist. It is useful since it allows you to examine various ways side by side to see which works best.

Here’s what you can do with it.

- You may test alternative ways of asking your AI model questions and discover which one yields better results.

- You can see how well your AI is functioning with simple graphs.

- You can see if your AI responds quickly enough to your needs.

- You can track expenditures to ensure you don’t overspend.

It’s useful if you’re developing AI technologies that need to communicate via APIs.

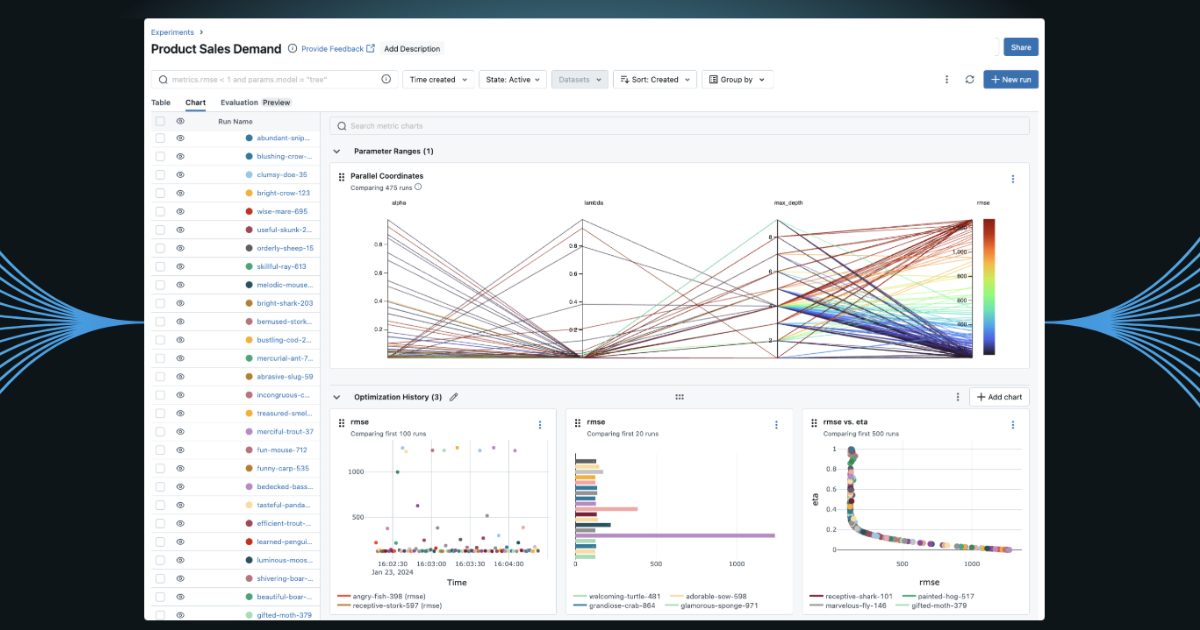

5. MLFlow

If you want a straightforward approach to test your AI models, MLFlow is simple and adaptable. Conveniently, it may be used with several forms of AI, not simply language models.

Here’s what you can do with it.

- You can see how your model develops over time as you make modifications.

- You may compare different variations of your model and determine which works best.

- You may test how well your AI responds to queries and discovers information.

- You can track all of your experiments in one spot.

It’s beneficial if you’re dealing with many AI models and want to keep everything organized.

Final Thoughts

Looking at the top LLM evaluation tools for 2025, one thing is clear: the industry is transitioning to more extensive testing methodologies.

Each tool offers something unique: DeepChecks specializes in early problem discovery, TruLens focuses on choice transparency, and Prompt Flow makes conversation testing easier.

What matters most is selecting a tool that meets your individual requirements. Whether you require bias detection, performance monitoring, or API optimization, a solution is now accessible.

As AI continues to influence key industries such as healthcare and finance, these assessment techniques are no longer optional; they are required for the development of trustworthy AI systems.

Featured Image Source: Pexels

Rashan is a seasoned technology journalist and visionary leader serving as the Editor-in-Chief of DevX.com, a leading online publication focused on software development, programming languages, and emerging technologies. With his deep expertise in the tech industry and her passion for empowering developers, Rashan has transformed DevX.com into a vibrant hub of knowledge and innovation. Reach out to Rashan at [email protected]