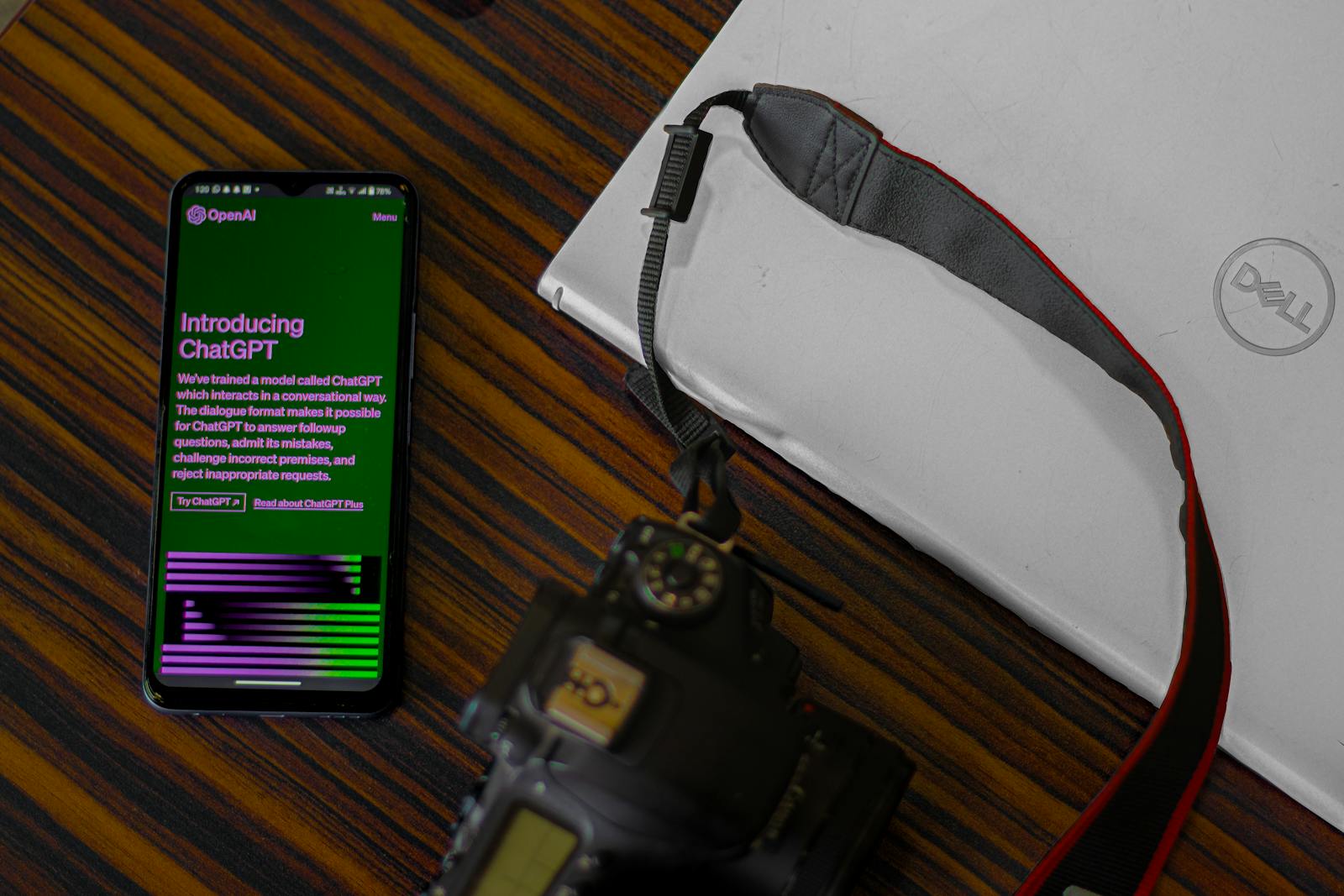

The rise of AI-generated content has sparked debate across industries. Tools like ChatGPT and Midjourney have transformed how people create text and images, but their mass adoption has also led to a flood of low-quality, automated material on the internet. This “slop” is a problem for online spaces, where content quality shapes user trust and engagement.

AI-generated slop refers to low-effort content produced with AI that lacks depth, originality, or context. Examples include spammy blog posts, art with mismatched details, and social media posts with nonsensical visuals. The term underscores the digital clutter this content creates.

Several factors fuel the spread of slop. Generative AI tools are easy to use and available to anyone. Financial incentives also play a role, since creators can earn money through monetized websites and social media engagement. In addition, platforms and search engines reward engagement, which can favor eye-catching AI visuals or provocative AI-written posts.

The impact of AI-generated slop is significant. It erodes trust, as audiences may lose faith in platforms that fail to ensure quality. Inaccurate AI content can amplify misinformation and create confusion on critical issues. Human creators face unfair competition from mass-produced AI content, which can contribute to burnout, and the user experience declines as low-value content clutters search results.

Addressing AI-generated content slop

Solving this problem requires enhanced moderation tools, regulatory frameworks, user education, and ethical AI practices. Platforms need advanced AI detection systems, while governments can mandate transparency and penalize unchecked AI spam. Teaching users to recognize AI content empowers them to make informed decisions.

Real-world examples highlight the impact of AI slop. In 2024, a major blogging platform reported that 25% of new articles were AI-generated, leading to stricter moderation. An AI-generated “Shrimp Jesus” image went viral, showing how absurd AI visuals can gain widespread attention.

To identify AI content, look for repetitive phrases, unnatural language, inconsistent visuals, and a lack of depth, keeping in mind that no single sign is conclusive on its own. Not all AI content is bad; the problem is misuse and over-reliance on automation without human oversight. Businesses that rely solely on AI risk losing credibility if their material lacks quality.

Ensuring that AI enriches the digital world requires responsible actions from platforms, creators, and users alike. The future of the internet depends on addressing the rise of AI-generated slop.

April Isaacs is a news contributor for DevX.com. She is a longtime, self-proclaimed nerd who loves all things tech and computers. She still has her first Dreamcast system, which is lovingly named Joni, after Joni Mitchell.