You have probably seen codebases of the same vintage described with two very different labels. One team calls its system “legacy” and whispers about rewrites. Another calls something just as old “battle-tested” and treats it like a strategic asset. The age is the same, and the technology stack is similar. What differs is how the system evolved, how it is operated, and how much organizational scar tissue is embedded in it. This distinction matters, because you cannot lead at scale if every old system is automatically treated as a problem to escape rather than an asset to invest in.

The real gap between legacy code and battle-tested systems is not years, language choice, or how many of the original authors are still around. It is whether the system has grown through incidents, new requirements, and reorganizations while keeping its feedback loops, safety margins, and domain knowledge intact.

1. Legacy is trapped in its origin story; battle-tested keeps absorbing new realities

Legacy code usually still thinks it lives in the company that existed when it was written. You see hard-coded assumptions about traffic patterns, feature flags that became permanent logic, and “temporary” data models from a 2015 product experiment that quietly turned into the core revenue stream. The architecture may have been sound for that origin story, but the world around it changed and the code never caught up.

A battle-tested system may be just as old, but its mental model has been updated repeatedly. Interfaces were refactored when the business model shifted, read paths were optimized when latency budgets tightened, and failure handling changed as dependencies moved to the cloud. The code tells the story of the current business, not the launch-era pitch deck. The practical test is simple: when new requirements arrive, does the system feel like it is swimming with the current or against it?

2. Legacy hides intent; battle-tested systems encode domain decisions

If you cannot answer “why is it like this” without calling three alumni, you are dealing with legacy. Intent is buried in Slack screenshots, half-migrated wiki spaces, and one surviving design doc that covers 20 percent of the behavior. You can reverse engineer the “what” of the system, but understanding the “why” feels like archeology. That is where teams start treating code as untouchable and introduce new systems around it rather than through it.

Battle-tested systems may be old, but you can still discover intent in the artifacts. The domain model is cohesive enough that a new engineer can infer invariants from types and boundaries. Critical flows are mapped in runbooks. Architectural decisions are recorded in code comments, ADRs (architecture decision records), or schema evolution history. When a payments team I worked with formalized their anti-fraud rules as explicit policy objects instead of scattered conditionals, incident debugging shifted from “guess the logic” to “inspect the policies”. The code stayed old, but the intent became visible again.
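To make the policy-object idea concrete, here is a minimal Python sketch. The names (`Transaction`, `MaxAmountPolicy`, `VelocityPolicy`, `review`) and the thresholds are hypothetical illustrations, not the payments team’s actual code: each rule is a small object with a name and an `evaluate` method, so every rejection records which policy fired and why.

```python
from dataclasses import dataclass
from typing import List, Optional, Protocol


@dataclass(frozen=True)
class Transaction:
    """Hypothetical payment record, trimmed to the fields these rules read."""
    amount_cents: int
    attempts_last_hour: int


@dataclass(frozen=True)
class Decision:
    policy: str  # which rule fired, so responders can inspect it directly
    reason: str


class FraudPolicy(Protocol):
    """Any object with a name and an evaluate() method counts as a policy."""

    @property
    def name(self) -> str: ...

    def evaluate(self, tx: Transaction) -> Optional[Decision]: ...


@dataclass(frozen=True)
class MaxAmountPolicy:
    limit_cents: int
    name: str = "max_amount"

    def evaluate(self, tx: Transaction) -> Optional[Decision]:
        if tx.amount_cents > self.limit_cents:
            return Decision(self.name, f"amount {tx.amount_cents} exceeds {self.limit_cents}")
        return None


@dataclass(frozen=True)
class VelocityPolicy:
    max_attempts: int
    name: str = "velocity"

    def evaluate(self, tx: Transaction) -> Optional[Decision]:
        if tx.attempts_last_hour > self.max_attempts:
            return Decision(self.name, f"{tx.attempts_last_hour} attempts in the last hour")
        return None


def review(tx: Transaction, policies: List[FraudPolicy]) -> List[Decision]:
    """Evaluate every policy and return all rejections, not just the first."""
    return [d for d in (p.evaluate(tx) for p in policies) if d is not None]
```

Because each rule is a named object, a responder can list the active policies and the decisions they produced instead of tracing nested conditionals, and adding or retiring a rule means changing the policy list rather than editing a branch buried in a handler.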

3. Legacy resists change; battle-tested is designed for safe evolution

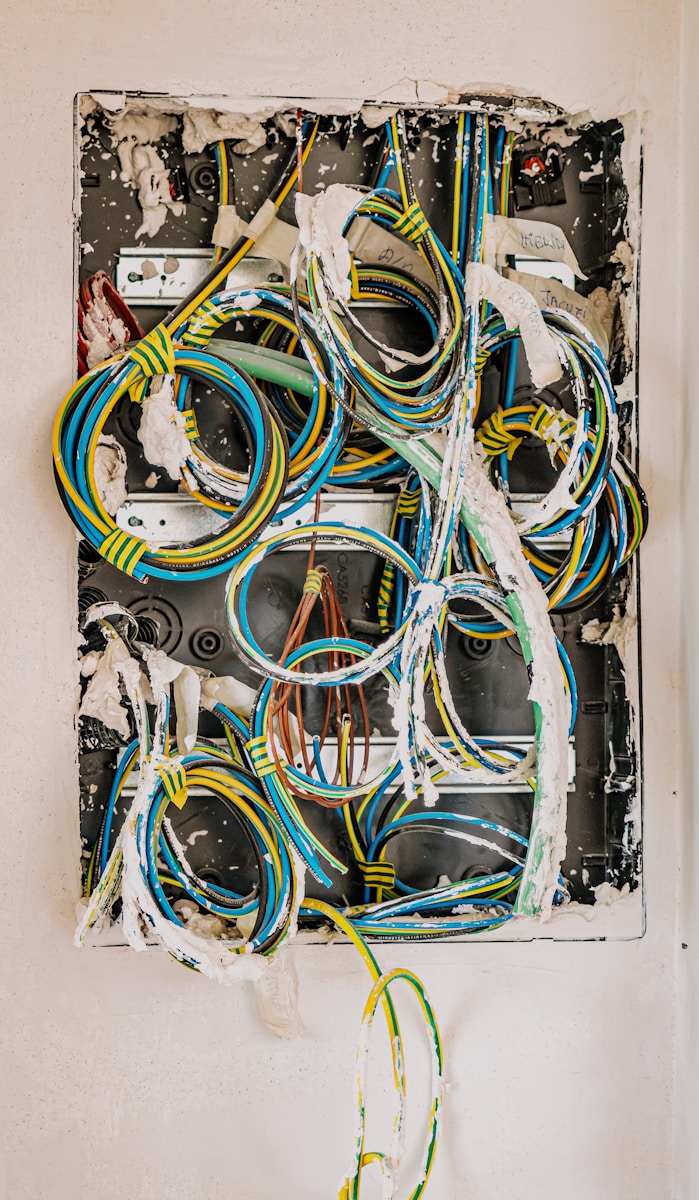

In legacy systems, even trivial changes feel like surgery. There are no seams for injecting behavior. Types are anemic, side effects are everywhere, and every test run feels like a spin of the roulette wheel. The deployment story often tells you more than the code: long-lived branches, manual checklists, “change windows”, and a culture of change avoidance. The system has effectively become hostile to change, which is exactly what you do not want for something critical.

Battle-tested systems still have sharp edges, but they are built to accommodate change under fire. You see patterns like:

- Small, composable services around a stable core
- Feature flags that are first class, not bolted on
- Contract testing between critical producers and consumers
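Contract testing can be as simple as a consumer publishing the fields it depends on and the producer checking its responses against them in CI. The sketch below assumes a hypothetical order service and billing consumer; `ORDER_CONTRACT` and `verify_contract` are illustrative names, and dedicated tools such as Pact formalize the same idea with versioned, shared contracts.

```python
from typing import Any, Dict, List

# Hypothetical consumer contract: the fields (and types) the billing consumer
# actually reads from the order service. Extra fields in the response are allowed.
ORDER_CONTRACT: Dict[str, type] = {
    "order_id": str,
    "total_cents": int,
    "currency": str,
}


def verify_contract(response: Dict[str, Any], contract: Dict[str, type]) -> List[str]:
    """Return contract violations; an empty list means the producer is compatible."""
    violations: List[str] = []
    for field, expected_type in contract.items():
        if field not in response:
            violations.append(f"missing field: {field}")
            continue
        value = response[field]
        # bool is a subclass of int in Python, so reject it explicitly for non-bool fields.
        wrong_bool = isinstance(value, bool) and expected_type is not bool
        if wrong_bool or not isinstance(value, expected_type):
            violations.append(
                f"{field}: expected {expected_type.__name__}, got {type(value).__name__}"
            )
    return violations
```

Run in the producer’s CI against every published consumer contract, a check like this turns a breaking rename or type change into a failed build instead of a production incident.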

When one high-traffic SaaS platform split its monolith into a “change-friendly” edge layer and a “change-conservative” core, it cut deploy lead time from a week to an hour for 80 percent of changes, while keeping the core database schema relatively stable. The architecture made change safety explicit instead of accidental.

4. Legacy depends on heroes; battle-tested depends on observable signals

If the primary mitigation for incidents is “page the one person who knows the system”, you are in legacy territory. Key knowledge lives in people’s heads, log formats are an oral tradition, and every outage review ends with “we should improve observability” but no one owns the follow-up. Mean time to recovery is dominated by figuring out what is actually happening rather than by applying a fix.

In battle-tested systems, humans still matter, but they are informed by instrumentation, not superstition. You see structured logs with consistent correlation IDs, metrics tagged by tenant and feature, and traces across service boundaries. When a team at a fintech company rolled out distributed tracing with OpenTelemetry and integrated it into their on-call flow, their MTTR on payment routing incidents dropped from about 2 hours to under 15 minutes. Nothing magically removed the bugs, but the system stopped relying on tribal knowledge to explain its own behavior.
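A minimal sketch of structured logs with correlation IDs, using only the Python standard library, follows. It assumes a hypothetical `handle_request` entry point and illustrative `tenant` and `feature` fields; in a real service the incoming ID would come from a request header or from trace context propagation (for example via OpenTelemetry) rather than being passed in by hand.

```python
import contextvars
import json
import logging
import uuid
from typing import Optional

# Correlation ID for the request currently being handled ("-" when none is set).
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object tagged with the correlation ID."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            "correlation_id": correlation_id.get(),
        }
        # Structured fields passed as extra={"fields": {...}} become top-level keys.
        payload.update(getattr(record, "fields", {}))
        return json.dumps(payload)


def handle_request(logger: logging.Logger, tenant: str,
                   incoming_id: Optional[str] = None) -> str:
    """Hypothetical request handler: bind a correlation ID, log, then unbind it."""
    # Reuse the upstream ID when one arrives; otherwise mint a fresh one.
    cid = incoming_id or str(uuid.uuid4())
    token = correlation_id.set(cid)
    try:
        logger.info("routing payment",
                    extra={"fields": {"tenant": tenant, "feature": "payment_routing"}})
    finally:
        # Restore the previous value so the ID never leaks into unrelated work.
        correlation_id.reset(token)
    return cid


if __name__ == "__main__":
    handler = logging.StreamHandler()
    handler.setFormatter(JsonFormatter())
    log = logging.getLogger("payments")
    log.addHandler(handler)
    log.setLevel(logging.INFO)
    handle_request(log, tenant="acme")
```

Using a context variable rather than a global keeps concurrent requests (threads or asyncio tasks) from overwriting each other’s IDs, and every line the handler emits can be joined to the matching trace and metrics by that one key.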

5. Legacy couples tech and org charts; battle-tested acknowledges Conway and works with it

Legacy systems often encode yesterday’s organization. Services are split along historical team boundaries instead of real domains. Abandoned modules still reflect old product lines. Ownership is unclear because the org has reorganized three times while the code kept its original shape. Every cross-cutting change becomes a political problem: you need alignment from teams that have no stake in the outcome, so you get half-finished migrations and proxy layers.

Battle-tested systems are explicit about Conway’s Law. The architecture has been refactored at least once to align with the current org, or the org has been nudged to line up with stable domain boundaries. Ownership is visible in CODEOWNERS files, alert routing, and dashboards. When Shopify “componentized” its monolith into modules with clear domain boundaries instead of breaking it into a thousand microservices, it did so alongside changes in team structure rather than treating architecture and organization as separate conversations. The result was a system that could evolve without every change requiring executive arbitration.

6. Legacy optimizes for shipping once; battle-tested optimizes for operating forever

Legacy code often carries the marks of one big push. The primary goal was shipping the first version on time, with reliability, debuggability, and operability as afterthoughts. You see hand-rolled migration scripts that no one rehearsed, cron jobs whose failures no one monitors, and a runbook that still references an old staging environment. These systems eventually “work” in production, but only because humans build elaborate habits around their quirks.

Battle-tested systems treat “operate” as a first-class verb. You see circuit breakers on remote calls, request budgets embedded in config, and staged rollouts that assume partial failure. A simple table shows the contrast:

| Aspect | Legacy focus | Battle-tested focus |
|---|---|---|
| Release criteria | “Feature works” | “System behaves under load” |
| Monitoring | Basic host metrics | SLOs on user-centric outcomes |
| Migrations | One-off scripts | Idempotent, audited workflows |
| Rollouts | Big bang | Gradual, with automated rollback |

Teams that internalize this shift start asking “how will this fail in 18 months” in design reviews, not only “will this demo on Friday”.
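The circuit breakers on remote calls mentioned in this section can be sketched in a few dozen lines. This is a minimal, single-threaded Python illustration with hypothetical names (`CircuitBreaker`, `CircuitOpenError`) and assumed defaults for the failure threshold and reset timeout; production libraries add thread safety, metrics, and limits on how much trial traffic passes while half-open.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised instead of calling the remote dependency while the breaker is open."""


class CircuitBreaker:
    """Minimal closed -> open -> half-open breaker around a remote call (not thread-safe)."""

    def __init__(self, failure_threshold: int = 5, reset_timeout_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout_s:
            return "half_open"  # let a trial call through to probe recovery
        return "open"

    def call(self, fn: Callable[[], T]) -> T:
        if self.state == "open":
            raise CircuitOpenError("dependency marked unhealthy; failing fast")
        try:
            result = fn()
        except Exception:
            self.failures += 1
            # A failed trial while half-open, or too many failures while closed, (re)opens it.
            if self.state == "half_open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Any success closes the breaker and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Failing fast while the breaker is open is the point: callers get an immediate, explicit error they can degrade around instead of piling up timeouts against an unhealthy dependency.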

7. Legacy hides risk; battle-tested systems surface and constrain it

The most dangerous trait of legacy systems is not that they fail, but that they fail silently and in surprising ways. Risk is implicit. No one knows the actual downtime profile, the real recovery time, or which dependencies are single points of failure. Capacity limits are folklore. The code may be running on hardware or managed services that no one has audited recently.

Battle-tested systems still carry risk, but it is explicit. You have SLOs with targets and error budgets. You know which components are “tier 0” and which are allowed to degrade. Chaos experiments, even simple ones, reveal where the cliffs are. When Netflix publicized its chaos practices, the interesting part was not the tool but the posture: assume failure, instrument it, and treat reliability work as a product line, not a side quest. That mindset is the difference between an older system your org fears and an older system your org trusts.
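Error budgets are plain arithmetic once the SLO is written down. The sketch below assumes a time-based availability SLO over a rolling window (30 days by default, an assumed value); request-based SLOs count failed requests instead of minutes, but the budget logic is the same. The function names are hypothetical.

```python
def error_budget_minutes(slo_target: float, window_days: int = 30) -> float:
    """Minutes of full unavailability an availability SLO tolerates over the window."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be a fraction such as 0.999")
    window_minutes = window_days * 24 * 60
    return window_minutes * (1.0 - slo_target)


def budget_remaining(slo_target: float, downtime_minutes: float,
                     window_days: int = 30) -> float:
    """Fraction of the error budget still unspent (negative means the SLO is blown)."""
    budget = error_budget_minutes(slo_target, window_days)
    return (budget - downtime_minutes) / budget
```

For example, a 99.9 percent target over 30 days leaves about 43.2 minutes of downtime (43,200 minutes × 0.001), while 99.99 percent leaves about 4.3 minutes. A team might treat burning half the budget in the first week as a signal to slow releases, which turns “how risky is this?” into a number rather than folklore.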

In practice, most of your important systems are a mix. Parts are legacy, parts are battle tested, and parts are in flux. The useful move is not to label something “legacy” and plan a rewrite, but to ask which of the gaps above hurt you most and invest accordingly. Make intent visible, tighten feedback loops, align architecture with the current org, and surface risk. Age is not the enemy. Unexamined assumptions are.

Rashan is a seasoned technology journalist and visionary leader serving as the Editor-in-Chief of DevX.com, a leading online publication focused on software development, programming languages, and emerging technologies. With his deep expertise in the tech industry and his passion for empowering developers, Rashan has transformed DevX.com into a vibrant hub of knowledge and innovation. Reach out to Rashan at [email protected]