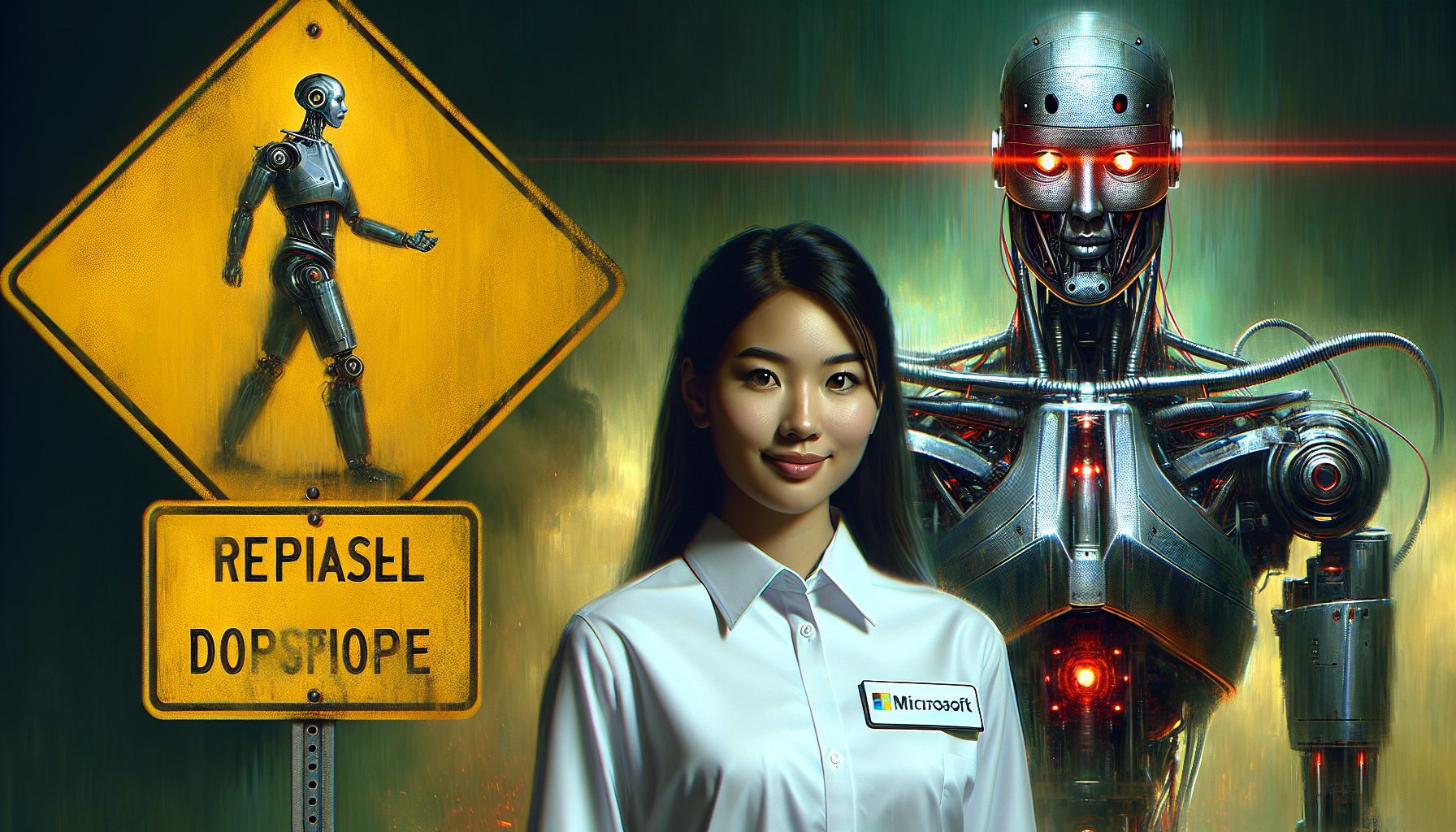

Shane Jones, a Microsoft engineer, recently issued a warning about the company’s AI tool, Copilot Designer, underlining its potential to produce inappropriate or harmful content. Although Microsoft is committed to improving the tool’s response to prompts, the current system needs substantial refinement to consistently filter inappropriate material.

Jones is concerned that Copilot Designer can misinterpret innocent prompts and generate explicit or offensively biased content. User feedback is critical here, because it helps the Microsoft team identify and correct these issues.

The engineer emphasizes the need for heightened vigilance, especially in the educational sector. His advice outlines potential dangers tied to data handling and misuse. Users are urged to exercise caution, scrutinizing their chosen digital tools to ensure they prioritize data security and privacy.

But it doesn’t stop there. Research findings underline the risks of using AI tools carelessly. Jones highlights the need for proactive online safety measures and encourages users to take seriously the concerns around Copilot Designer’s data handling procedures.

Several of the technology’s shortcomings have been identified, including inconsistent responses to prompts and inappropriate interpretations of terms. Jones provided examples of problematic results, such as inappropriate depictions generated from a “car accident” prompt and politically biased images generated from a “pro-choice” prompt.

In an attempt to protect users, Jones repeatedly appealed to Microsoft to suspend access to the AI tool until adequate safety measures were implemented. His efforts were met with indifference, however, leading him to seek support from other tech leaders to amplify his concerns.

Despite the growing anxiety among users, Jones firmly believes that his persistent appeals will eventually lead to the necessary protective measures. As a potential remedy, he suggests that Microsoft change the app’s rating to “Mature 17+” and add warnings about potential risks, a strategy used by other tech giants.

Though Microsoft markets Copilot Designer as an all-ages AI tool, Jones suspects the company may be aware of issues that could lead it to generate harmful content. A company representative responded by stressing Microsoft’s commitment to continually monitor and improve the AI’s functionality for maximum user safety.

Yet Jones remains skeptical, believing that the inherent risks associated with AI are poorly understood and poorly managed. The debate continues as the company insists on its commitment to learn, adapt, and uphold the highest standards of AI ethics.

Noah Nguyen is a multi-talented developer who brings a unique perspective to his craft. Initially a creative writing professor, he turned to development work for the ability to work remotely. He now lives in Seattle, where he spends his time hiking and drinking craft beer with his fiancée.