You are staring at dashboards. CPU is high, queues are growing, p99 is blowing your SLO, and people are asking the classic question:

“Do we need to optimize the code, or is this a database or network thing?”

Under heavy load, every production system eventually hits one of two ceilings: CPU or I/O. When a service is CPU bound, performance is limited by how quickly the processor can execute instructions. When it is I/O bound, most of the time is spent waiting on something other than the CPU, such as disk access, network calls, database queries, message brokers, or locks that control access to shared resources.

This article is about the hard part: deciding where to spend your optimization time. We looked at recent performance work from cloud vendors and system design guides, plus research on tail latency and off CPU profiling, to distill a playbook that senior engineers actually use in high load environments.

In conversations with performance engineers, a pattern shows up. Brendan Gregg, performance engineer and author of Systems Performance, has a consistent message that you should never guess at bottlenecks and should always let the data tell you where time is going. Gil Tene, CTO at Azul Systems, regularly reminds people that averages lie and that optimizations must be driven by tail latency behaviour at percentiles such as p99, not pretty looking averages.

Put together, the experts are saying: first measure, then classify the bottleneck, and only then choose CPU or I/O optimizations. The rest of this guide gives you a concrete way to do that in real systems.

The Real Question Behind CPU Versus I/O Tuning

At high load you rarely ask “Is this CPU or I/O” in the abstract. You are really asking:

- Where is the time going inside a request path, under realistic load and tail latency targets?
- Which resource will give me the biggest win per engineering hour: cores or external dependencies?

High load is where percentiles matter. If your API has a p99 of 200 milliseconds, that means 99 percent of requests complete in under 200 milliseconds and the slowest 1 percent are worse. Those slow ones are almost always where you hit hard limits, such as a saturated CPU, a database connection pool at max, or a noisy neighbour storage volume that is stalling writes.
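To make the percentile definition concrete, here is a minimal sketch that computes nearest-rank percentiles over a list of latencies. The sample data is invented for illustration, and nearest-rank is only one of several common percentile definitions; your monitoring system may interpolate differently.

```python
import math

def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]

# Hypothetical latencies in milliseconds: 98 fast requests and 2 slow ones.
latencies_ms = [40] * 98 + [900, 1200]

if __name__ == "__main__":
    mean = sum(latencies_ms) / len(latencies_ms)
    print(f"mean={mean:.1f} ms")
    for p in (50, 95, 99):
        print(f"p{p}={percentile(latencies_ms, p)} ms")
```

With this made-up data the mean and median look healthy while p99 exposes the slow requests, which is the reason to drive decisions from the tail.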

So the real decision is usually:

- If CPU is pegged and queues grow with load, optimize CPU or add cores.
- If CPU has headroom but latency spikes and threads are waiting, optimize I/O or reduce dependencies.

Sounds simple. It is not, because real systems are messy and the bottleneck can move as you scale.

How To Tell If You Are I/O Bound Or CPU Bound

Before you touch a line of code, you classify the workload. There are three main signals: utilization, wait behaviour, and profiling output.

Here is a small cheat sheet to keep in your head or in your runbook.

| Signal | Mostly CPU bound | Mostly I/O bound |
|---|---|---|
| CPU utilization at peak | 80 percent or higher, run queue > core count | Under 60 percent while latency is bad |
| Thread or goroutine states | Many threads on CPU, few blocked on I/O | Many threads blocked on socket, disk, lock, or syscalls |
| Profiler flame graph | Tall stacks in application code on CPU | Large off CPU regions, hotspots in client libraries or drivers |
| Effect of more concurrency | Throughput improves until CPU saturates | Latency worsens due to more waiting on the same shared I/O |
| Effect of caching hot data | Helps a bit, but CPU still high | Often huge win, fewer round trips to external services |
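If you want the first row of the cheat sheet in a runbook script, it could be encoded roughly as follows. This is a sketch only: the `PeakSnapshot` type and `classify` function are hypothetical names, and the 80 and 60 percent thresholds are the rule-of-thumb values from the table, not hard limits.

```python
from dataclasses import dataclass

@dataclass
class PeakSnapshot:
    """Hypothetical container for metrics captured at peak load."""
    cpu_percent: float   # user + system utilization
    run_queue: float     # runnable tasks waiting for or using a core
    cores: int
    latency_ok: bool     # True if the latency SLO is currently met

def classify(s: PeakSnapshot) -> str:
    # Thresholds follow the cheat sheet; treat them as starting points.
    if s.cpu_percent >= 80 and s.run_queue > s.cores:
        return "likely CPU bound"
    if s.cpu_percent < 60 and not s.latency_ok:
        return "likely I/O bound"
    return "ambiguous: profile on-CPU and off-CPU time"

if __name__ == "__main__":
    print(classify(PeakSnapshot(92, 24, 16, False)))
    print(classify(PeakSnapshot(45, 3, 16, False)))
    print(classify(PeakSnapshot(70, 10, 16, False)))
```

The "ambiguous" branch is deliberate: utilization alone is only a first hint, and the remaining rows of the table need thread states and profiles to evaluate.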

1. Look at resource utilization under real load

Start by checking the obvious, but do it under a realistic load test or real peak traffic, not on your laptop.

For a typical Linux service you look at:

- CPU user plus system percent and run queue length.
- I/O wait and disk utilization for the storage volume.
- Network throughput and retransmits or saturation.

Recent performance guides from testing vendors describe bottlenecks as occurring in CPU, memory, disk, or network layers, with typical culprits being inefficient code, slow queries, or misconfigured servers. If CPU is pegged while other resources look fine, that is an early hint. If CPU is low but I/O wait or network graphs look angry, lean toward I/O.
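On Linux, the CPU numbers above can be derived from two samples of the aggregate `cpu` line in `/proc/stat`. The sketch below assumes the first eight counters (user, nice, system, idle, iowait, irq, softirq, steal) are present; the function names are illustrative. Note that iowait is idle time during which I/O was outstanding, so read it as a hint rather than a precise measure.

```python
import time

FIELDS = ("user", "nice", "system", "idle", "iowait", "irq", "softirq", "steal")

def parse_cpu_line(line):
    """Parse the aggregate 'cpu' line of /proc/stat into named counters."""
    parts = line.split()
    if not parts or parts[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line")
    values = [int(v) for v in parts[1:1 + len(FIELDS)]]
    if len(values) < len(FIELDS):
        raise ValueError("expected at least eight counters")
    return dict(zip(FIELDS, values))

def cpu_breakdown(before, after):
    """Percent of time in each bucket between two samples of the cpu line."""
    a, b = parse_cpu_line(before), parse_cpu_line(after)
    delta = {k: b[k] - a[k] for k in FIELDS}
    total = sum(delta.values())
    if total <= 0:
        raise ValueError("samples are identical or out of order")

    def pct(*keys):
        return 100.0 * sum(delta[k] for k in keys) / total

    return {
        "user": pct("user", "nice"),
        "system": pct("system", "irq", "softirq"),
        "iowait": pct("iowait"),
        "idle": pct("idle"),
    }

def read_cpu_line(path="/proc/stat"):
    with open(path) as f:
        return f.readline()

if __name__ == "__main__":
    first = read_cpu_line()
    time.sleep(1.0)  # sampling interval; longer intervals smooth out noise
    print(cpu_breakdown(first, read_cpu_line()))
```

Tools such as `vmstat` and `iostat` report the same counters; a script like this is mainly useful when you want the numbers alongside your own load test output.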

2. Inspect on CPU and off CPU time

Classical profilers show only what happens while code runs on the CPU. That works well when CPU really is the bottleneck. Modern research and tools add profiling of off CPU events, which lets you see where threads spend time waiting on things like blocking I/O, locks, or the scheduler.

In practice:

- If on CPU flame graphs show tall stacks in your application code, compression, JSON encoding, crypto, or complex loops, that is CPU work.
- If off CPU graphs show long stretches in `epoll_wait`, database client calls, HTTP client reads, or lock waits, that is I/O or contention.

This is where Liz Rice, chief open source officer at Isovalent, often advises engineers to “understand not only what runs on the CPU but what is blocked waiting on the kernel”. That mental model is exactly what off CPU profiling gives you.
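A rough way to see the on CPU versus off CPU split without a full profiler is to compare wall-clock time with CPU time for the same call. The sketch below uses Python's `time.perf_counter` and `time.process_time`; the two workload functions are stand-ins invented for the example, with `time.sleep` playing the role of a blocking I/O call.

```python
import time

def measure(fn, *args):
    """Run fn and return (wall_seconds, cpu_seconds) for the call."""
    wall_start = time.perf_counter()
    cpu_start = time.process_time()  # process CPU time; excludes time asleep
    fn(*args)
    cpu = time.process_time() - cpu_start
    wall = time.perf_counter() - wall_start
    return wall, cpu

def off_cpu_fraction(wall, cpu):
    """Share of wall-clock time that was not spent executing on a CPU."""
    if wall <= 0:
        return 0.0
    return max(0.0, 1.0 - cpu / wall)

def cpu_heavy(n=300_000):
    # Stand-in for serialization, crypto, or business logic.
    return sum(i * i for i in range(n))

def io_like(delay_s=0.05):
    # Stand-in for a blocking database or network call.
    time.sleep(delay_s)

if __name__ == "__main__":
    for name, fn in (("cpu_heavy", cpu_heavy), ("io_like", io_like)):
        wall, cpu = measure(fn)
        print(f"{name}: wall={wall * 1000:.1f} ms cpu={cpu * 1000:.1f} ms "
              f"off-CPU={off_cpu_fraction(wall, cpu):.0%}")
```

This only tells you that time is going off CPU, not where. Off CPU flame graphs answer the second question by attributing the waiting time to stacks.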

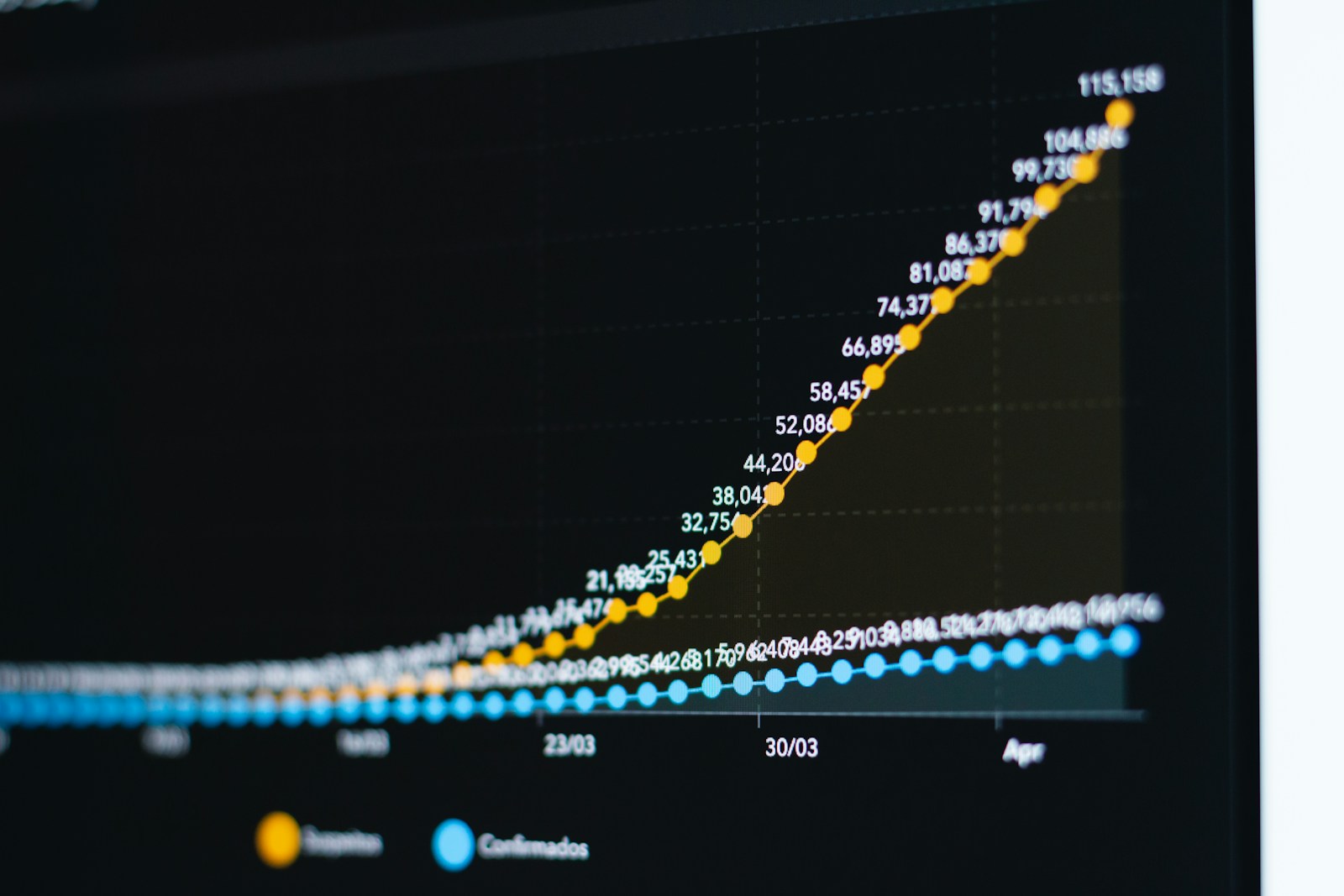

3. Watch how latency percentiles move with load

A very practical technique: run a load test and double the traffic every few minutes while watching p50, p95, and p99 latencies.

- If latency grows more or less linearly with CPU utilization, you are likely CPU limited.
- If latency stays fine until some threshold, then jumps sharply while CPU stays moderate, something in I/O is saturating or queuing.

Large scale studies of tail latency show that p99 can be 20 to 100 times worse than the median, which means that a small fraction of requests suffer very badly and users absolutely notice.
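The stepped load test lends itself to a small script that looks for the step where p99 jumps and checks what CPU was doing at that point. The following is a sketch under assumptions: the `Step` records are made-up results, a "sharp jump" is defined as p99 more than doubling between steps, and 80 percent is used as the CPU saturation threshold.

```python
from typing import NamedTuple, Optional, Sequence

class Step(NamedTuple):
    rps: int
    cpu_percent: float
    p99_ms: float

def find_knee(steps: Sequence[Step], jump_factor: float = 2.0) -> Optional[Step]:
    """Return the first step whose p99 exceeds jump_factor times the previous step's p99."""
    for prev, cur in zip(steps, steps[1:]):
        if cur.p99_ms > jump_factor * prev.p99_ms:
            return cur
    return None

def interpret(steps: Sequence[Step], cpu_saturated: float = 80.0) -> str:
    knee = find_knee(steps)
    if knee is None:
        return "no sharp jump in p99 across these steps"
    if knee.cpu_percent >= cpu_saturated:
        return (f"p99 jumps at {knee.rps} rps with CPU at "
                f"{knee.cpu_percent:.0f}%: likely CPU limited")
    return (f"p99 jumps at {knee.rps} rps with CPU at only "
            f"{knee.cpu_percent:.0f}%: look for I/O saturation or queuing")

# Hypothetical load test results, doubling traffic at each step.
steps = [
    Step(250, 15, 80),
    Step(500, 28, 85),
    Step(1000, 45, 95),
    Step(2000, 55, 800),
]

if __name__ == "__main__":
    print(interpret(steps))
```

Both thresholds are judgment calls; the point is to record the step at which the tail breaks and pair it with the resource metrics from that same interval.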

Why The Choice Matters So Much At High Load

Optimizing the wrong thing is not just a waste of time, it can make things worse.

If you are actually I/O bound and you micro optimize CPU, you might:

- Run more work per request and actually hit CPU limits sooner.
- Add complexity like manual threading or lock free structures that increase contention.

If you are CPU bound and you “fix” it by adding asynchronous I/O or more network calls, you might:

- Increase overhead per request while the cores are already saturated.
- Introduce more tail latency through extra hops and retries.

Back of the envelope guides in system design often show that total QPS is roughly cores / CPU time per request. If CPU time is already significant, the only sustainable fixes are either reducing that time or scaling out horizontally. If most of your time is spent blocking on a remote dependency, however, the ceiling moves when you add caching, better connection reuse, or more efficient queries.

So the “when” boils down to: optimize CPU when it is the limiting resource under your target load and SLO, otherwise optimize I/O. Everything below is about proving which case you are in.

A Step By Step Playbook For Choosing What To Optimize

Here is a practical five step routine you can apply to any high load service.

Step 1. Get a latency and resource baseline under load

Run a load test or capture data from a known busy period and freeze a snapshot:

- p50, p95, p99 latencies per endpoint.
- CPU utilization and run queue.
- Disk and network I/O stats.

Use a simple back of the envelope approximation to tie this to capacity. Say your service has 16 cores and the average on CPU time per request is 10 milliseconds.

- Each core can handle about `1 / 0.010`, which is 100 requests per second of pure CPU time.
- Sixteen cores give you roughly 1600 requests per second of CPU capacity.

If your load test shows you already hit 1500 requests per second at 80 percent CPU and latency starts to slide, there is a high chance you are CPU bound at that traffic level.
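The same back of the envelope estimate, written out so you can plug in your own numbers. The function name is illustrative, and the model assumes requests are purely CPU work spread evenly across cores, which real services only approximate.

```python
def cpu_capacity_rps(cores: int, cpu_ms_per_request: float) -> float:
    """Upper bound on requests per second if CPU were the only limit."""
    if cpu_ms_per_request <= 0:
        raise ValueError("cpu_ms_per_request must be positive")
    return cores * 1000.0 / cpu_ms_per_request

if __name__ == "__main__":
    capacity = cpu_capacity_rps(cores=16, cpu_ms_per_request=10)
    observed_rps = 1500
    print(f"estimated CPU ceiling: {capacity:.0f} rps")
    print(f"observed load is {observed_rps / capacity:.0%} of that ceiling")
```

For the 16 core, 10 millisecond example this gives a ceiling of 1600 requests per second, so 1500 requests per second is already about 94 percent of the estimate. Latency usually degrades before the theoretical ceiling is reached because of queuing.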

Step 2. Classify the bottleneck using profiling

Run a CPU profiler while reproducing the bad case. Look at the top functions and the “hot path” or flame graph.

- If you see heavy sampling in application code, serialization, encryption, template rendering or business logic, this is CPU work.
- If the top stacks are framework glue and you do not see much user code, that is a hint the time is actually spent elsewhere.

Next, use a tool that can show blocking I/O waits and lock contention, such as off CPU flame graphs, modern eBPF profilers, or OS specific tracers. Research on combined on and off CPU profiling shows this is key in modern systems where storage and networks are faster yet still cause long waits under certain patterns.

If off CPU graphs show long waits in database drivers or network syscalls, you are clearly I/O bound and should not start by hand tuning CPU.

Step 3. Run “what if” experiments that distinguish CPU from I/O

When the metrics are ambiguous, change the environment and see how the system reacts. A few quick experiments:

- Add cores or a faster instance type. If throughput scales and latency drops, CPU was a limiting factor.
- Add cache hits by warming cache or seeding hot keys. If latency plunges and CPU barely moves, you were dominated by I/O.
- Increase connection pool sizes or parallelism to slow dependencies. If this helps until a new plateau then hurts, you have an I/O bottleneck that is now being fully exercised.
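The cache experiment can be rehearsed in miniature. In this sketch, `slow_lookup` is a made-up stand-in for a database round trip (simulated with `time.sleep`), and `functools.lru_cache` plays the role of a warm cache. A real experiment would run against your actual dependency under load.

```python
import time
from functools import lru_cache

def slow_lookup(key: str) -> str:
    """Stand-in for a remote call: almost all of its time is spent waiting."""
    time.sleep(0.01)
    return key.upper()

# Same function behind an in-process cache.
cached_lookup = lru_cache(maxsize=128)(slow_lookup)

def run(lookup, keys):
    """Return (wall_seconds, cpu_seconds) for looking up every key once."""
    wall_start, cpu_start = time.perf_counter(), time.process_time()
    for key in keys:
        lookup(key)
    return (time.perf_counter() - wall_start,
            time.process_time() - cpu_start)

if __name__ == "__main__":
    hot_keys = ["a", "b", "c", "d"] * 5  # 20 requests over 4 hot keys
    for name, fn in (("no cache", slow_lookup), ("cached", cached_lookup)):
        wall, cpu = run(fn, hot_keys)
        print(f"{name}: wall={wall * 1000:.0f} ms cpu={cpu * 1000:.1f} ms")
```

If wall time drops sharply while CPU time barely changes, as it should here, the work was dominated by waiting rather than computing.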

This is where Charity Majors, co founder of Honeycomb, often advises you to “treat production as the only environment that really matters and run experiments instead of debates”. The goal is to move from argument to evidence. (For more on how engineering leaders converge on measurement-driven decisions, see “Leaders listen for tech recommendations from key roles first”.)

Step 4. Choose your optimization lane and go deep

Once you know which case you are in, the playbook changes.

If you are CPU bound you usually:

- Optimize algorithms and data structures and remove unnecessary work.
- Batch expensive operations and reduce per request overhead like JSON encoding or encryption.
- Enable compilation optimizations or JIT tuning and consider native extensions for very hot paths.

If you are I/O bound you usually:

- Reduce round trips to external services with aggregation and caching.
- Optimize database queries and indexes or move heavy reads to replicas.
- Move to async or event driven models that hide I/O latency behind useful work.

The key is that you do not mix these randomly. You choose the dominant bottleneck and apply changes that directly target it.
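As an illustration of the async item in the I/O bound list, the sketch below issues three independent calls first one after another and then concurrently with `asyncio.gather`. The `fetch` coroutine is hypothetical and uses `asyncio.sleep` as a stand-in for a network round trip.

```python
import asyncio
import time

async def fetch(name: str, delay_s: float) -> str:
    """Stand-in for a network call that spends delay_s waiting."""
    await asyncio.sleep(delay_s)
    return name

async def sequential(calls):
    # Each call waits for the previous one: total time is the sum of delays.
    return [await fetch(name, delay) for name, delay in calls]

async def concurrent(calls):
    # Waits overlap: total time is roughly the longest single delay.
    return list(await asyncio.gather(*(fetch(n, d) for n, d in calls)))

def timed(coro_fn, calls):
    start = time.perf_counter()
    result = asyncio.run(coro_fn(calls))
    return result, time.perf_counter() - start

if __name__ == "__main__":
    calls = [("users", 0.05), ("orders", 0.05), ("prices", 0.05)]
    for fn in (sequential, concurrent):
        result, elapsed = timed(fn, calls)
        print(f"{fn.__name__}: {result} in {elapsed * 1000:.0f} ms")
```

This helps only because the time is spent waiting. If the same three calls were CPU heavy, overlapping them on one event loop would not reduce total time.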

Step 5. Re measure, re classify, and watch for bottleneck shifts

After a successful optimization, the bottleneck often moves. Maybe your I/O caching was so successful that the database is no longer the problem and CPU is now the limiting factor.

Run the same profiling and load tests again. If the picture changed, switch lanes. If not, you may need a deeper or more structural change such as moving a service closer to the data or splitting a monolith endpoint into separate pipelines.

Worked Example: Chasing A Latency SLO

Let us do a concrete scenario with numbers.

You run an API service with this SLO:

- p99 latency below 250 milliseconds at 2000 requests per second.

Your production data at 2000 requests per second looks like this:

- CPU utilization at 55 percent.
- Disk utilization at 20 percent, network at 30 percent.
- p50 latency 40 milliseconds, p95 120 milliseconds, p99 800 milliseconds.

The median and p95 look fine but p99 is terrible. Tail latency guides describe exactly this shape, where a small percentage of requests suffer heavy delays from queuing or rare slow paths in storage or networks.

You run profiling:

- CPU flame graphs show that most requests do not spend much time on CPU.
- Off CPU graphs show that many of the slow requests sit in the database client, waiting for a connection, then send a heavy query that sometimes locks a hot row.

You try two experiments.

- Double the cores on the instance type.
  - CPU drops from 55 percent to 30 percent.
  - Latency percentiles barely change.
- Add a read-through cache for a particularly hot lookup and improve indexes.
  - Database QPS for that table drops by 60 percent.
  - p99 latency falls from 800 milliseconds to 180 milliseconds.

Even though CPU now sometimes spikes to 65 percent during flash sales, your SLO is met. This is a classic case where average CPU had plenty of headroom and you were clearly I/O bound on a shared database. Optimizing CPU would have done nothing for the tail.

If you continue to scale and later see CPU hitting 85 percent at 4000 requests per second, the correct move might then be a round of CPU optimizations or horizontal scaling. The bottleneck changed, so your strategy must, too. (For a broader framework on aligning these engineering decisions with business priorities, see “How to align tech investments with business outcomes”.)

Common Traps And Mixed Bottlenecks

Real systems are rarely purely CPU or purely I/O bound. A few patterns show up frequently.

1. Hidden CPU work inside I/O libraries

Compression in HTTP clients, TLS handshake costs, JSON parsing in drivers, and metrics exporters can all burn CPU but show up under “I/O” looking labels. This is why you always look at full stack flame graphs rather than only high level metrics.

2. Lock contention that looks like I/O waits

If threads spend time blocked on synchronization primitives, your off CPU graphs will show waits that are neither network nor disk. In those cases, optimizing lock usage or data ownership patterns is more like CPU optimization than I/O tuning. (For more on how contention surfaces in distributed systems, see “Race conditions in distributed caching”.)

3. Over concurrency for I/O workloads

Async frameworks make it easy to keep thousands of operations in flight while using a small pool of threads. For a truly I/O bound service that talks mostly to low latency caches, this can be perfect. For a service that often hits a slow database or external API, more concurrency can just mean more requests timing out together.
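One common way to keep async concurrency from overwhelming a slow dependency is to cap the number of calls in flight. The sketch below uses `asyncio.Semaphore` for that; the function names, the limit of 5, and the simulated 10 millisecond call are all assumptions for illustration.

```python
import asyncio

async def call_dependency(i: int, limiter: asyncio.Semaphore, stats: dict) -> int:
    """Stand-in for a call to a slow database or external API."""
    async with limiter:  # wait here if too many calls are already in flight
        stats["in_flight"] += 1
        stats["peak"] = max(stats["peak"], stats["in_flight"])
        await asyncio.sleep(0.01)  # simulated round trip
        stats["in_flight"] -= 1
    return i

async def run_bounded(n_requests: int, max_in_flight: int):
    """Issue n_requests calls but never more than max_in_flight at once."""
    limiter = asyncio.Semaphore(max_in_flight)
    stats = {"in_flight": 0, "peak": 0}
    results = await asyncio.gather(
        *(call_dependency(i, limiter, stats) for i in range(n_requests))
    )
    return list(results), stats["peak"]

if __name__ == "__main__":
    results, peak = asyncio.run(run_bounded(n_requests=50, max_in_flight=5))
    print(f"completed {len(results)} calls, peak in flight = {peak}")
```

The right limit depends on what the dependency can absorb. A reasonable approach is to find it with the same stepped load test used for classification rather than guessing.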

In all of these traps, the fix again is to measure where time is going under realistic high load and then decide which axis you are really constrained on.

FAQ: Quick Answers

How do I know if I should optimize CPU or I/O first?

If CPU is consistently above roughly 75 to 80 percent at your target load and on CPU profiles show hot code paths in your application, start with CPU. If CPU has headroom yet p95 or p99 latency is bad and you see many threads blocked on network or disk, start with I/O.

What if my service is both CPU and I/O bound?

That often means different endpoints or user flows have different bottlenecks. Use your tracing and profiling to segment by endpoint or feature, then fix each path separately. Avoid generic “framework wide” tuning until you know which flows matter most.

Can I fix an I/O bottleneck just by adding more CPU?

Rarely in a cost effective way. Extra CPU can hide some blocking by running more concurrent requests, but external systems such as databases or third party APIs usually become the limiting factor and you are only postponing the problem.

What metrics should I track long term?

Track latency percentiles (p50, p95, p99), CPU utilization and run queue, I/O wait, connection pool saturation, and error or timeout rates for downstream calls. Those together will tell you whether future regressions are CPU or I/O related.

Honest Takeaway

There is no magic rule like “optimize CPU first” or “always fix I/O”. The honest answer is that you optimize whichever resource is limiting your ability to meet a business SLO under real load, and you only know that by profiling both on CPU and off CPU behaviour.

If you walk away with one habit, make it this: whenever someone on your team says “this is CPU bound” or “this is I/O bound”, immediately ask “what profile or graph shows that”. Once you have that evidence, choosing when to optimize I/O versus CPU in a high load system becomes a concrete engineering decision instead of a philosophical argument.

Rashan is a seasoned technology journalist and visionary leader serving as the Editor-in-Chief of DevX.com, a leading online publication focused on software development, programming languages, and emerging technologies. With deep expertise in the tech industry and a passion for empowering developers, Rashan has transformed DevX.com into a vibrant hub of knowledge and innovation. Reach out to Rashan at [email protected]