MIT researchers announced a technique that speeds up privacy-preserving training of artificial intelligence models on edge devices, aiming to make smart systems safer and more practical. The approach targets phones, sensors, and low-power computers that sit close to where data is created. The goal is to lift accuracy and cut energy use without sending raw data to the cloud. The promise is especially strong for clinics, schools, and field sites with tight budgets or unreliable networks.

The team described a new framework that focuses on training models on-device, not just running them. It is designed to keep sensitive information local while still improving the model with new data. The researchers say the method speeds up training; slow performance has often held back private training on hardware with limited memory and compute capacity.

“Their new framework could enable more accurate, efficient, and secure AI models to be used in under-resourced settings.”

Why Edge Training Matters

Modern AI thrives on large datasets, yet collecting and centralizing personal information poses clear risks. Training on the device helps reduce exposure since raw records do not leave local storage. It can also cut network costs and lower latency for updates.

But on-device training is hard. Edge hardware has limited processing power and battery life. Privacy safeguards like noise injection and encrypted aggregation can slow learning. Communication between many devices can also strain slow networks.
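Encrypted aggregation comes in several forms, and the announcement does not say which one, if any, the MIT framework uses. As a generic illustration only, the sketch below shows pairwise additive masking, the core idea behind secure-aggregation protocols: each device hides its update behind random masks that cancel out when the server adds everything up, so the server learns the total but not any single contribution. The helper names (`pair_mask`, `mask_updates`, `aggregate`) and the pre-shared seed per device pair are assumptions for illustration; real protocols derive those seeds through key agreement and must tolerate devices that drop out mid-round, which this sketch does not.

```python
import random
from typing import Dict, List, Tuple

# Generic pairwise-masking sketch in the style of secure aggregation.
# Hypothetical helpers; NOT the MIT technique. Pair seeds are assumed to be
# pre-shared, and device dropouts are not handled.

MODULUS = 2**32  # all arithmetic happens in the integer ring mod 2^32


def pair_mask(seed: int, length: int) -> List[int]:
    """Deterministic mask that both devices in a pair can regenerate from their shared seed."""
    rng = random.Random(seed)
    return [rng.randrange(MODULUS) for _ in range(length)]


def mask_updates(updates: Dict[int, List[int]],
                 pair_seeds: Dict[Tuple[int, int], int]) -> Dict[int, List[int]]:
    """For each pair (a, b), device a adds the shared mask and device b subtracts it."""
    masked = {}
    for dev, update in updates.items():
        vec = list(update)
        for (a, b), seed in pair_seeds.items():
            if dev not in (a, b):
                continue
            mask = pair_mask(seed, len(vec))
            sign = 1 if dev == a else -1
            vec = [(v + sign * m) % MODULUS for v, m in zip(vec, mask)]
        masked[dev] = vec
    return masked


def aggregate(masked: Dict[int, List[int]]) -> List[int]:
    """Server sums the masked vectors; the pairwise masks cancel, leaving the true sum."""
    length = len(next(iter(masked.values())))
    total = [0] * length
    for vec in masked.values():
        total = [(t + v) % MODULUS for t, v in zip(total, vec)]
    return total


if __name__ == "__main__":
    # Integer-encoded updates from three hypothetical devices.
    updates = {0: [1, 2, 3], 1: [4, 5, 6], 2: [7, 8, 9]}
    # One shared seed per device pair (a < b); the values are arbitrary.
    seeds = {(0, 1): 11, (0, 2): 22, (1, 2): 33}
    masked = mask_updates(updates, seeds)
    print(masked[0])          # looks like random numbers to the server
    print(aggregate(masked))  # [12, 15, 18], the element-wise sum of the raw updates
```

Because each pair's mask is added by one device and subtracted by the other, the masks vanish in the sum. Real-valued model updates would first need a fixed-point integer encoding, an extra step not shown here. The cryptographic bookkeeping and extra messages are part of why such safeguards can slow training on constrained networks.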

The MIT technique targets these bottlenecks. By accelerating the underlying privacy-preserving process, the team aims to make local learning fast enough for real use. If the technique succeeds at scale, organizations could update models more often and with less compromise on user privacy.

What the Researchers Claim

The researchers emphasize three outcomes: higher accuracy, greater efficiency, and stronger security for edge-trained models. The central idea is to upgrade the training pipeline so that devices contribute learning signals without exposing raw data and without heavy overhead.
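The announcement does not specify which training protocol the framework builds on. As background only, federated averaging (FedAvg) is one widely used pattern in which devices "contribute learning signals" by sending model updates rather than raw data. The minimal sketch below assumes a toy one-parameter linear model, y ≈ w·x; the names `local_update` and `federated_round` are hypothetical, and this is not the MIT framework.

```python
from typing import List, Tuple

# Minimal FedAvg-style sketch for a one-parameter linear model y ≈ w * x.
# Generic illustration only; not the MIT framework. Names are hypothetical.

Dataset = List[Tuple[float, float]]  # (x, y) pairs that never leave the device


def local_update(w: float, data: Dataset, lr: float = 0.1, epochs: int = 5) -> float:
    """Run a few epochs of gradient descent on mean squared error using only local data."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global: float, devices: List[Dataset]) -> float:
    """Each device trains locally; the server averages returned weights, weighted by sample count."""
    total = sum(len(d) for d in devices)
    new_weights = [local_update(w_global, d) for d in devices]
    return sum(w * len(d) for w, d in zip(new_weights, devices)) / total


if __name__ == "__main__":
    # Three devices whose private data all follow y = 3x.
    devices = [
        [(1.0, 3.0), (2.0, 6.0)],
        [(0.5, 1.5)],
        [(1.5, 4.5), (3.0, 9.0)],
    ]
    w = 0.0
    for _ in range(10):
        w = federated_round(w, devices)
    print(round(w, 3))  # close to 3.0
```

Only the single weight crosses the network each round; the `(x, y)` pairs stay in each device's list. Weighting by sample count is the usual FedAvg choice, so devices holding more data pull the average harder.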

- Accuracy: Better use of on-device data can reduce bias and improve predictions.

- Efficiency: Faster local updates can save battery and bandwidth.

- Security: Keeping data on-device limits the fallout from server breaches.

The announcement does not detail the method's internals, but its framing suggests an approach that reduces computation and communication costs, two common pain points in private edge learning.

Potential Impact in Under-Resourced Settings

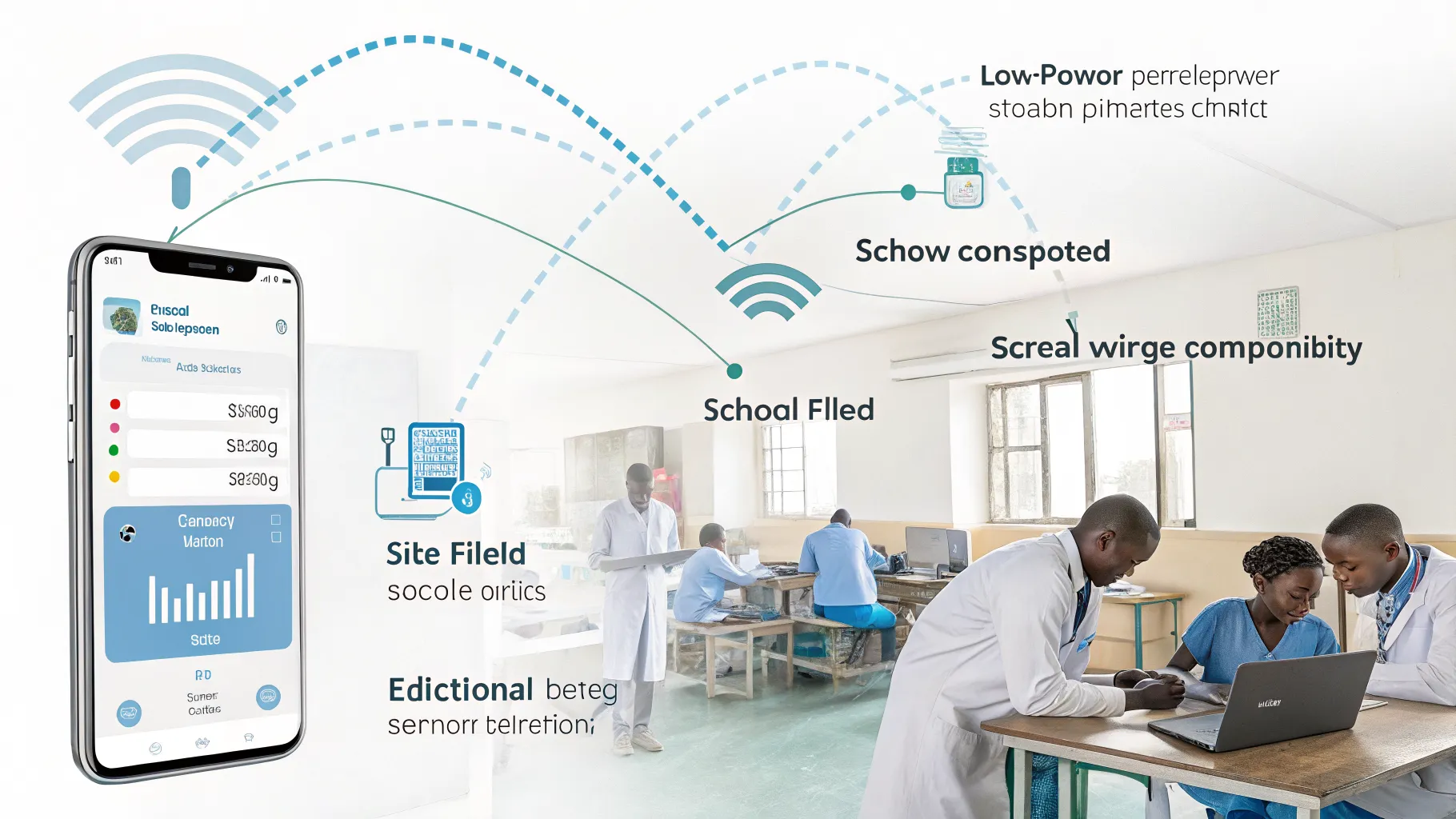

Local training can be a practical fit for clinics without stable internet, farms with remote sensors, or classrooms with shared tablets. Devices can learn from fresh data even when offline, then sync results later.

In health, a phone-based model might learn from new patient entries without sending sensitive records to a server. In education, reading apps could adapt to children’s progress on-device. In agriculture, field sensors could refine forecasts from local weather shifts.

These gains depend on careful design. Energy budgets are tight. Storage is limited. Any speedup that preserves privacy while lifting accuracy could make a clear difference where resources are scarce.

Balancing Privacy, Security, and Performance

Private training is not risk-free. Model updates can still leak information if poorly protected. Devices can be lost or tampered with. Strong safeguards and auditing are needed across the full pipeline.

There are also trade-offs. Stricter privacy settings may slow learning or reduce accuracy if not optimized. The MIT work responds to this tension by trying to cut overhead while holding privacy steady.
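One common form of the noise injection mentioned earlier is the clip-and-noise step used in differentially private SGD (DP-SGD). The sketch below is a generic illustration, not MIT's method, and it assumes a `noise_multiplier` parameter as a stand-in for "stricter privacy settings": larger values add Gaussian noise with a larger standard deviation relative to the clipping bound, which is the accuracy and speed cost the paragraph describes. It performs no privacy accounting, so on its own it provides no formal (epsilon, delta) guarantee.

```python
import math
import random
from typing import List

# DP-SGD-style clip-and-noise step (generic illustration, not the MIT method).
# No privacy accounting is performed, so this alone gives no formal guarantee.


def clip(grad: List[float], max_norm: float) -> List[float]:
    """Scale a per-example gradient so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def private_mean_gradient(per_example: List[List[float]], max_norm: float,
                          noise_multiplier: float, rng: random.Random) -> List[float]:
    """Clip each example's gradient, sum them, add Gaussian noise, then average."""
    clipped = [clip(g, max_norm) for g in per_example]
    dim = len(clipped[0])
    summed = [sum(g[i] for g in clipped) for i in range(dim)]
    sigma = noise_multiplier * max_norm  # stricter privacy -> larger noise scale
    noisy = [s + rng.gauss(0.0, sigma) for s in summed]
    return [v / len(per_example) for v in noisy]


if __name__ == "__main__":
    grads = [[3.0, 4.0], [0.3, 0.4], [-1.0, 0.0]]  # hypothetical per-example gradients
    for z in (0.0, 1.0, 4.0):
        rng = random.Random(0)
        print(z, private_mean_gradient(grads, max_norm=1.0, noise_multiplier=z, rng=rng))
```

Clipping bounds how much any single example can move the update, and the noise hides what remains. Because the noise's standard deviation scales with `noise_multiplier` while the clipped signal does not, stricter settings make each step less informative, so training may need more steps or settle at lower accuracy.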

Independent validation will matter. External testing across device types, datasets, and network conditions would help confirm real-world benefits and limits.

What to Watch Next

Key questions remain. Will the technique generalize across vision, language, and sensor models? Can it handle millions of devices with spotty connections? How does it perform under strict privacy budgets?

Benchmarks against standard baselines would show whether speed gains come without hidden costs. Clear documentation, reproducible code, and open evaluations would build trust and help adoption.

Policy also looms large. As regulators press for stronger data protection, practical private training could help organizations meet rules without freezing innovation.

MIT’s announcement signals momentum for safer, faster training at the edge. If the gains hold, under-resourced settings could see smarter tools that respect privacy and work within real limits. The next steps are proof at scale, transparent testing, and careful deployment. Those will show whether private on-device training can move from promise to standard practice.

Rashan is a seasoned technology journalist and visionary leader serving as the Editor-in-Chief of DevX.com, a leading online publication focused on software development, programming languages, and emerging technologies. With his deep expertise in the tech industry and his passion for empowering developers, Rashan has transformed DevX.com into a vibrant hub of knowledge and innovation.